I often find myself marveling at the sheer processing power packed into the devices we use every day. From our smartphones to the supercomputers mapping the cosmos, silicon microchips have been the bedrock of this digital revolution for over half a century. But recently, I started thinking about the future, about the inevitable limits of even the most ingenious engineering. We've pushed electrons through silicon to incredible speeds, but what happens when we hit a wall – a physical, thermal, and energetic barrier that silicon just can't breach?

This isn't a hypothetical distant future; it's a challenge we're facing right now. As chips get denser, they generate more heat, consume more power, and become increasingly difficult to manufacture. It's a fundamental problem that has scientists and engineers scrambling for the next big leap. What if the answer isn't about pushing electrons harder, but about replacing them entirely? What if the future of computing doesn't run on electricity, but on *light*?

### The Silicon Ceiling: A Looming Challenge

For decades, **Moore's Law** has been our guiding star in computing. It is not a law of physics but an empirical observation: the number of transistors on a microchip roughly doubles every two years. This relentless miniaturization has given us exponentially faster and more powerful devices. However, this elegant progression is starting to falter. The physical limits of silicon are becoming undeniable.
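To put the doubling rule in numbers, here is a minimal Python sketch. The function name `transistor_count` and the starting figure of 1,000 transistors are illustrative assumptions, not data from any real chip.

```python
def transistor_count(initial: float, years: float, doubling_period: float = 2.0) -> float:
    """Project a transistor count, assuming it doubles every `doubling_period` years."""
    return initial * 2 ** (years / doubling_period)


# Hypothetical starting point: a chip with 1,000 transistors.
for years in (2, 10, 20):
    print(years, "years ->", round(transistor_count(1_000, years)), "transistors")
```

Twenty years at this pace is ten doublings, a factor of 1,024, which is why even a modest slowdown in the cadence matters so much.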

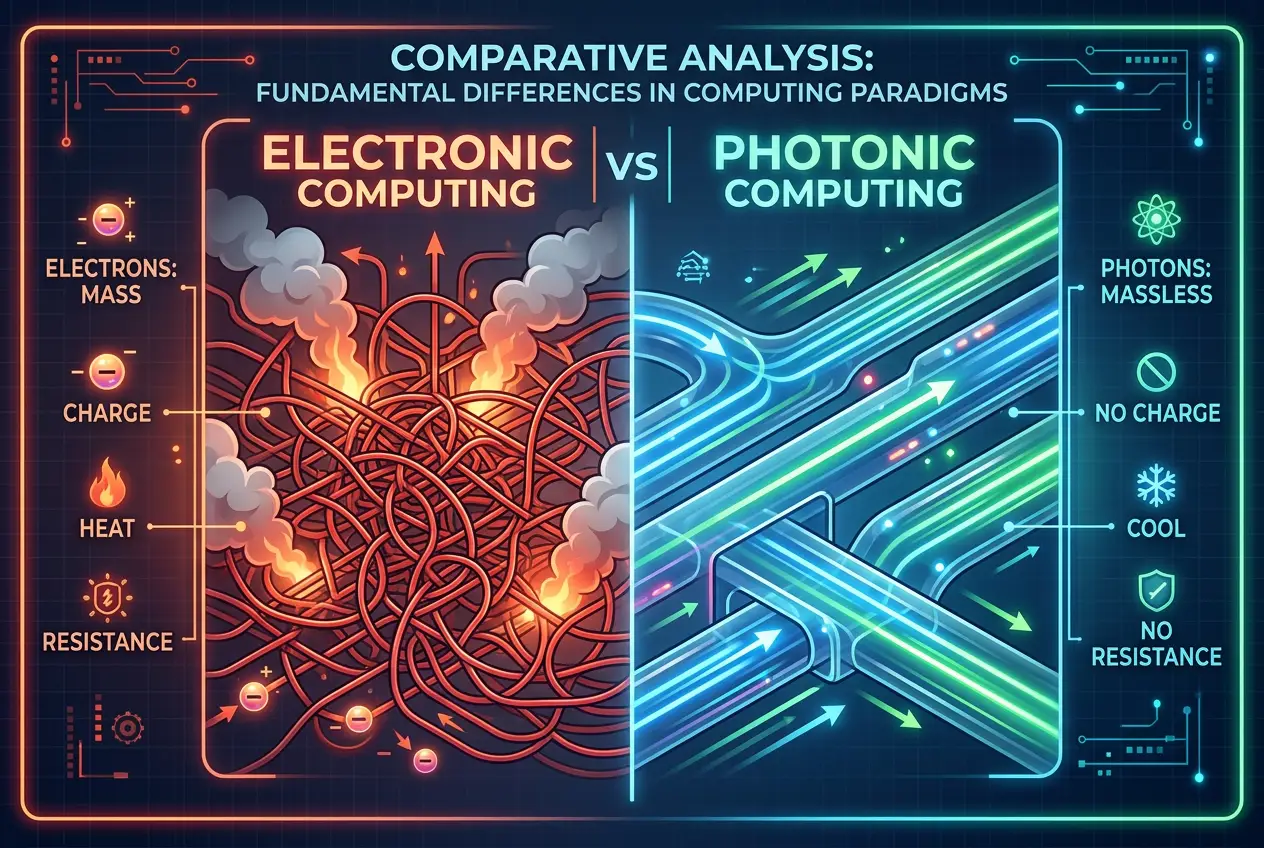

Think about it: electrons, while tiny, still have mass and charge. When they move through the microscopic pathways of a chip, they encounter resistance, generating heat. This heat is the enemy of efficiency and speed. It limits how tightly we can pack components, how fast we can clock them, and how much energy we have to expend just to keep them cool. As transistors shrink toward atomic scales, quantum effects such as electron tunneling let current leak through barriers that are supposed to block it, making transistors less predictable and less reliable.

This isn't to say silicon is obsolete; far from it. It will continue to power the vast majority of our technology for the foreseeable future. But to achieve the next monumental leaps in computing – the kind needed for advanced AI, quantum simulations, or tackling truly "big data" problems – we need a new paradigm. This is where the elegant, massless photon steps into the spotlight.

### Enter Photonics: Computing with Light

Imagine a computer where information isn't carried by the flow of electrons, but by pulses of light. This is the core concept behind **photonic computing**, also known as optical computing. Instead of electrical signals, light signals (photons) travel through optical waveguides, which are essentially tiny "light pipes" etched onto a chip.

The shift from electrons to photons offers several tantalizing advantages:

* **Speed:** Nothing travels faster than light, but raw propagation speed is not the whole story. Light in a silicon waveguide moves well below its vacuum speed, and electrical signals in a wire also propagate at a sizeable fraction of the speed of light. The real advantage is that optical links avoid the resistance and capacitance that slow electrical wires down as they get thinner and longer, so photons can carry data at very high rates over both short and long distances with far less degradation.

* **Energy Efficiency:** Light travelling through a waveguide does not suffer the resistive heating that electrons cause in a wire. Lasers and modulators still draw power, but moving data optically can dissipate far less heat than moving it electrically, which means energy savings and reduced cooling requirements. This is a game-changer for large data centers that consume enormous amounts of power.

* **Bandwidth:** One of the most compelling features of light is its ability to carry multiple streams of data simultaneously using different wavelengths or colors – a technique called **wavelength-division multiplexing (WDM)**. Think of it like a fiber optic cable carrying hundreds of different conversations on distinct light channels, all at once. An electrical wire, in contrast, typically carries a single signal at a time.

* **Reduced Crosstalk:** Electrical signals can couple into neighboring wires when pathways are packed too closely, causing errors. Photons carry no charge, so in ordinary (linear) materials light beams pass through each other without interacting. This allows complex optical routing, including crossing paths, with far less signal degradation.
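The bandwidth and crosstalk points can be illustrated with a toy model of wavelength-division multiplexing. This is only a sketch under simplifying assumptions: the small integers in `carriers` stand in for distinct optical wavelengths, each channel carries a single on/off bit, and the receiver separates channels by correlation in software, whereas real WDM hardware uses optical filters. The function names are hypothetical.

```python
import math


def multiplex(bits, carriers, samples=256):
    """Sum one cosine carrier per channel; a 0 bit switches that carrier off."""
    return [
        sum(bit * math.cos(2 * math.pi * f * n / samples)
            for bit, f in zip(bits, carriers))
        for n in range(samples)
    ]


def demultiplex(signal, carriers):
    """Recover each channel's bit by correlating the shared signal with its carrier."""
    samples = len(signal)
    bits = []
    for f in carriers:
        amplitude = (2 / samples) * sum(
            s * math.cos(2 * math.pi * f * n / samples)
            for n, s in enumerate(signal)
        )
        bits.append(1 if amplitude > 0.5 else 0)
    return bits


carriers = [5, 9, 14, 20]   # stand-ins for four wavelengths
sent = [1, 0, 1, 1]         # one bit per channel, all sent at once
received = demultiplex(multiplex(sent, carriers), carriers)
print(received == sent)
```

All four channels share one signal, yet each bit comes back intact because carriers at different frequencies do not disturb one another. That is the property WDM relies on.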

### How Does Photonic Computing Actually Work?

At its heart, photonic computing leverages the properties of light to perform computational tasks. Instead of transistors that switch electron flows, we use optical switches that redirect or modulate light beams.
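One widely used building block for redirecting light is the Mach-Zehnder interferometer: split a beam in two, delay one arm, and recombine. The sketch below models an idealized, lossless version with two 50:50 couplers. It is meant as an illustration of the principle rather than a description of any specific device, and the function name `mzi_output_powers` is hypothetical.

```python
import cmath
import math


def mzi_output_powers(phase_shift):
    """Return (bar, cross) output power fractions for light entering the top input."""
    s = 1 / math.sqrt(2)

    def coupler(a, b):
        # Ideal 50:50 coupler: half the power crosses over, picking up a 90-degree phase.
        return s * (a + 1j * b), s * (1j * a + b)

    top, bottom = coupler(1 + 0j, 0j)        # split the input beam into two arms
    top *= cmath.exp(1j * phase_shift)       # delay the top arm
    top, bottom = coupler(top, bottom)       # recombine: the two arms interfere
    return abs(top) ** 2, abs(bottom) ** 2


for phase in (0.0, math.pi / 2, math.pi):
    bar, cross = mzi_output_powers(phase)
    print(f"phase={phase:.2f} rad  bar={bar:.2f}  cross={cross:.2f}")
```

With no phase shift all the light leaves through the cross port, and with a shift of π it leaves through the bar port, so changing the phase in one arm steers the beam between two outputs.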

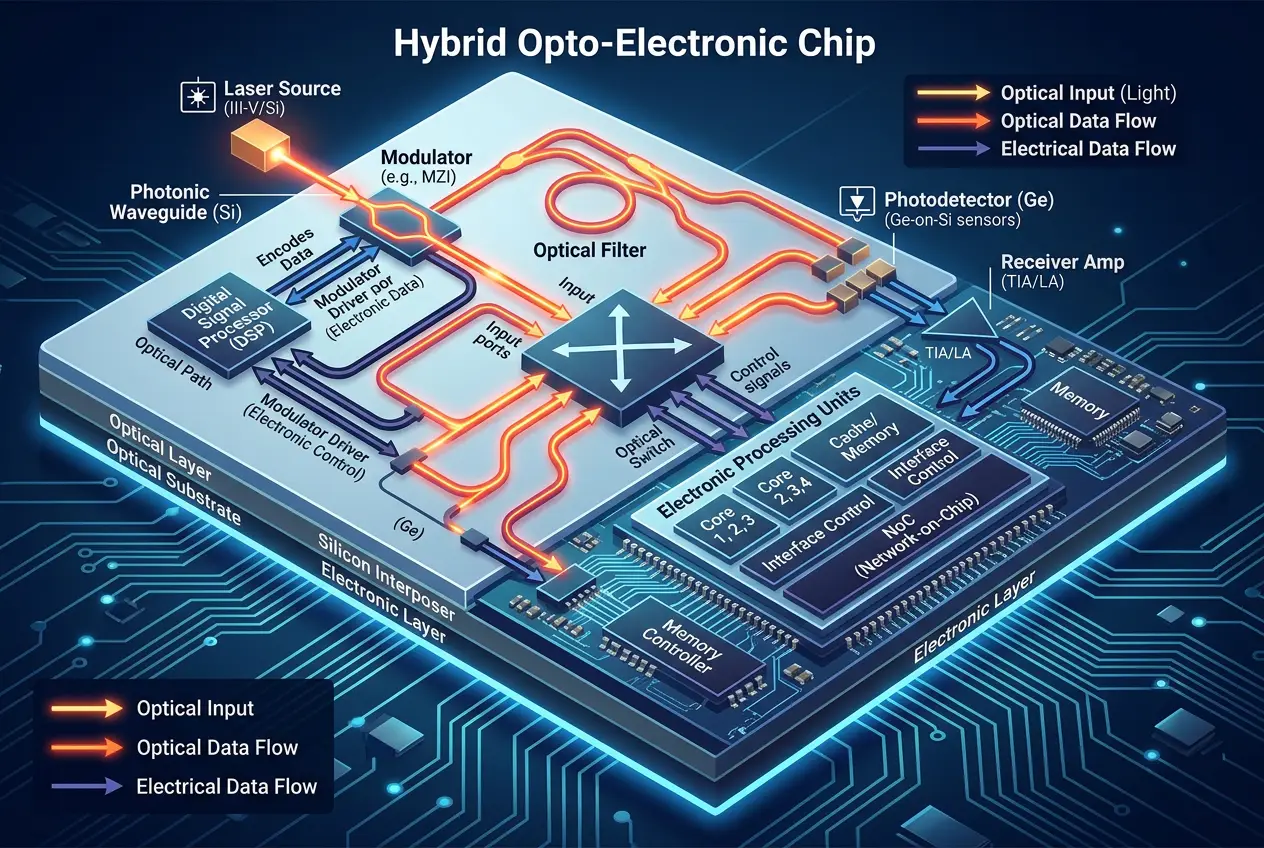

1. **Light Generation:** Lasers, often semiconductor lasers, are used to generate coherent light signals.

2. **Waveguides:** These light signals are guided through microscopic channels on a chip, acting as the "wires" of the optical circuit. These are typically made from materials like silicon nitride or silicon itself, which can effectively confine and direct light.

3. **Modulation:** Information is encoded onto the light beam by changing its intensity, phase, or polarization. Devices called modulators convert electrical signals (input) into optical signals.

4. **Optical Switches & Gates:** These components manipulate the light signals. For example, an optical switch can redirect a light beam based on another control light beam, effectively performing a logical operation. Researchers are developing integrated photonic circuits that contain all these elements on a single chip. For more detail on integrated photonics, you can refer to its [Wikipedia page](https://en.wikipedia.org/wiki/Integrated_optics).

5. **Detection:** At the output, photodetectors convert the optical signals back into electrical signals that can be read by conventional electronic circuits.
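The steps above can be sketched as a tiny end-to-end link: a laser is switched on and off to encode bits, the waveguide attenuates the light, and a photodetector thresholds what arrives. The power levels, the 3 dB loss, and the detection threshold are made-up illustrative values, the function names are hypothetical, and the switching stage (step 4) is left out for brevity.

```python
def modulate(bits, laser_power_mw=1.0):
    """Steps 1 and 3: on-off keying, laser light passes for a 1 and is blocked for a 0."""
    return [laser_power_mw * bit for bit in bits]


def propagate(powers_mw, loss_db=3.0):
    """Step 2: the waveguide attenuates the light by `loss_db` decibels."""
    factor = 10 ** (-loss_db / 10)
    return [p * factor for p in powers_mw]


def detect(powers_mw, threshold_mw=0.25):
    """Step 5: the photodetector reports a 1 when enough light arrives."""
    return [1 if p > threshold_mw else 0 for p in powers_mw]


message = [1, 0, 1, 1, 0]
received = detect(propagate(modulate(message)))
print(received == message)
```

If the loss grows too large (try `loss_db=10.0`), the 1s fall below the threshold and are read as 0s, which is why low-loss waveguides and sensitive detectors matter.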

This might sound like a purely optical system, but most current research focuses on **opto-electronic hybrid systems**. This means combining the best of both worlds: using electronics for complex processing and memory (where electrons still excel for stability and density), and photonics for high-speed data transfer and inter-chip communication.

I've learned that a key component enabling this is the silicon photonics platform, which allows optical components to be fabricated using existing semiconductor manufacturing techniques, making integration with electronic circuits more feasible.

### Applications on the Horizon

The potential applications of photonic computing are immense, particularly in areas where speed and bandwidth are paramount:

* **Data Centers:** With the explosion of cloud computing and AI, data centers are constantly battling energy consumption and latency. Photonic interconnects within and between servers can drastically reduce power usage and speed up data transfer, making them significantly more efficient.

* **Artificial Intelligence and Machine Learning:** Training complex AI models requires immense computational power and data movement. Photonic accelerators could perform certain AI operations, like matrix multiplications, at unprecedented speeds and with greater energy efficiency than electronic processors.

* **High-Performance Computing (HPC):** Supercomputers could see a significant boost in performance by replacing electrical data buses with optical ones, overcoming bottlenecks that currently limit the flow of data between processing units.

* **Telecommunications:** While fiber optics already use light for long-distance communication, integrating photonic components directly into network equipment can further enhance speed and capacity.
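To see why matrix multiplication maps naturally onto optics, consider one simple conceptual model: input values are encoded as light intensities, each weight is an attenuator that lets a fraction of the light through, and each photodetector sums whatever reaches it. The sketch below simulates that model in plain Python. It is an assumption-laden illustration (non-negative values only, no noise), not a description of how any particular photonic accelerator works, and `photonic_matvec` is a hypothetical name.

```python
def photonic_matvec(weights, inputs):
    """Each output detector sums the light passing through one row of attenuators."""
    for row in weights:
        if any(not 0.0 <= w <= 1.0 for w in row):
            raise ValueError("a passive attenuator transmits between 0% and 100% of the light")
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]


weights = [[0.5, 0.25],   # transmission fractions feeding detector 0
           [1.0, 0.0]]    # transmission fractions feeding detector 1
inputs = [2.0, 4.0]       # input light intensities (arbitrary units)
print(photonic_matvec(weights, inputs))  # [2.0, 2.0]
```

In hardware, every multiply-and-add in this loop would happen at once as the light passes through the chip, which is where the hoped-for speed and efficiency gains come from.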

This isn't just about faster calculations; it's about enabling entirely new capabilities. Imagine AI models trained in minutes instead of days, or scientific simulations running orders of magnitude faster. The implications are profound, similar to how quantum computing promises to revolutionize certain problem sets. If you're curious about other computational paradigms, you might find our blog on [Black Holes: Nature's Ultimate Quantum Computers?](/blogs/black-holes-natures-ultimate-quantum-computers-4410) fascinating.

### The Road Ahead: Challenges to Overcome

While the advantages of photonic computing are compelling, the path to widespread adoption is not without its hurdles.

1. **Integration Complexity:** Combining optical and electrical components on a single chip or within a system is incredibly challenging. Electronic chips are mature, dense, and relatively easy to manufacture. Optical components, while advanced, are still catching up in terms of integration density and cost-effectiveness.

2. **Light-Matter Interaction:** Efficiently converting electrical signals to optical signals and back (electro-optic and opto-electric conversion) is crucial. These conversions can introduce latency and energy loss, somewhat diminishing the photonic advantage.

3. **Fabrication Challenges:** Producing highly precise optical waveguides and components at mass-production scale requires specialized techniques. The costs associated with R&D and manufacturing are still high compared to established silicon processes.

4. **Non-Linear Optics:** While photons generally don't interact, creating complex logical gates often requires non-linear optical effects, which can be difficult to control and scale.
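The conversion overhead in point 2 suggests a simple break-even view: an optical link pays a fixed energy cost to convert signals at each end, while an electrical wire pays a cost that grows with length. The sketch below computes the length beyond which the optical link wins. Every number in it is a hypothetical placeholder chosen for easy arithmetic, not a measured value, and `break_even_length_mm` is an illustrative name.

```python
def break_even_length_mm(conversion_pj_per_bit,
                         electrical_pj_per_bit_mm,
                         optical_pj_per_bit_mm=0.0):
    """Link length beyond which optical transfer uses less energy per bit."""
    saving_per_mm = electrical_pj_per_bit_mm - optical_pj_per_bit_mm
    if saving_per_mm <= 0:
        return float("inf")   # the optical link never pays off
    return conversion_pj_per_bit / saving_per_mm


# Hypothetical figures: 1 pJ/bit to convert, 0.2 pJ/bit per mm of electrical wire.
print(break_even_length_mm(1.0, 0.2), "mm")
```

Under this toy model, shrinking the conversion cost pulls the break-even point closer, which is one way to read the push to bring optics nearer to the processor.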

Despite these challenges, research is advancing rapidly. Companies like Intel, IBM, and numerous startups are heavily investing in silicon photonics, recognizing its inevitable role in the future of computing. They're making strides in integrating lasers, modulators, and detectors directly onto silicon wafers. For a deeper dive into the challenges and advancements, the [Wikipedia article on Optical Computers](https://en.wikipedia.org/wiki/Optical_computer) offers a comprehensive overview.

### Beyond Silicon: A Glimpse of the Future

I believe that the transition from purely electronic to hybrid opto-electronic, and eventually, perhaps, to purely photonic computing, is not a matter of "if," but "when." The physical limitations of pushing electrons through silicon are a hard wall, and light offers a promising path around it.

We're moving towards an era where data volumes are astronomical, and the demand for instantaneous processing and transfer is insatiable. Technologies like artificial intelligence, virtual reality, and complex scientific simulations are pushing the boundaries of what current computing architectures can deliver. Photonic computing promises not just incremental improvements, but potentially exponential leaps in performance and efficiency.

Imagine a future where your devices run cooler, last longer on a charge, and process information at speeds that currently seem unimaginable. That future is being built, photon by photon, in labs and foundries around the world. It’s a testament to human ingenuity, always seeking to overcome limits, whether by exploring new materials like those discussed in our article on [Diamond Chips: Computing Beyond Silicon's Limits?](/blogs/diamond-chips-computing-beyond-silicons-limits-5660) or entirely new paradigms.

The journey to fully realize the promise of photonic computing is still ongoing, but the light at the end of the tunnel – or rather, the light *within* the circuits – is getting brighter every day. It’s an exciting time to be witnessing the dawn of a new era in computational technology.

### Frequently Asked Questions

Silicon chips face limitations such as increasing heat generation, high power consumption, and physical size constraints as transistors are miniaturized. Photonic computing aims to overcome these by using light, which generates less heat, consumes less power, and can carry more data simultaneously.

In a photonic computer, data is transmitted using pulses of light (photons) through optical waveguides. These waveguides are microscopic channels on a chip, acting like 'light wires,' moving data much faster and with less resistance than electrons in traditional circuits.

Currently, photonic computing primarily focuses on opto-electronic hybrid systems. This means it integrates photonic components for high-speed data transfer and specific computational tasks with traditional electronic components, which are still very efficient for complex processing and memory.

Major challenges include the complexity of integrating optical and electrical components on a single chip, efficient conversion between electrical and optical signals, high manufacturing costs, and scaling the technology for mass production. Research is ongoing to overcome these hurdles.

Industries that require immense processing power, high bandwidth, and energy efficiency will benefit significantly. This includes data centers, artificial intelligence and machine learning, high-performance computing (supercomputers), and advanced telecommunications.

Verified Expert

Alex Rivers

A professional researcher since age twelve, I delve into mysteries and ignite curiosity by presenting an array of compelling possibilities. I will heighten your curiosity, but by the end, you will possess profound knowledge.
