I remember the first time I saw a truly autonomous vehicle prototype navigating a complex city street. It wasn’t a sleek concept car on a track; it was a seemingly ordinary vehicle, yet it moved with an uncanny precision, anticipating turns, yielding to pedestrians, and reacting to traffic lights, all without a human touch on the wheel. My mind immediately jumped to one question: *how?* How could a machine perceive the chaotic, ever-changing tapestry of a road environment and make split-second decisions as competently as a human driver, or perhaps even more so? This isn't just about programming; it's about giving a machine "eyes" and a "brain" that can understand the world.

For years, the promise of self-driving cars has captivated our collective imagination. From science fiction tales to today's burgeoning prototypes, the idea of autonomous vehicles navigating our roads has shifted from fantasy to a tangible, rapidly evolving reality. But to truly appreciate this leap in technology, we need to peel back the layers and understand the complex sensory systems that allow these vehicles to "see" and "think." It’s a process far more intricate than simply slapping a camera on a car.

### Beyond Human Vision: The Multi-Sensory Approach

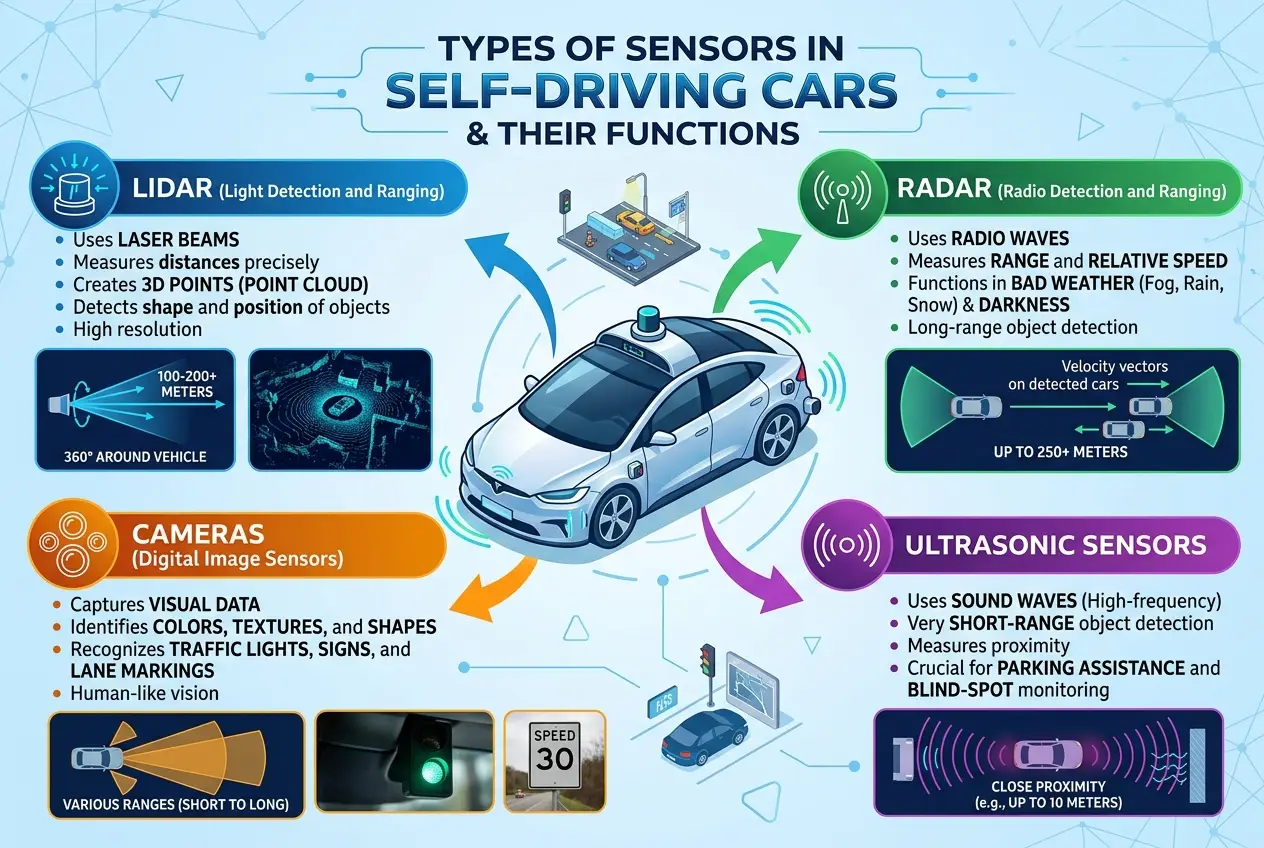

When we drive, our eyes are our primary tools, backed by our brains' incredible ability to process visual information, recognize patterns, and anticipate actions. We see colors, shapes, and movements, infer intentions, and understand context. Self-driving cars, however, don't rely on a single pair of "eyes." Instead, they employ a sophisticated array of sensors, each providing a unique piece of the environmental puzzle. This multi-sensory approach is crucial because each sensor type has its strengths and weaknesses, and by combining their data, the car builds a robust, comprehensive, and redundant understanding of its surroundings.

Think of it like having multiple senses working in concert: sight, hearing, touch. A human might struggle to see clearly in heavy fog, but a car equipped with the right sensors can still "see" through it. This redundancy is a cornerstone of autonomous safety.

#### The Digital Eyes: Cameras

At the forefront of a self-driving car's perception system are **cameras**. These are perhaps the most intuitive sensors, as they mirror human vision. High-resolution cameras capture vast amounts of visual data, allowing the car’s onboard computer to:

* **Detect Lanes:** Recognize lane markings, road edges, and barriers.

* **Identify Traffic Lights and Signs:** Read and interpret regulatory signs (stop, yield) and traffic signals.

* **Spot Objects:** Distinguish between pedestrians, cyclists, other vehicles, and static obstacles.

* **Track Movement:** Monitor the speed and direction of moving objects.

* **Recognize Colors and Textures:** Crucial for understanding diverse road conditions and differentiating objects.

However, cameras, much like human eyes, are susceptible to environmental challenges. They struggle in low light, heavy rain, dense fog, or blinding sunlight. This is where other sensors come into play.

#### The Invisible Rays: LiDAR

**LiDAR** (Light Detection and Ranging) is a crucial technology that provides a three-dimensional map of the environment. It works by emitting pulsed laser light and measuring the time it takes for the light to return to the sensor. This creates a detailed "point cloud" that represents the surrounding objects and terrain. The advantages of LiDAR are significant:

* **Precise Depth Perception:** Creates extremely accurate 3D models of the world, essential for understanding distances and object shapes.

* **Robust in Low Light:** Unlike cameras, LiDAR is largely unaffected by lighting conditions.

* **High Resolution:** Can detect small obstacles and differentiate objects with high fidelity.

While powerful, LiDAR can be expensive and its performance can degrade in extreme weather like heavy snow or rain, which can scatter the laser beams. You can learn more about how laser technology underpins many modern innovations on Wikipedia's page about [Lidar](https://en.wikipedia.org/wiki/Lidar).
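To make the time-of-flight idea concrete, here is a minimal Python sketch. It assumes an idealized sensor that reports each return as a round-trip time plus the beam's azimuth and elevation angles; the function names and sample returns are hypothetical, and real LiDAR units handle this conversion internally while also correcting for mounting, vehicle motion, and beam timing. Because the pulse travels out and back, the range is half of the speed of light multiplied by the elapsed time, and each range plus its two angles becomes one (x, y, z) point in the cloud.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second

def tof_to_range(round_trip_s: float) -> float:
    """One-way distance (m) from a round-trip time of flight.

    The pulse goes to the target and back, so we halve the total path.
    """
    return SPEED_OF_LIGHT * round_trip_s / 2.0

def to_point(range_m: float, azimuth_deg: float, elevation_deg: float) -> tuple:
    """Convert one return (range + beam angles) into (x, y, z) in the sensor frame."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)  # projection onto the ground plane
    return (horizontal * math.cos(az), horizontal * math.sin(az), range_m * math.sin(el))

# Hypothetical returns: (round-trip time in seconds, azimuth deg, elevation deg)
returns = [(200e-9, 0.0, 0.0), (400e-9, 90.0, 0.0), (100e-9, 0.0, 30.0)]
point_cloud = [to_point(tof_to_range(t), az, el) for t, az, el in returns]
for p in point_cloud:
    print(tuple(round(c, 2) for c in p))
```

A 200-nanosecond round trip already corresponds to roughly 30 metres, which is why these sensors depend on very precise timing electronics.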

#### The Unseen Waves: Radar

**Radar** (Radio Detection and Ranging) systems use radio waves to detect objects and measure their speed and distance. Unlike LiDAR and cameras, radar excels in adverse weather conditions. Radio waves can penetrate rain, fog, and even light snow, making radar an indispensable component for all-weather autonomy. Its key functions include:

* **Long-Range Detection:** Can spot objects hundreds of meters away, providing early warning for potential hazards.

* **Speed Measurement:** Accurately determines the velocity of other vehicles and obstacles using the Doppler effect.

* **Weather Resilience:** Maintains performance in conditions that blind other sensors.

Radar typically provides less detailed spatial information than LiDAR or cameras, often presenting objects as simpler "blobs" rather than intricate shapes. For deeper insights into its principles, the [Radar](https://en.wikipedia.org/wiki/Radar) article on Wikipedia provides a comprehensive overview.
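The Doppler relationship mentioned above is simple enough to show directly. This Python sketch assumes a monostatic radar (the same antenna transmits and receives) operating at 77 GHz, a band commonly used for automotive radar; the carrier value, the function names, and the sign convention (positive means the target is closing) are assumptions for illustration. Because the wave is shifted once on the way out and once on the way back, the measured shift is `f_d = 2 * v * f0 / c`, so `v = f_d * c / (2 * f0)`.

```python
SPEED_OF_LIGHT = 299_792_458.0  # metres per second

def radial_velocity(doppler_shift_hz: float, carrier_hz: float = 77e9) -> float:
    """Relative radial speed (m/s) from a measured Doppler shift.

    Assumes a monostatic radar: f_d = 2 * v * f0 / c, so v = f_d * c / (2 * f0).
    Positive results mean the target is closing (assumed sign convention).
    """
    return doppler_shift_hz * SPEED_OF_LIGHT / (2.0 * carrier_hz)

def doppler_shift(speed_mps: float, carrier_hz: float = 77e9) -> float:
    """Inverse of radial_velocity: the shift a target at this speed would produce."""
    return 2.0 * speed_mps * carrier_hz / SPEED_OF_LIGHT

# A car closing at 25 m/s (90 km/h) shifts a 77 GHz carrier by roughly 12.8 kHz.
shift = doppler_shift(25.0)
print(f"shift: {shift:.0f} Hz -> speed: {radial_velocity(shift):.1f} m/s")
```

The shift is tiny relative to the carrier frequency, yet it lets radar read relative speed directly from the returned signal rather than by comparing positions across successive frames.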

#### The Short-Range Scouts: Ultrasonic Sensors

For close-range detection, especially during parking or navigating tight spaces, **ultrasonic sensors** are deployed. These emit high-frequency sound waves and measure the time it takes for the echo to return, much like bats use echolocation. They are highly effective for detecting obstacles immediately around the vehicle and are commonly used in parking assist systems.
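Ultrasonic ranging uses the same echo-timing arithmetic as LiDAR, just with sound instead of light. Here is a minimal Python sketch, assuming the common linear approximation for the speed of sound in dry air (about 331.3 + 0.606 × T m/s, with T in °C); the function names are hypothetical. Temperature is included because sound travels noticeably slower in cold air, which would otherwise skew distance readings by a few percent.

```python
def speed_of_sound(temp_c: float) -> float:
    """Approximate speed of sound in dry air (m/s) using a common linear fit."""
    return 331.3 + 0.606 * temp_c

def echo_distance(echo_s: float, temp_c: float = 20.0) -> float:
    """Distance (m) to an obstacle from the round-trip time of an ultrasonic ping."""
    return speed_of_sound(temp_c) * echo_s / 2.0

# The same 6 ms echo on a mild day versus a freezing one.
for temp in (20.0, -10.0):
    print(f"{temp:>5.1f} °C: {echo_distance(0.006, temp):.3f} m")
```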

### Sensor Fusion: The Car's Brain at Work

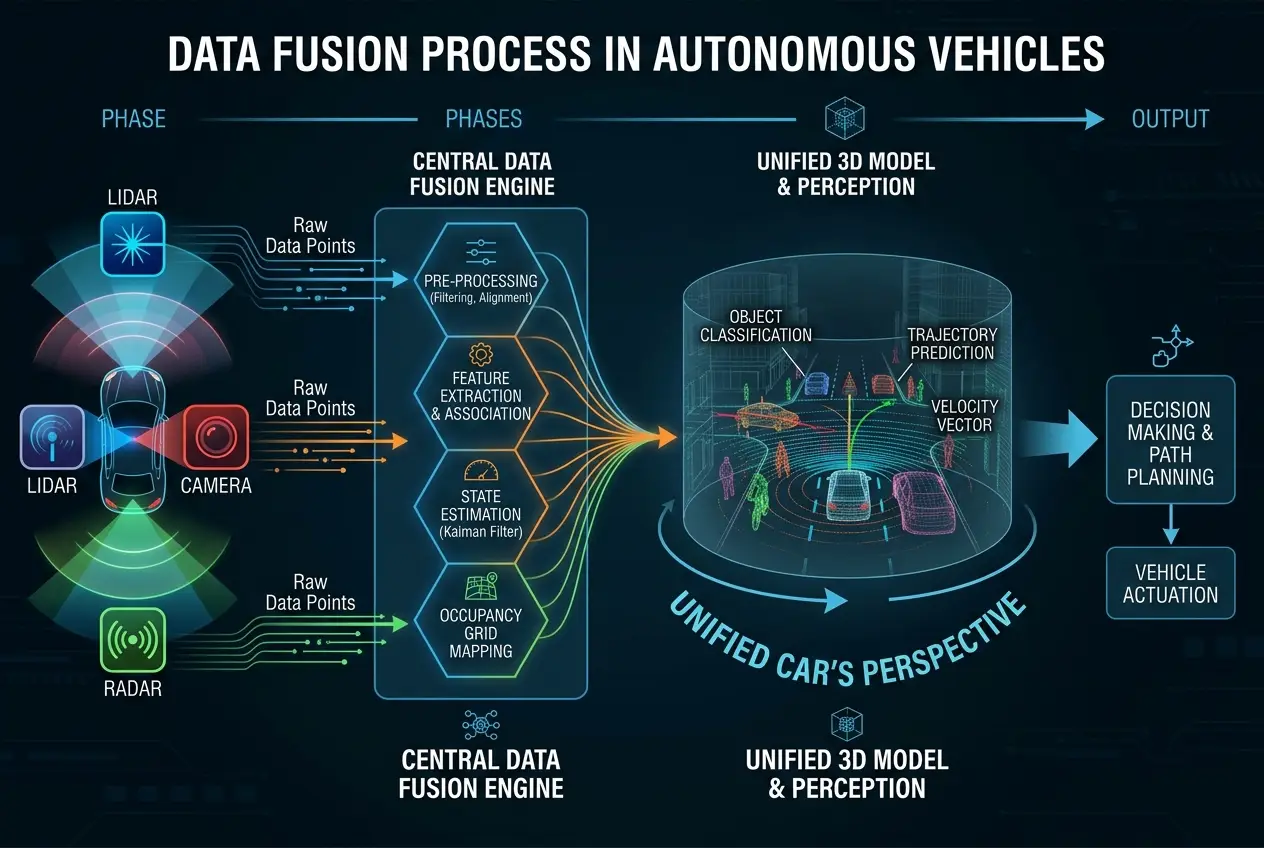

The real magic happens when all this raw data from diverse sensors is combined and processed, a technique known as **sensor fusion**. This is where the self-driving car’s "brain," powered by sophisticated AI and machine learning algorithms, comes into play.

Imagine a human trying to drive while simultaneously looking at separate feeds from a camera, a depth map, and a radar screen. It would be impossible. The car's computer, however, merges these different data streams into a single, coherent, and highly reliable representation of the environment.
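One of the simplest ways to merge several sensors' estimates of the same quantity is an inverse-variance weighted average, where each reading counts in proportion to how certain it is. The Python sketch below assumes the sensors' errors are independent and roughly Gaussian, and the per-sensor uncertainties are made-up illustrative numbers; the `RangeEstimate` and `fuse` names are hypothetical. Production systems typically use Kalman filters or learned models rather than this bare formula, but the intuition is the same.

```python
from __future__ import annotations
from dataclasses import dataclass

@dataclass
class RangeEstimate:
    sensor: str
    distance_m: float
    std_dev_m: float  # one-sigma uncertainty (illustrative values below)

def fuse(estimates: list[RangeEstimate]) -> tuple[float, float]:
    """Inverse-variance weighted mean of independent measurements.

    Returns (fused distance, fused standard deviation). More certain sensors
    get proportionally more weight, and the fused uncertainty is never larger
    than that of the best single input.
    """
    weights = [1.0 / e.std_dev_m ** 2 for e in estimates]
    total = sum(weights)
    fused = sum(w * e.distance_m for w, e in zip(weights, estimates)) / total
    return fused, (1.0 / total) ** 0.5

readings = [
    RangeEstimate("camera", 25.0, 2.0),  # camera depth is comparatively coarse
    RangeEstimate("lidar", 24.2, 0.1),
    RangeEstimate("radar", 24.6, 0.5),
]
distance, sigma = fuse(readings)
print(f"fused distance: {distance:.2f} m ± {sigma:.2f} m")
```

The result lands close to the LiDAR value because it is the most precise input, while the camera's coarse estimate barely moves it.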

#### How Sensor Fusion Works:

1. **Data Collection:** Each sensor continuously feeds data into the central processing unit.

2. **Data Alignment:** The system aligns the data from different sensors in space and time. A camera image needs to correspond to the LiDAR point cloud and radar detections for the same moment and location.

3. **Object Detection and Tracking:** Algorithms identify objects (other cars, pedestrians, traffic cones) and track their positions, velocities, and potential trajectories.

4. **Environmental Modeling:** A dynamic 3D model of the vehicle's surroundings is continuously built and updated. This model includes static elements (roads, buildings) and dynamic elements (moving vehicles, pedestrians).

5. **Redundancy and Validation:** If a camera briefly loses sight of a pedestrian due to glare, LiDAR or radar can confirm their presence and location. This cross-validation significantly increases the system's robustness and safety.
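Data alignment (step 2) is easy to gloss over, so here is a deliberately simplified Python sketch of its two halves. Time alignment linearly interpolates one sensor's readings to the timestamp of another sensor's frame; spatial alignment rotates and translates a point from a sensor's own coordinate frame into the vehicle's frame using that sensor's mounting position and angle. Everything here is 2D, the frame convention (x forward, y left) and mounting values are assumptions, and the function names are hypothetical; real systems use full 3D calibration and hardware time synchronization.

```python
from __future__ import annotations
import math
from bisect import bisect_left

def interpolate_at(timestamps: list[float], values: list[float], t: float) -> float:
    """Linearly interpolate a sensor's readings to time t.

    Assumes strictly increasing timestamps; times outside the recorded
    window are clamped to the nearest reading.
    """
    i = bisect_left(timestamps, t)
    if i == 0:
        return values[0]
    if i == len(timestamps):
        return values[-1]
    t0, t1 = timestamps[i - 1], timestamps[i]
    v0, v1 = values[i - 1], values[i]
    return v0 + (v1 - v0) * (t - t0) / (t1 - t0)

def sensor_to_vehicle(x: float, y: float, mount_x: float, mount_y: float,
                      mount_yaw_deg: float) -> tuple[float, float]:
    """Rotate a 2D point by the sensor's mounting yaw, then shift it by the mounting offset."""
    yaw = math.radians(mount_yaw_deg)
    return (mount_x + x * math.cos(yaw) - y * math.sin(yaw),
            mount_y + x * math.sin(yaw) + y * math.cos(yaw))

# Radar ranges sampled at 20 Hz, resampled to a camera frame captured at t = 0.07 s.
radar_t = [0.00, 0.05, 0.10]
radar_range = [30.0, 29.5, 29.0]
print(round(interpolate_at(radar_t, radar_range, 0.07), 3))

# A point 10 m ahead of a left-facing sensor mounted 1 m forward and 0.8 m left.
vx, vy = sensor_to_vehicle(10.0, 0.0, mount_x=1.0, mount_y=0.8, mount_yaw_deg=90.0)
print(round(vx, 3), round(vy, 3))
```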

This fused perception allows the car to not only "see" objects but to understand their context. For instance, it can differentiate between a parked car and a car moving in traffic, or a pedestrian standing on the sidewalk versus one stepping into the crosswalk. The continuous refinement of these perception systems is a major factor in improving autonomous driving capabilities, as explored in blogs about how AI can achieve more human-like understanding, such as [Can AI Decipher Gravitational Waves' Secret Language?](https://www.curiositydiaries.com/blogs/can-ai-decipher-gravitational-waves-secret-language-8845).
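Telling a parked car from a moving one largely comes down to estimating velocity from noisy, occasionally missing position measurements, and a classic tool for that is the Kalman filter. The Python sketch below is a minimal one-dimensional constant-velocity filter, not any particular vendor's tracker: the noise settings, the 10 Hz frame rate, the 0.5 m/s "moving" threshold, and the `track`/`is_moving` names are assumptions chosen for illustration. A `None` reading stands in for a frame where a sensor lost the object (say, to glare); the filter then coasts on its prediction until measurements return.

```python
from __future__ import annotations

def track(measurements: list[float | None], dt: float,
          meas_var: float = 0.25, accel_var: float = 1.0) -> tuple[float, float]:
    """1D constant-velocity Kalman filter; returns the final (position, velocity).

    State is [position, velocity] with symmetric covariance [[p00, p01], [p01, p11]].
    Assumes at least one non-None measurement.
    """
    readings = iter(measurements)
    pos = next(z for z in readings if z is not None)  # initialise on first valid reading
    vel = 0.0
    p00, p01, p11 = meas_var, 0.0, 100.0  # velocity starts very uncertain

    for z in readings:
        # Predict: constant-velocity motion plus white-noise acceleration.
        pos += vel * dt
        p00 += 2 * dt * p01 + dt * dt * p11 + accel_var * dt ** 4 / 4
        p01 += dt * p11 + accel_var * dt ** 3 / 2
        p11 += accel_var * dt ** 2
        if z is None:
            continue  # dropout: keep the prediction, skip the update
        # Update: weigh prediction against measurement with the Kalman gain.
        s = p00 + meas_var
        k0, k1 = p00 / s, p01 / s
        innovation = z - pos
        pos += k0 * innovation
        vel += k1 * innovation
        p00, p01, p11 = (1 - k0) * p00, (1 - k0) * p01, p11 - k1 * p01
    return pos, vel

def is_moving(velocity_mps: float, threshold_mps: float = 0.5) -> bool:
    return abs(velocity_mps) > threshold_mps

dt = 0.1  # 10 Hz frames
moving = [1.0 * k for k in range(40)]  # 10 m/s along the lane
moving[15:18] = [None, None, None]  # three frames lost to glare
parked = [5.0] * 40

for label, series in (("moving", moving), ("parked", parked)):
    _, v = track(series, dt)
    print(f"{label}: {v:.2f} m/s, moving={is_moving(v)}")
```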

### The Cognitive Leap: Prediction and Planning

Once the vehicle has a clear understanding of its environment, the next crucial step is **prediction and planning**. This is where the car truly starts to "think" and make decisions. It's not enough to simply see what's there; the car must anticipate what *will* happen.

* **Behavioral Prediction:** AI models analyze the movements of other road users to predict their likely future actions. Will that pedestrian cross the street? Is the car in the next lane about to change lanes?

* **Path Planning:** Based on predictions and the desired destination, the system calculates the optimal path, considering factors like speed limits, traffic conditions, road geometry, and safety regulations.

* **Decision Making:** This involves choosing actions: accelerating, braking, turning, changing lanes, or stopping. These decisions are made in real time and revisited many times per second.
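Prediction and decision making can be illustrated with a deliberately tiny Python example covering one situation: following a slower car in the same lane. It assumes constant-velocity prediction (each vehicle keeps its current speed), uses time-to-collision as the decision signal, and picks thresholds of 2 and 4 seconds purely for illustration; the function names and action labels are hypothetical. Real planners weigh many more agents, maneuvers, and comfort and legal constraints.

```python
import math

def predicted_gaps(gap_m: float, ego_speed: float, lead_speed: float,
                   horizon_s: float = 3.0, step_s: float = 0.5) -> list:
    """Constant-velocity prediction of the gap to a lead vehicle over a short horizon."""
    steps = int(round(horizon_s / step_s))
    return [gap_m + (lead_speed - ego_speed) * k * step_s for k in range(steps + 1)]

def time_to_collision(gap_m: float, ego_speed: float, lead_speed: float) -> float:
    """Seconds until the gap closes if neither vehicle changes speed."""
    closing = ego_speed - lead_speed
    return gap_m / closing if closing > 0 else math.inf

def choose_action(gap_m: float, ego_speed: float, lead_speed: float,
                  brake_ttc: float = 2.0, caution_ttc: float = 4.0) -> str:
    ttc = time_to_collision(gap_m, ego_speed, lead_speed)
    if ttc < brake_ttc:
        return "brake"
    if ttc < caution_ttc:
        return "ease_off"
    return "maintain"

# We travel at 20 m/s behind a car doing 10 m/s, 30 m ahead.
print(predicted_gaps(30.0, 20.0, 10.0))
print(choose_action(30.0, 20.0, 10.0))
```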

The advanced AI behind this decision-making process is constantly learning and improving through vast datasets of driving scenarios, simulations, and real-world experience. The development of sophisticated AI models that mimic complex thought processes is an exciting frontier, touched upon in discussions about whether advanced computing can create genuine intelligence, like in [Can Brain-Like Chips Create True AI?](https://www.curiositydiaries.com/blogs/can-brain-like-chips-create-true-ai-6876).
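For the path-planning step, a common textbook starting point is A* search over an occupancy grid, where each cell is either free or blocked. The Python sketch below assumes a small hypothetical grid, four-way moves of equal cost, and a Manhattan-distance heuristic. Production planners instead search continuous trajectories that respect vehicle dynamics, but many build on the same idea of expanding the most promising candidates first.

```python
from __future__ import annotations
import heapq

def astar(grid: list[list[int]], start: tuple[int, int],
          goal: tuple[int, int]) -> list[tuple[int, int]] | None:
    """Shortest 4-connected path on an occupancy grid (0 = free, 1 = blocked)."""
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell: tuple[int, int]) -> int:
        # Manhattan distance never overestimates with unit-cost 4-way moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    frontier = [(heuristic(start), 0, start)]  # (estimated total, cost so far, cell)
    came_from = {start: None}
    best_cost = {start: 0}
    while frontier:
        _, cost, cell = heapq.heappop(frontier)
        if cell == goal:
            path = []
            while cell is not None:  # walk back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if cost > best_cost[cell]:
            continue  # stale entry: a cheaper route to this cell was found later
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get(nxt, float("inf")):
                    best_cost[nxt] = new_cost
                    came_from[nxt] = cell
                    heapq.heappush(frontier, (new_cost + heuristic(nxt), new_cost, nxt))
    return None  # goal unreachable

# Hypothetical 3x4 grid: the middle row is blocked except for one gap at column 2.
grid = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
]
path = astar(grid, (0, 0), (2, 0))
print(path)
```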

### Maps and Localization: Knowing Where You Are

Another critical component of autonomous vision is **high-definition (HD) mapping** and precise **localization**. Self-driving cars don't just use standard GPS; they rely on incredibly detailed maps that include lane markings, traffic signs, road geometry, pedestrian crossings, and even the height of curbs.

These HD maps provide a prior understanding of the environment, giving the car a contextual framework. The car then uses its sensors to localize itself on this map with centimeter-level accuracy, constantly comparing its real-time sensor data with the map data. This helps the car:

* **Navigate Complex Intersections:** Understand the layout before it even "sees" it.

* **Plan Routes Precisely:** Optimize maneuvers based on detailed road information.

* **Enhance Sensor Data:** Use map data to fill in gaps or ambiguities in real-time sensor readings.
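Comparing live sensor data with the map can be framed as a small geometry problem. Suppose the car has already matched a few landmarks it currently sees (signposts, say, measured in its own frame) to the same landmarks in the HD map. The rotation and translation that best line up the two sets of points then give the car's pose on the map. The Python sketch below uses the standard closed-form least-squares solution for 2D rigid alignment; it assumes the matching is already done and the data are noise-free, and the landmark coordinates and `estimate_pose` name are invented for the example. Real localization stacks typically combine scan matching, GNSS, inertial sensors, and probabilistic filters.

```python
from __future__ import annotations
import math

def estimate_pose(observed: list[tuple[float, float]],
                  mapped: list[tuple[float, float]]) -> tuple[float, float, float]:
    """Least-squares 2D rigid alignment of matched landmarks.

    observed: landmark positions in the vehicle frame
    mapped:   the same landmarks' positions in the map frame
    Returns (x, y, heading_rad), the vehicle pose in the map frame.
    """
    n = len(observed)
    ox = sum(p[0] for p in observed) / n
    oy = sum(p[1] for p in observed) / n
    mx = sum(q[0] for q in mapped) / n
    my = sum(q[1] for q in mapped) / n
    dot = cross = 0.0
    for (px, py), (qx, qy) in zip(observed, mapped):
        px, py, qx, qy = px - ox, py - oy, qx - mx, qy - my  # centre both sets
        dot += px * qx + py * qy
        cross += px * qy - py * qx
    heading = math.atan2(cross, dot)  # rotation that best aligns the centred sets
    c, s = math.cos(heading), math.sin(heading)
    # Translation maps the rotated observed centroid onto the map centroid;
    # it is also where the vehicle origin lands in the map frame.
    return mx - (c * ox - s * oy), my - (s * ox + c * oy), heading

# Synthetic check: place the car at (100, 50) heading 30 degrees, then recover it.
true_x, true_y, true_h = 100.0, 50.0, math.radians(30)
observed = [(10.0, 2.0), (20.0, -3.0), (15.0, 5.0)]
mapped = [(true_x + math.cos(true_h) * px - math.sin(true_h) * py,
           true_y + math.sin(true_h) * px + math.cos(true_h) * py) for px, py in observed]
x, y, heading = estimate_pose(observed, mapped)
print(round(x, 3), round(y, 3), round(math.degrees(heading), 3))
```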

### The Human-Machine Divide: Are They Really "Seeing"?

So, do self-driving cars "see" like humans? The truth is, not really in the same intuitive, conscious way. Humans perceive the world through a complex interplay of sensory input, emotion, memory, and cognitive biases. We infer, anticipate, and react based on a lifetime of experience. A quick glance might tell us a child is about to chase a ball into the street because we recognize the context.

Autonomous vehicles, on the other hand, "see" through data. They process enormous volumes of sensor readings every second, building a probabilistic model of the world. Their "understanding" is computational, driven by algorithms and machine learning, not consciousness or intuition. They excel at precision, consistency, and tireless vigilance in a way humans cannot. However, they can struggle with truly novel situations, ambiguous social cues, or ethical dilemmas that humans navigate with relative ease.
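The idea of a probabilistic model can be made concrete with Bayes' rule. The Python sketch below asks a single question, "is there really an object here?", and updates a prior belief with each sensor's yes/no verdict using log-odds. The detection and false-alarm rates are invented for illustration, the `object_probability` name is hypothetical, and treating the sensors as conditionally independent is a simplifying assumption. A detection by the camera alone (for example, a reflection on a wet road) ends up with a low probability, while agreement across sensors pushes the belief close to certainty.

```python
from __future__ import annotations
import math

# Invented rates: (P(detect | object present), P(detect | nothing there))
SENSOR_RATES = {
    "camera": (0.95, 0.10),
    "lidar": (0.97, 0.02),
    "radar": (0.90, 0.05),
}

def object_probability(detections: dict[str, bool], prior: float = 0.5) -> float:
    """Posterior probability that an object is present, via log-odds Bayes updates."""
    log_odds = math.log(prior / (1.0 - prior))
    for sensor, detected in detections.items():
        p_hit, p_false = SENSOR_RATES[sensor]
        if detected:
            log_odds += math.log(p_hit / p_false)  # evidence for the object
        else:
            log_odds += math.log((1.0 - p_hit) / (1.0 - p_false))  # evidence against
    return 1.0 / (1.0 + math.exp(-log_odds))

all_agree = object_probability({"camera": True, "lidar": True, "radar": True})
camera_only = object_probability({"camera": True, "lidar": False, "radar": False})
print(f"all sensors: {all_agree:.4f}, camera only: {camera_only:.4f}")
```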

The ongoing research aims to bridge this gap, with AI developing capabilities for more nuanced interpretation of situations and even a form of "digital empathy," as explored in blogs like [Can AI Truly Feel? Decoding Digital Empathy](https://www.curiositydiaries.com/blogs/can-ai-truly-feel-decoding-digital-empathy-8008).

### Conclusion: The Future is in the Fusion

The journey towards truly autonomous vehicles is a testament to human ingenuity in fusing diverse technologies. By combining the strengths of cameras, LiDAR, radar, and ultrasonic sensors, and processing their data with advanced AI, self-driving cars create a perception of the world that is, in many ways, superior to human vision, especially in its consistency and 360-degree awareness.

While they may not "see" with consciousness or intuition like we do, their ability to meticulously map, understand, and predict the road environment is transforming transportation. The continuous evolution of these "digital eyes" and the "brains" that interpret their input holds the key to a safer, more efficient, and potentially revolutionary future of mobility. The next time you see a self-driving car navigate effortlessly, remember the silent symphony of sensors and algorithms working tirelessly beneath its surface, creating a reality only machines can truly perceive.

### Frequently Asked Questions

Self-driving cars are designed with redundancy. If one sensor (like a camera) fails, other sensors (like LiDAR or radar) can compensate, ensuring the system still has enough data to operate safely, often prompting the vehicle to either pull over or request human intervention.

Unfamiliar environments, like newly started construction zones, are challenging. Cars rely on real-time updates to their HD maps, data from other vehicles (fleet learning), and their robust sensor fusion to detect and navigate unexpected obstacles. In highly complex, unmapped scenarios, they might request human oversight or revert to a safe, cautious mode.

Yes, like any connected technology, self-driving cars are susceptible to cyberattacks. Manufacturers implement multi-layered cybersecurity measures to protect sensors and the central processing unit from hacking, spoofing, or jamming attempts that could compromise their perception or control systems.

Differentiating real objects from reflections or shadows is a complex task. Sensor fusion helps; a reflection might show up in a camera but not in LiDAR or radar. Advanced AI and machine learning algorithms are trained on vast datasets to recognize patterns and contextual cues, improving their ability to distinguish actual obstacles from visual illusions.

5G technology offers ultra-low latency and high bandwidth, which can significantly enhance a self-driving car's perception by enabling real-time communication with other vehicles (V2V), infrastructure (V2I), and cloud-based AI. This allows cars to share sensor data, map updates, and traffic information almost instantaneously, extending their 'vision' beyond their immediate physical sensors.

Verified Expert

Alex Rivers

A professional researcher since age twelve, I delve into mysteries and ignite curiosity by presenting an array of compelling possibilities. I will heighten your curiosity, but by the end, you will possess profound knowledge.
