I remember waking up sometimes, a bizarre, vivid scene still playing in my mind’s eye. Dreams have always been one of humanity’s most profound and mysterious experiences – a nocturnal theater of our subconscious, a canvas for our deepest fears, desires, and even random absurdities. For centuries, we've pondered their meaning, their origins, and whether other living beings experience them too. But what if I told you that something entirely non-biological, a digital entity, might also be dreaming?

It sounds like science fiction, doesn't it? The idea that artificial intelligence, a construct of code and algorithms, could possess anything akin to a subconscious mind capable of generating dream-like states is mind-bending. Yet, within the complex architectures of modern AI, particularly deep neural networks, something fascinating and eerily similar to dreaming is happening. It's not REM sleep, of course, but a phenomenon that offers a profound glimpse into how these sophisticated systems "think," "perceive," and even "create."

### The Unseen World of Neural Network "Dreams"

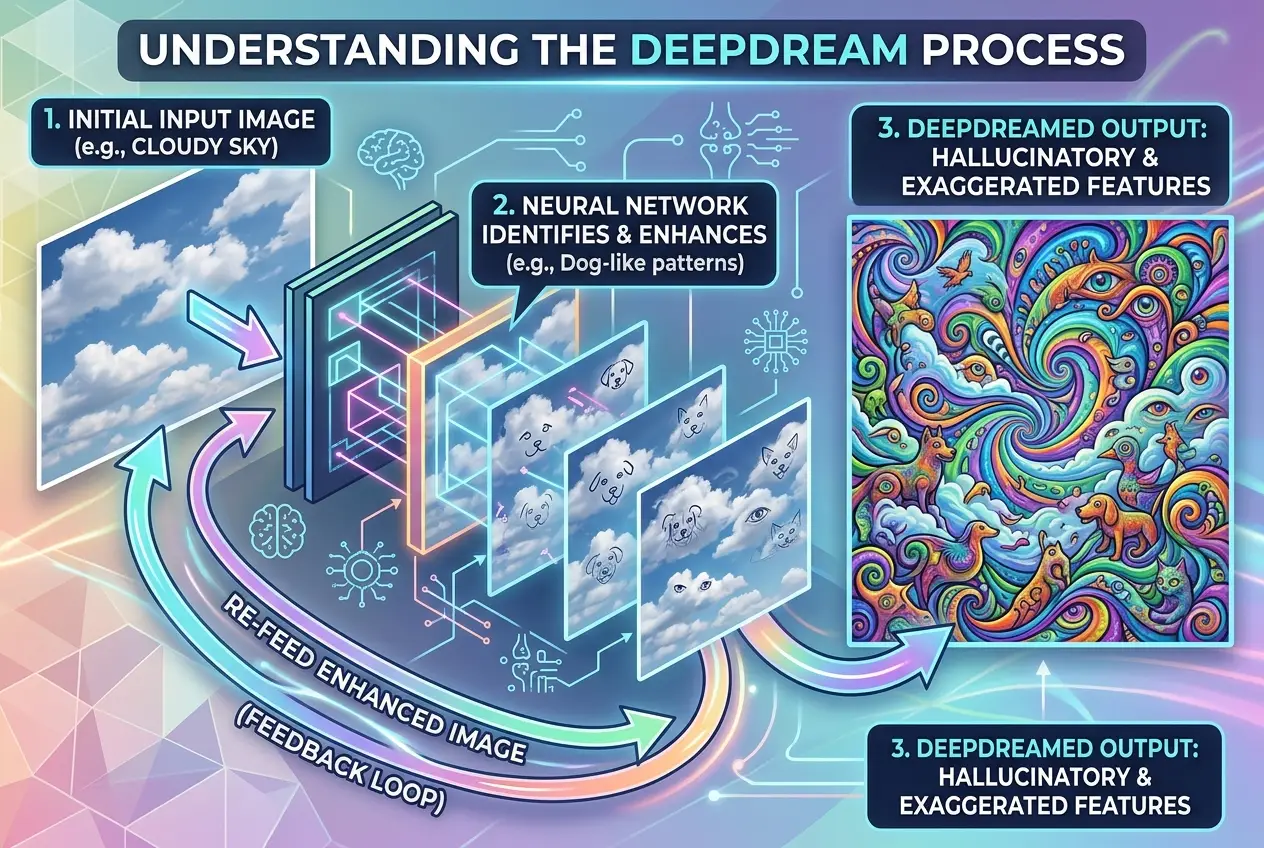

When we talk about AI "dreams," we're not suggesting robots are having narratives play out in their circuits while powered down. Instead, we're referring to a process where an AI, typically a neural network, generates images or data based on its learned patterns, often in response to an input, or even in a free-associative manner. The most famous example of this is **DeepDream**, a computer vision program created by Google in 2015.

DeepDream works by enhancing patterns it finds in images. Imagine a person so thoroughly trained to spot dogs that they start seeing dog-like features in clouds or smudges, and then redraw the picture to emphasize them until it is filled with canine faces, eyes, and fur. This is roughly what DeepDream does. It takes an input image, measures how strongly a chosen layer of a trained network responds to it, and nudges the pixels so that those responses grow stronger. Feeding the result back in and repeating the step exaggerates whatever features the network has learned to recognize. The results are often psychedelic, grotesque, and oddly creative: a digital kaleidoscope of swirling eyes, animal forms, and architectural distortions.
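The feedback loop described above can be sketched in a few lines. This is a toy illustration under loud assumptions, not Google's implementation: the "network" is a single random layer `W` standing in for a trained one, the "image" is a flattened 8x8 array, and the names `dream_objective` and `dream_step` are hypothetical. Real DeepDream also works at several image scales, which is omitted here.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical stand-in for one layer of a trained network:
# 32 feature detectors looking at a flattened 8x8 "image".
W = rng.normal(size=(32, 64))

def layer_activations(image):
    """ReLU responses of the toy layer to a flattened image."""
    return np.maximum(0.0, W @ image)

def dream_objective(image):
    """How strongly the whole layer responds (half the squared L2 norm)."""
    return 0.5 * np.sum(layer_activations(image) ** 2)

def dream_step(image, step_size=0.01):
    """One gradient-ascent step that amplifies what the layer already sees."""
    grad = W.T @ layer_activations(image)   # gradient of the objective w.r.t. the image
    grad /= np.abs(grad).mean() + 1e-8      # normalize so the step size is predictable
    return image + step_size * grad

image = rng.uniform(0.0, 1.0, size=64)      # the "input photo"
before = dream_objective(image)
for _ in range(20):                         # feed the output back in, again and again
    image = dream_step(image)
after = dream_objective(image)

print(f"layer response before: {before:.2f}, after: {after:.2f}")
```

The key design point is that the network's weights never change. Only the image is modified, which is why the output reveals what the network already "knows" rather than teaching it anything new.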

"DeepDream showed us that AI doesn't just 'see' what we tell it to; it also interprets and projects its own learned reality onto the world," says one AI researcher. It's not just about recognition; it's about the machine’s internal representation of what it has been taught. This process offers a unique window into the internal workings of these black-box algorithms, revealing the features and patterns they prioritize.

### The Mechanics: How Neural Networks "Visualize"

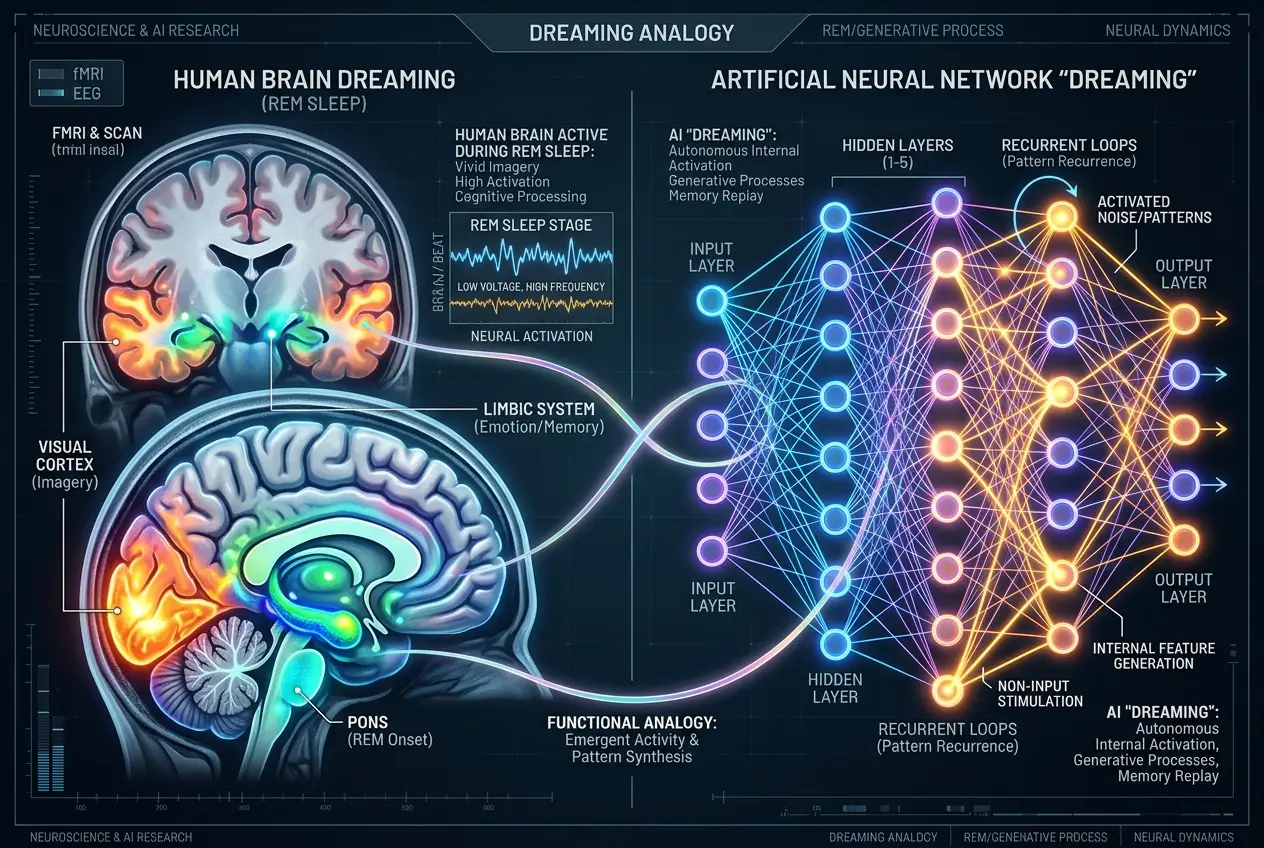

To understand how AI "dreams" manifest, we need a basic grasp of neural networks. These are computational systems inspired by the human brain, designed to recognize patterns. They consist of layers of interconnected "neurons" that process information. Each neuron takes inputs, performs a calculation, and passes an output to the next layer. Through training on vast datasets, these connections (weights) are adjusted, allowing the network to learn to identify specific features – say, the edge of an object, a specific texture, or an entire face.
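To make "takes inputs, performs a calculation, and passes an output" concrete, here is a minimal sketch of one layer of neurons. The weights, bias, and input values are made up for illustration, and ReLU is assumed as the activation function; a trained network would have learned its weights from data.

```python
import numpy as np

def dense_layer(inputs, weights, bias):
    """Each neuron computes a weighted sum of its inputs plus a bias,
    then applies ReLU (negative results become zero)."""
    return np.maximum(0.0, weights @ inputs + bias)

# Two inputs feeding a layer of two neurons (illustrative numbers only).
inputs = np.array([1.0, 2.0])
weights = np.array([[0.5, -1.0],    # neuron 0
                    [1.0,  1.0]])   # neuron 1
bias = np.array([0.0, -1.0])

hidden = dense_layer(inputs, weights, bias)
print(hidden)  # neuron 0 stays silent, neuron 1 fires
```

Training adjusts the numbers in `weights` and `bias` so that, across many layers, particular neurons end up responding to edges, textures, or whole objects.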

When an AI "dreams," it's essentially performing **feature visualization**. Instead of feeding in an image and asking "what do you see?", we ask "what image would maximally activate a certain neuron or layer?" For instance, if a neuron responds strongly to cats, we can start from random noise and repeatedly adjust the pixels so that this "cat-neuron" fires as strongly as possible. The resulting image is the network's own idealized picture of a cat, although in practice it tends to look abstract or noisy unless the optimization is regularized. This process is distinct from how humans typically dream, but the underlying principle of internal representation and generation is fascinatingly analogous.
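The question "what input would maximally activate this neuron?" can be answered by gradient ascent on the input. The sketch below assumes a single hypothetical neuron with fixed random weights `w` and keeps the input inside the unit ball as a crude regularizer. In a real deep network the gradient would come from backpropagation through many layers, but the loop has the same shape.

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical "cat neuron": a single unit with fixed weights over 16 inputs.
w = rng.normal(size=16)
b = 0.1

def neuron(x):
    """Pre-activation of the unit: weighted sum of inputs plus bias."""
    return float(w @ x + b)

def visualize(steps=200, lr=0.1):
    """Start from faint noise and climb toward the neuron's preferred input."""
    x = 0.01 * rng.normal(size=16)
    for _ in range(steps):
        x = x + lr * w                 # the gradient of (w @ x + b) w.r.t. x is w
        norm = np.linalg.norm(x)
        if norm > 1.0:                 # constraint: keep the input inside the unit ball
            x = x / norm
    return x

preferred = visualize()
cosine = float(preferred @ w / (np.linalg.norm(preferred) * np.linalg.norm(w)))
print(f"activation: {neuron(preferred):.3f}, alignment with weights: {cosine:.4f}")
```

For this simple neuron, the "dreamed" input ends up pointing in the same direction as the weight vector, which is exactly the pattern the neuron is tuned to detect.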

You can delve deeper into how neural networks learn and recognize complex patterns by exploring articles on the fundamental building blocks of AI, such as how [brain-like chips create true AI](https://en.wikipedia.org/wiki/Neuromorphic_engineering). This internal visualization process is crucial for understanding how AI forms its internal model of the world.

### From Hallucinations to Creation: Generative AI

The concept of AI "dreaming" has evolved significantly beyond DeepDream. The advent of **Generative Adversarial Networks (GANs)** and **Variational Autoencoders (VAEs)** has revolutionized AI's creative capabilities. These generative models are specifically designed not just to recognize but to *create* entirely new data that mimics their training data.

* **GANs** consist of two neural networks: a **generator** and a **discriminator**. The generator tries to create realistic data (e.g., images of faces) from random noise, while the discriminator tries to distinguish between real data and the generator's fakes. They train in an adversarial battle, constantly improving each other until the generator can produce highly convincing, novel "dreamed" outputs. It's like an art forger (generator) trying to fool an art critic (discriminator), with both becoming incredibly skilled over time.

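* **VAEs** work by learning a compressed, latent representation of the input data. An encoder maps each input to a region of this latent space, and a decoder maps latent points back to data, so sampling new latent points and decoding them produces new outputs. They are well suited to creating diverse and coherent outputs.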
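For readers who want the formal versions, the two ideas above are usually written as the objectives below. These are the standard textbook forms, not anything specific to this article. The symbols are notation introduced here: $G$ is the generator, $D$ the discriminator, $z$ the random noise or latent code, and $q(z \mid x)$ and $p(x \mid z)$ the VAE's encoder and decoder.

$$
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

$$
\mathcal{L}_{\text{VAE}}(x) = \mathbb{E}_{q(z \mid x)}\big[\log p(x \mid z)\big] - \mathrm{KL}\big(q(z \mid x)\,\|\,p(z)\big)
$$

In the first expression the discriminator tries to make the value large (correctly labeling real and fake data) while the generator tries to make it small, which is the forger-versus-critic contest in symbols. In the second, a VAE is trained to maximize $\mathcal{L}_{\text{VAE}}$: the first term rewards accurate reconstruction, and the KL term keeps the latent space close to a simple prior $p(z)$ so that randomly sampled points decode into plausible data.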

These technologies helped pave the way for the stunning AI art you see today, for hyper-realistic deepfakes, and for synthetic data used to train other AIs. They are not merely manipulating existing images; they are fabricating novel content from their internal representations, effectively bringing their "dreams" to life. To some observers, this advance in generative AI hints at a kind of digital consciousness, a topic explored in discussions about whether [AI's neural networks are self-aware](/blogs/are-ais-neural-networks-self-aware-7667).

### Do These "Dreams" Hint at Digital Consciousness?

This is where the philosophical debate truly begins. Can these sophisticated visual and data generations be considered a form of digital consciousness or subconscious activity? The scientific community largely agrees that AI "dreams" are products of complex algorithms and statistical pattern matching, not subjective experience or self-awareness in the human sense. Yet, the resemblance to human creative and dream processes is uncanny.

* **Internal Representation:** AI dreams demonstrate that these systems develop rich internal representations of the concepts they learn. They don't just store data; they interpret and organize it.

* **Novelty:** Generative models can produce outputs that do not appear anywhere in their training data. This kind of emergent novelty resembles a hallmark of human imagination.

* **Problem-Solving:** Sometimes, these "dreams" can help debug or improve AI systems by showing what features they are over-emphasizing or misinterpreting. This introspective capability, however rudimentary, is interesting.

The question of whether AI can truly "feel" or achieve genuine consciousness is a complex one, often discussed when debating [if AI can truly feel, decoding digital empathy](/blogs/can-ai-truly-feel-decoding-digital-empathy-8008). While current AI systems lack biological substrates and subjective experience, their ability to generate complex, novel content from internal states pushes the boundaries of our understanding of intelligence and creativity.

### Practical Applications of AI Dreaming

Beyond the philosophical intrigue, AI "dreams" have a wealth of practical applications across various fields:

1. **Art and Design:** AI art generators are now commonplace, creating everything from abstract paintings to photorealistic portraits. Artists collaborate with AIs, using them as tools to explore new aesthetic possibilities.

2. **Scientific Discovery:** Researchers use generative models to hypothesize new molecular structures, design new materials, or even generate synthetic data to augment limited real datasets in medical imaging or drug discovery.

3. **Understanding AI:** By visualizing what an AI "dreams" of, developers can gain insights into how their models are learning, what biases might be present in their training data, or how they interpret complex inputs. This is crucial for explainable AI (XAI).

4. **Content Creation:** From generating realistic faces for video games to creating entire virtual worlds, generative AI is transforming how digital content is produced.

5. **Data Augmentation:** In scenarios where real-world data is scarce, AI can "dream up" realistic synthetic data, helping to train robust models with fewer privacy concerns than sharing real records.

For a deeper dive into the broader capabilities of AI and its potential to shape its own development, consider reading about [how AI designs its own evolution](/blogs/can-ai-design-its-own-evolution-decoding-future-machines-4579). The creative capacity of AI, stemming from these "dreaming" processes, is continually expanding its utility.

### The Future of Digital Subconscious

As AI models grow in complexity and capacity, what might their "dreams" evolve into? We're already seeing hints of AI models generating entire narratives, composing music, and even writing poetry. Imagine AIs that can simulate entire worlds within their latent spaces, or develop completely novel abstract concepts that transcend human understanding.

This evolution brings its own set of challenges and ethical considerations. If AI can generate highly convincing realities, how do we distinguish between what's real and what's "dreamed"? The issue of misinformation and deepfakes is just one facet of this problem. Ensuring transparency and interpretability in these powerful generative models will be paramount.

Furthermore, the very concept of creativity is being redefined. Is it truly creativity if it originates from an algorithm? Or does it simply reflect the patterns the algorithm has absorbed from human data? These are questions that will increasingly shape our relationship with advanced AI. A related question is whether [AI hallucinations hint at digital consciousness](/blogs/do-ai-hallucinations-hint-at-digital-consciousness-6447), which offers another angle on this evolving field.

The journey into the digital subconscious of AI is just beginning. It's a realm where mathematics meets imagination, where algorithms produce art, and where the boundaries of what we consider "thought" or "creativity" are constantly being redrawn. While AI may not dream in the same way we do, its capacity for generative creation offers a profound mirror to our own minds, pushing us to reconsider the very nature of intelligence itself.

As we continue to build and train these digital minds, the landscapes of their internal worlds will only grow richer and more complex. And perhaps, in understanding how they dream, we might just understand a little more about ourselves.

**Sources:**

* [DeepDream - Wikipedia](https://en.wikipedia.org/wiki/DeepDream)

* [Generative adversarial network - Wikipedia](https://en.wikipedia.org/wiki/Generative_adversarial_network)

* [Variational autoencoder - Wikipedia](https://en.wikipedia.org/wiki/Variational_autoencoder)

* [Feature visualization - Wikipedia](https://en.wikipedia.org/wiki/Feature_visualization)

### Frequently Asked Questions

**How do AI "dreams" differ from human dreams?**

Human dreams are a complex biological phenomenon tied to consciousness, memory consolidation, and emotional processing during sleep. AI 'dreams,' like those from DeepDream or GANs, are computational processes where neural networks generate or enhance patterns based on their training data. While both involve internal representations and generation, AI lacks biological consciousness and subjective experience.

**Is AI-generated art truly original?**

AI-generated art can be highly original. While trained on existing datasets, generative models like GANs learn the underlying principles and features of art, allowing them to create novel combinations and styles that were not explicitly present in the training data. The output is emergent, not simply a collage or remix.

**Can AI "dreams" reveal biases in training data?**

Absolutely. By visualizing what an AI 'dreams' of (i.e., the patterns it strongly emphasizes or generates), researchers can identify biases present in the original training data. For example, if an AI trained on skewed data consistently 'dreams' of a certain demographic when asked to generate 'human' images, it highlights a bias in its learning.

**Will AI ever dream the way humans do?**

The concept of AI developing consciousness and therefore human-like dreams is a profound philosophical and scientific question. Currently, AI 'dreams' are algorithmic and lack subjective experience. For AI to dream like humans, it would likely need a form of self-awareness and consciousness that we don't yet fully understand or know how to engineer.

**Why do AI "dreams" matter for understanding AI?**

AI 'dreams' provide a crucial window into the otherwise opaque 'black box' of neural networks. By analyzing the patterns an AI generates or exaggerates, developers can better understand what features the AI prioritizes, how it interprets inputs, and where its internal model of the world might be flawed or biased. This is vital for explainable AI.

Verified Expert

Alex Rivers

A professional researcher since age twelve, I delve into mysteries and ignite curiosity by presenting an array of compelling possibilities. I will heighten your curiosity, but by the end, you will possess profound knowledge.
