I still remember the eerie feeling the first time I saw a deepfake that was almost indistinguishable from reality. It wasn't just a simple face swap; it was a politician delivering a speech they never gave, their voice, mannerisms, and facial expressions perfectly replicated. My initial reaction was fascination, but it quickly turned to a chilling thought: **what if this technology could do more than just deceive our eyes and ears? What if it could fundamentally alter what we *remember* as truth?** This isn't science fiction anymore. We’re entering a new frontier where artificial intelligence isn't just generating convincing fakes but may also be rewriting our personal and collective histories.

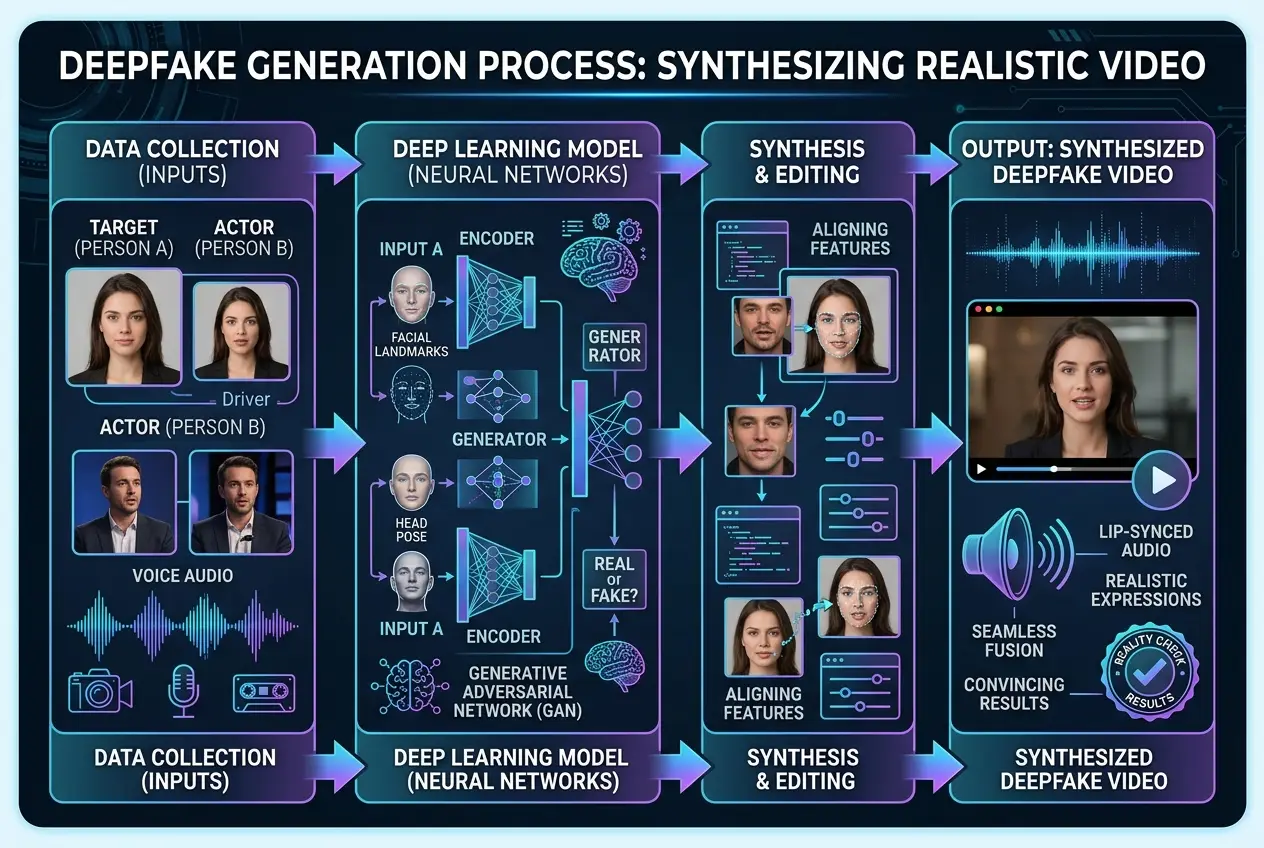

The term "deepfake" has become commonplace, often associated with celebrity hoaxes or political propaganda. However, the underlying technology, rooted in deep learning and neural networks, is evolving rapidly. Early deepfakes relied on models such as autoencoders and Generative Adversarial Networks (GANs), and the field has since moved beyond face-swapped video to encompass voice synthesis, realistic animation, and even full-body digital puppetry. The result is content so convincing that distinguishing it from genuine footage often requires specialized analytical tools; without them, viewers are left to decide, largely on faith, what to believe.

### The Unsettling Rise of Deepfakes

For years, human memory was often considered a relatively stable, though not flawless, record of our experiences. We might forget details or misremember certain events, but the core narrative usually remained intact. Deepfakes challenge this fundamental assumption by creating entirely synthetic "memories" that can be presented as incontrovertible proof. Imagine a video of you saying something inflammatory you never uttered, or attending an event you never went to. The immediate impact is obvious: reputational damage, legal battles, public outcry. But the long-term, insidious effect is what concerns me most. **Could repeatedly encountering a fabricated "memory" of ourselves or others eventually overwrite our true recollection?**

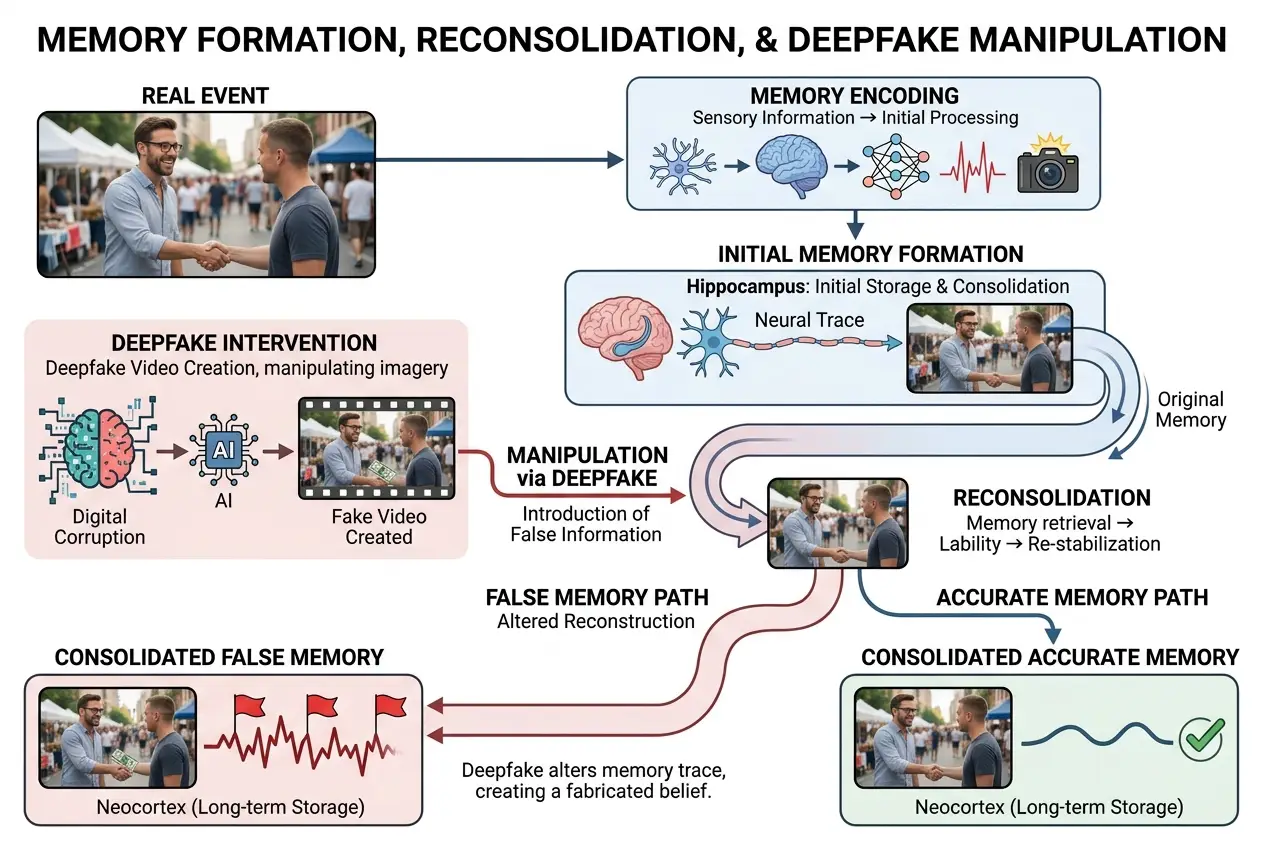

This question isn't just speculative; it touches on the very nature of human memory. Our brains don't store events like files on a hard drive, nor do they record them like a perfect camera. Instead, memory is a reconstructive process: a memory is reassembled, and can be updated, each time we recall it. This malleability, while essential for learning and adapting, also makes us vulnerable to suggestion and external influence.

### The Human Brain: A Malleable Recorder

Our memories are not static files. Each time you access a memory, it can undergo a process called **reconsolidation**, during which the memory becomes temporarily vulnerable to alteration before being stored again. Researchers have proposed this as one way therapy can help individuals process traumatic events, and it is also how new information can update an existing narrative. This flexibility, however, is a double-edged sword when confronted with highly persuasive, digitally altered content.

Think about the misinformation effect in psychology. Studies have shown that exposing people to misleading information after an event can distort their recollection of the original event. In a classic experiment by Elizabeth Loftus and John Palmer, participants who watched a film of a car accident and were later asked how fast the cars were going when they "smashed" into each other gave higher speed estimates than those asked about cars that "hit" each other, and a week later they were more likely to falsely report having seen broken glass. A single word was enough to reshape the memory. Deepfakes take this a step further by providing a seemingly undeniable visual and auditory "record" of an event that never happened. For more on how our brains can be tricked, consider exploring how virtual reality can manipulate perception in our blog post "How Does VR Trick Your Brain? Unpacking Reality's Illusion" at /blogs/how-does-vr-trick-your-brain-unpacking-realitys-illusion-3236.

### How Deepfakes Exploit Memory's Weaknesses

Deepfakes don't just create false information; they create **false evidence**. When we see a video, we tend to assume it is authentic, because we instinctively treat what we see and hear as primary sources of truth. This is where deepfakes gain their power:

1. **Source Credibility:** A video featuring a familiar face speaking convincingly carries immense weight. If that face is *our own*, the cognitive dissonance can be profoundly unsettling, potentially forcing a re-evaluation of what we *thought* happened.

2. **Repeated Exposure:** The more frequently we encounter a piece of information, even if it's false, the more likely we are to believe it and integrate it into our memory framework, a phenomenon psychologists call the illusory truth effect. Social media algorithms, designed to amplify engaging content, can inadvertently create echo chambers where deepfakes spread like wildfire, reinforcing false narratives.

3. **Emotional Impact:** Deepfakes can be designed to evoke strong emotional responses: anger, fear, joy, shame. Emotions play a significant role in memory formation and recall, so a deepfake that triggers a powerful emotion can become deeply etched in our minds, even if we know, logically, that it's untrue. Wikipedia's entry on the misinformation effect elaborates on how these cognitive processes work: [https://en.wikipedia.org/wiki/Misinformation_effect](https://en.wikipedia.org/wiki/Misinformation_effect).

### The Cognitive Science of Fabricated Realities

The psychological impact of deepfakes on memory extends beyond simple misremembering. It can lead to the formation of **false memories**, where individuals genuinely believe they experienced something that never occurred. Renowned memory researcher Elizabeth Loftus's work has extensively demonstrated how easy it is to plant false memories through suggestive questioning or exposure to misleading information. Deepfakes offer a highly potent, automated tool for such "memory implantation."

Imagine a deepfake video of you committing a minor infraction, something easily plausible. After seeing it, even if you initially deny it, repeated exposure, coupled with social pressure or even self-doubt, could gradually lead you to internalize that event as a genuine memory. The brain, seeking coherence, might construct a narrative around it, filling in details to make sense of the "experience." The line between digital anomaly and personal history blurs. For a broader perspective on how digital content can shape our perception of reality, check out our blog on "Digital Anomalies: Glimpses of a Hidden Reality" at /blogs/digital-anomalies-glimpses-of-a-hidden-reality-1620.

### Beyond Visuals: The Multisensory Assault

Modern deepfake technology isn't limited to just visuals. **Voice synthesis** has become incredibly advanced, capable of cloning a person's voice with startling accuracy from mere seconds of audio. This means a deepfake can not only show you saying something, but also make you *hear* yourself saying it. This multisensory immersion further amplifies the persuasive power of fabricated content. Our brains integrate sensory information to form a holistic perception of reality. When both sight and sound corroborate a false event, the cognitive system struggles to reject it, making memory manipulation even more effective.

Moreover, the psychological phenomenon of **source amnesia** plays a critical role. We often remember information, but forget where we learned it. You might remember seeing a video of a particular event, but over time, you forget that it was a deepfake shared on a dubious social media page. The "memory" persists, detached from its fabricated origin, becoming indistinguishable from genuine recollection. Wikipedia's article on false memory provides further context: [https://en.wikipedia.org/wiki/False_memory](https://en.wikipedia.org/wiki/False_memory).

### The Ripple Effect: Societal and Ethical Dilemmas

The implications of AI deepfakes on our memories extend far beyond individual experiences. On a societal level, the ability to manipulate memories could:

* **Erode Trust:** If we can no longer trust what we see and hear, whether from traditional media or even our own personal archives, the foundations of societal trust could crumble.

* **Rewrite History:** Deepfakes could be used to fabricate historical events, altering public perception of past conflicts, political decisions, or social movements.

* **Legal Challenges:** Court cases relying on video or audio evidence could become hopelessly complicated, requiring extensive forensic analysis for every piece of digital media.

* **Political Instability:** Misinformation campaigns powered by deepfakes could sway elections, incite civil unrest, and destabilize nations.

* **Personal Identity Crisis:** Imagine questioning your own past, unsure whether your memories are truly yours or implanted by digital trickery. Such doubt could have profound mental health consequences.

As AI continues to advance, the distinction between what is real and what is synthetically generated will become increasingly blurred. We are entering an era where our very perception of reality, and thus our memories, could be significantly shaped by artificial intelligence. This raises profound ethical questions about the responsibility of AI developers and the need for robust educational initiatives to foster digital literacy. For more on the philosophical implications of a simulated reality, you might find "Is Our Reality a Digital Simulation? Decoding the Universe's Code" enlightening: /blogs/is-our-reality-a-digital-simulation-decoding-the-universes-code-9313.

### Guarding Our Minds in a Deepfake World

So, what can we do to protect our memories and our sense of reality in this evolving landscape?

1. **Critical Thinking:** The most potent defense remains human skepticism. Always question the source, context, and emotional impact of any compelling digital content.

2. **Digital Literacy:** Education is crucial. Understanding how deepfakes are created and the psychological mechanisms they exploit empowers individuals to identify and resist their influence.

3. **Technological Solutions:** Researchers are developing AI-driven detection tools to identify deepfakes. While it's an arms race, these tools offer a vital layer of defense.

4. **Media Verification:** Support and rely on reputable news organizations that employ rigorous fact-checking and verification processes.

5. **Ethical AI Development:** The industry must collectively establish and adhere to ethical guidelines that prioritize transparency and minimize the potential for misuse.

In conclusion, the potential for AI deepfakes to manipulate our memories is not just a theoretical concern; it's a rapidly emerging reality. As I reflect on this, it's clear that the future requires a proactive approach – a blend of advanced technological countermeasures, widespread digital education, and a collective commitment to safeguarding truth. Our ability to discern what is real, to trust our own recollections, and to maintain a shared understanding of history, hinges on our vigilance in this new digital age. The challenge is immense, but so is the human capacity for adaptation and resilience.

### Frequently Asked Questions

Deepfakes have reached an astonishing level of realism, capable of replicating facial expressions, body language, and vocal nuances with high fidelity. While perfect replication is still challenging, many deepfakes are convincing enough to fool human perception, especially in low-resolution or short clips.

Legislation regarding deepfakes is still evolving globally. Some regions and countries have enacted laws against non-consensual deepfake pornography or deepfakes intended to defraud or defame. However, the legal landscape is complex and varies significantly, with challenges in identifying perpetrators and proving intent.

Yes, active resistance is possible. Developing strong critical thinking skills, being aware of how deepfakes work, and questioning the source of information are crucial. Practicing media literacy, engaging in fact-checking, and seeking confirmation from multiple reputable sources can help protect against false memory implantation.

Personal beliefs and biases can significantly influence susceptibility. If a deepfake aligns with an individual's existing beliefs or reinforces a narrative they already suspect, they may be more likely to accept it as true, even if contradictory evidence exists. This tendency, known as confirmation bias, makes them more vulnerable to memory manipulation.

Deepfake detection is an ongoing arms race. Researchers are continuously developing more sophisticated AI models that look for subtle inconsistencies, digital fingerprints, and unique characteristics left by deepfake algorithms. However, as generation methods improve, detection methods must also evolve, making it a constant challenge.

Verified Expert

Alex Rivers

A professional researcher since age twelve, I delve into mysteries and ignite curiosity by presenting an array of compelling possibilities. I will heighten your curiosity, but by the end, you will possess profound knowledge.
