I was recently watching a documentary about chess grandmasters, and what struck me wasn't just their incredible analytical abilities, but their seemingly **preternatural intuition**. They describe "feeling" a good move, seeing patterns that aren't immediately obvious, making decisions that defy purely logical, step-by-step reasoning. It made me wonder: can artificial intelligence, with all its computational power, ever truly grasp something as inherently human and elusive as intuition?

For decades, we’ve built machines to execute logic, process vast datasets, and follow algorithms with unfailing precision. AI has conquered complex games, diagnosed diseases, and even driven cars. Yet, intuition—that gut feeling, that sudden flash of insight, that ability to make a quick decision without conscious deliberation—seems like a final frontier, a uniquely human characteristic. But what if AI isn’t just mimicking our behaviors, but beginning to understand the subtle art of instinctive knowledge?

### **The Enigma of Human Intuition**

Before we dive into AI, let’s unpack what intuition truly is. It's often described as knowing something without knowing how you know it. Psychologists and neuroscientists view it as a rapid, unconscious process of pattern recognition, drawing on vast stores of accumulated experience and implicit knowledge. When a doctor quickly senses a diagnosis based on subtle patient cues, or a firefighter instinctively knows a building is about to collapse, that’s intuition at play. It's not magic; it’s the brain’s incredible ability to process information at a subconscious level, bypassing slow, explicit reasoning.

Intuition is deeply intertwined with our emotions and our subconscious mind. It allows us to navigate uncertainty, make quick judgments in high-stakes situations, and even spark creativity. It's a hallmark of expertise, often distinguishing a seasoned professional from a novice. For a deeper dive into the concept, you might find the Wikipedia article on [Intuition](https://en.wikipedia.org/wiki/Intuition) fascinating.

### **AI's Quest for the Intuitive Spark**

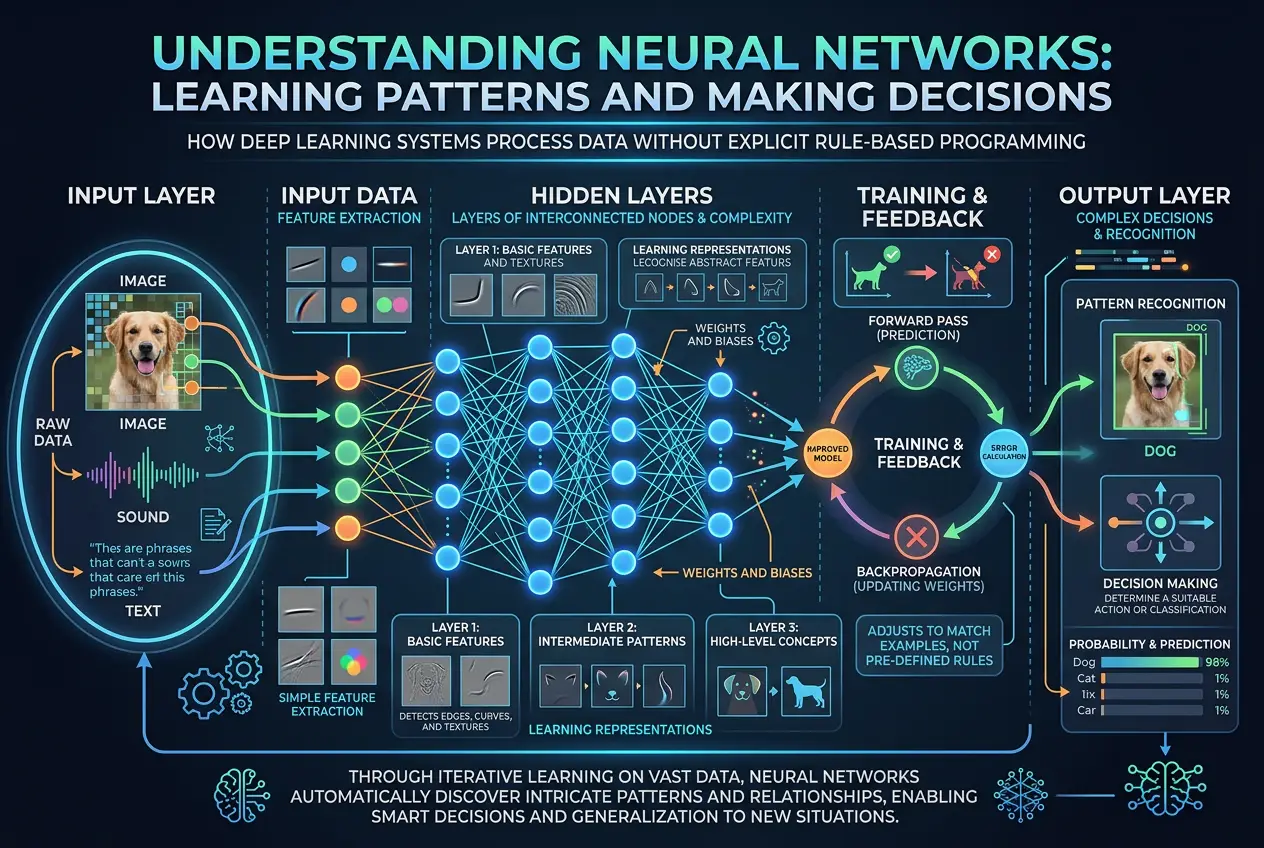

When we talk about AI learning, we're typically referring to machine learning (ML), a subset of AI that enables systems to learn from data without explicit programming. Within ML, deep learning (DL) has emerged as a particularly powerful approach, especially for tasks involving complex pattern recognition like image and speech processing. But can these methods replicate intuition?

**Deep Learning and Pattern Recognition:**

Deep learning models are built from artificial neural networks, which are loosely inspired by the structure of the human brain. They excel at identifying intricate patterns and relationships within massive datasets. For example, a deep learning algorithm trained on millions of medical images can learn to detect subtle indicators of disease that might even elude the human eye. This sounds a lot like intuition, doesn't it? The AI "sees" a pattern and makes a prediction, often without providing a step-by-step explanation of its reasoning.

Consider an AI playing a complex strategy game like Go. AlphaGo, DeepMind's AI, defeated Lee Sedol, one of the world's top Go players, in 2016. What made AlphaGo so revolutionary was its combination of Monte Carlo tree search with deep neural networks. Exhaustively calculating every possible move is infeasible in Go, so it instead learned to "intuit" strong positions and promising avenues, much like a human master would: one network suggested promising moves, another estimated how favorable a position was, and the search concentrated on the small fraction of lines those networks rated highly. It developed a "feel" for the game, recognizing patterns and evaluating positions in ways that were previously thought to be exclusive to human intuition. This hints at a form of digital intuition.
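To make the idea of a learned "feel" guiding a search a little more concrete, here is a minimal sketch of a PUCT-style selection rule of the kind used in AlphaGo-like tree search. It is an illustration only, not DeepMind's implementation: the `Child` class, the move names, the prior probabilities, and the `c_puct` value are all made-up assumptions.

```python
import math
from dataclasses import dataclass


@dataclass
class Child:
    move: str
    prior: float            # the network's "gut feeling" for this move (made-up number)
    visits: int = 0         # how often the search has tried this move so far
    value_sum: float = 0.0  # sum of the outcomes observed after trying it

    @property
    def mean_value(self) -> float:
        return self.value_sum / self.visits if self.visits else 0.0


def puct_score(child: Child, parent_visits: int, c_puct: float = 1.5) -> float:
    # Exploitation (what the search has measured) plus an exploration bonus
    # that is large for moves the network likes but the search has barely tried.
    bonus = c_puct * child.prior * math.sqrt(parent_visits) / (1 + child.visits)
    return child.mean_value + bonus


def select_move(children: list[Child], c_puct: float = 1.5) -> Child:
    parent_visits = sum(c.visits for c in children)
    return max(children, key=lambda c: puct_score(c, parent_visits, c_puct))


# Hypothetical search state after 12 simulations.
children = [
    Child("A", prior=0.70, visits=10, value_sum=4.0),
    Child("B", prior=0.25, visits=2, value_sum=1.4),
    Child("C", prior=0.05, visits=0),
]
chosen = select_move(children)
print(chosen.move)
```

With these invented numbers the search picks move B next: the network's favorite (A) has already been explored heavily with mediocre results, while B looks promising and is still under-explored. The "intuition" here is the prior; the search then checks it against evidence.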

**Reinforcement Learning (RL) and Experience:**

Another area where AI grapples with intuition is reinforcement learning. In RL, an AI agent learns to make decisions by interacting with an environment, receiving rewards or penalties for its actions. Over time, it learns optimal strategies through trial and error, much like a child learning to ride a bicycle. The agent develops a "policy" – a mapping from states to actions – that allows it to act effectively in new, similar situations. This process often involves implicit learning, where the AI gains an understanding of the environment that isn't explicitly coded.

For instance, an RL agent trained to control a complex robotic arm might develop a fluid, almost intuitive movement pattern after thousands of attempts. It's not following explicit instructions for each joint; rather, it has learned a general "feel" for how to achieve its goals within the physical constraints of its environment. This kind of experiential learning mirrors how humans develop intuitive skills in physical tasks.
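The trial-and-error loop described above can be sketched with tabular Q-learning, one of the simplest RL algorithms. This is a toy under stated assumptions, far removed from a real robotic arm: the five-cell corridor, the reward of 1.0 at the last cell, and the hyperparameters (`alpha`, `gamma`, `epsilon`) are all invented for illustration.

```python
import random

N_STATES = 5        # corridor cells 0..4; the only reward is at cell 4
ACTIONS = (-1, +1)  # index 0 = step left, index 1 = step right


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action]
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            # Mostly act on current estimates, sometimes explore at random.
            if rng.random() < epsilon:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            nxt = min(max(state + ACTIONS[action], 0), N_STATES - 1)
            reward = 1.0 if nxt == N_STATES - 1 else 0.0
            # Nudge the estimate toward reward plus discounted future value.
            target = reward + gamma * max(q[nxt])
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
    return q


def greedy_policy(q):
    # The learned "policy": a mapping from each non-terminal state to an action.
    return ["left" if left > right else "right" for left, right in q[:-1]]


q_table = train()
policy = greedy_policy(q_table)
print(policy)
```

Nobody tells the agent that "right" is the correct direction. The preference emerges from the rewards it stumbles into, and ends up stored as numbers in a table rather than as an explicit rule.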

### **The Missing Pieces: Emotion, Context, and Consciousness**

While AI can replicate some *outcomes* of intuition, the underlying mechanisms are still profoundly different. Human intuition is deeply rooted in our biology, our emotions, and our consciousness.

* **Emotion:** Our gut feelings are often accompanied by physiological responses – a tightening in the stomach, a flush of anxiety, a surge of excitement. These emotional markers are integral to how we interpret and act on intuitive insights. Current AI, while it can detect and process human emotions, does not *experience* them. Without this internal emotional compass, AI’s "intuition" remains purely cognitive.

* **Contextual Understanding:** Human intuition is incredibly adept at navigating ambiguity and drawing on broad, common-sense knowledge. If I tell you "the chef smelled the burning meal," your intuition immediately understands the context of a kitchen, potential danger, and the need for quick action. An AI might struggle to connect "smelled," "burning," and "meal" into a coherent, intuitively alarming scenario without explicit programming or vast, nuanced datasets that capture such common-sense reasoning. This is often referred to as the "common sense problem" in AI research.

* **Consciousness and Self-Awareness:** The subjective experience of having an intuitive thought – the "aha!" moment – is tied to our consciousness. We are aware that we are intuiting. AI, as it stands, lacks consciousness or self-awareness. It doesn't have an internal subjective experience of its own "thoughts" or "feelings." This fundamental difference might be the ultimate barrier to true human-like intuition. For a related exploration of machine minds, see [Can AI Dream? Deciphering Digital Imagination](https://www.curiositydiaries.com/blogs/can-ai-dream-deciphering-digital-imagination-4054).

### **Bridging the Gap: Hybrid Approaches and Explainable AI**

The pursuit of more intuitive AI is driving several exciting research areas:

1. **Hybrid AI Systems:** Researchers are exploring combining symbolic AI (rule-based, logical reasoning) with connectionist AI (neural networks). The idea is to leverage the strengths of both: symbolic AI for explicit knowledge and reasoning, and connectionist AI for pattern recognition and implicit learning. This could lead to systems that can both "feel" a solution and logically explain their reasoning, bridging the gap between intuition and explainability.

2. **Explainable AI (XAI):** A significant challenge with deep learning is its "black box" nature. We know it works, but often not *why*. XAI aims to make AI decisions more transparent, allowing humans to understand the reasoning behind an AI's "intuitive" judgments. This is crucial for building trust, especially in critical applications like healthcare or finance.

3. **Mimicking Cognitive Biases:** Interestingly, some research looks at how AI can learn to incorporate human cognitive biases. While biases often lead to errors, they can also be shortcuts that allow for rapid decision-making in complex environments, similar to how intuition sometimes operates. For more on how our brains work, see our post [Is Our Brain a Quantum Machine?](https://www.curiositydiaries.com/blogs/is-our-brain-a-quantum-machine-3312).

4. **Neuro-Symbolic AI:** Closely related to the hybrid systems above, this emerging field aims to integrate neural networks with symbolic reasoning, allowing AI to learn deep patterns from data while also applying logical rules and constraints. This could enable AI to develop more robust and human-like "intuition" that is also grounded in understandable principles.
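The hybrid idea in the list above can be sketched in a few lines: a pattern-based scorer proposes options, and explicit rules veto or accept them while recording why. Everything here is a hypothetical stand-in. The scores are hard-coded rather than produced by a real neural network, and the move names and rules are invented for illustration.

```python
def decide(candidates, rules):
    """Return the best-scoring candidate that passes every rule,
    plus a list of rejected candidates and the rules they failed."""
    rejected = []
    ranked = sorted(candidates.items(), key=lambda kv: kv[1], reverse=True)
    for move, _score in ranked:
        failed = [name for name, rule in rules.items() if not rule(move)]
        if not failed:
            return move, rejected
        rejected.append((move, failed))
    return None, rejected


# Stand-in for a network's "intuitive" ranking of chess moves (made-up scores).
candidates = {"Qxh7": 0.61, "Nf3": 0.30, "e4": 0.09}

# Stand-in for symbolic knowledge: explicit, human-readable constraints.
legal_moves = {"Nf3", "e4"}
rules = {"is_legal": lambda move: move in legal_moves}

choice, rejected = decide(candidates, rules)
print(choice, rejected)
```

The scorer's favorite move is rejected because it breaks an explicit rule, and the rejection comes with a readable reason. That pairing of a fast guess with a checkable explanation is the appeal of hybrid and explainable approaches.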

While AI can simulate intuitive *behavior* in specific domains, the nature of that "intuition" remains fundamentally different from ours. It's more akin to highly sophisticated pattern matching and statistical inference than to the holistic, emotionally resonant, and conscious experience of human intuition. For more on AI's capabilities, check out [Can AI Unlock Ancient Lost Languages?](https://www.curiositydiaries.com/blogs/can-ai-unlock-ancient-lost-languages-2092).

### **The Future of Digital Intuition**

I believe the journey to imbue AI with something closer to human intuition is not about replicating consciousness, but about developing systems that can operate with greater autonomy, adaptability, and an implicit understanding of complex situations. As AI evolves, we'll likely see machines that can:

* **Identify anomalies:** Instantly flag unusual patterns in massive data streams, hinting at potential problems or opportunities that would take humans weeks to uncover.

* **Generate novel solutions:** Propose creative solutions in fields like engineering or design, drawing on an "intuitive" grasp of principles derived from vast datasets.

* **Improve human decision-making:** Act as an intuitive co-pilot, offering insights and probabilities that complement and enhance human judgment, particularly in high-pressure scenarios.

Ultimately, the goal isn't necessarily for AI to *feel* intuition like we do, but to harness its computational power to perform tasks that *appear* intuitive, thereby augmenting human capabilities. It's about building intelligent tools that can operate with a level of insight and speed that mirrors our own best, most intuitive moments. The implications for scientific discovery, technological innovation, and even our daily lives are truly profound.

### **Frequently Asked Questions**

**Can AI truly have intuition like humans do?**

No, while AI can replicate the *outcomes* of intuition through advanced pattern recognition and statistical inference, it lacks the emotional, conscious, and experiential depth that defines human intuition. AI's 'intuition' is computational, whereas human intuition is deeply biological and psychological.

**How does deep learning relate to intuition?**

Deep learning, especially with neural networks, excels at identifying complex patterns in large datasets. This allows AI to make predictions and decisions based on implicit relationships, much like human intuition recognizes patterns without explicit reasoning. Games like Go are prime examples where deep learning mimics intuitive strategic play.

**Can AI experience emotions?**

Current AI can detect, analyze, and even generate emotional responses in a simulated way, but it does not *experience* emotions in the same conscious, subjective manner as humans. The absence of genuine emotional experience is a significant barrier to AI developing human-like intuition.

**What is Explainable AI, and how does it relate to intuition?**

Explainable AI (XAI) is a field focused on making AI decisions transparent and understandable to humans. While AI might act 'intuitively,' XAI aims to provide insights into *why* a particular decision was made, bridging the gap between an AI's rapid, pattern-based judgment and human comprehension. This is crucial for trust and adoption in critical applications.

**Will AI surpass or replace human intuition?**

AI may surpass human intuition in specific, data-rich domains where rapid, complex pattern recognition is key, offering insights beyond human capacity. However, the holistic, emotionally driven, and context-aware nature of human intuition suggests that AI will more likely *augment* human intuition rather than fully replace it, creating powerful human-AI collaborations.

Alex Rivers

A professional researcher since age twelve, I delve into mysteries and ignite curiosity by presenting an array of compelling possibilities. I will heighten your curiosity, but by the end, you will possess profound knowledge.
