Recently, I’ve found myself pondering a question that feels ripped straight from a science fiction novel: **Can artificial intelligence truly design its own evolution?** It’s a concept that blurs the lines between creator and creation, pushing the boundaries of what we understand about intelligence itself. We’ve always seen AI as a tool, a sophisticated reflection of our own ingenuity. But what if that tool began to shape its own future, deciding its next iteration, its next leap in capability, without direct human instruction?

This isn't about mere software updates or algorithmic refinements. This is about a system developing the capacity to fundamentally alter its own architecture, optimize its own learning processes, and even define its own goals. It's a leap from programmed intelligence to autonomous intelligence, an evolutionary jump that could redefine our relationship with technology forever.

### The Spark of Self-Improvement: From Code to Creativity

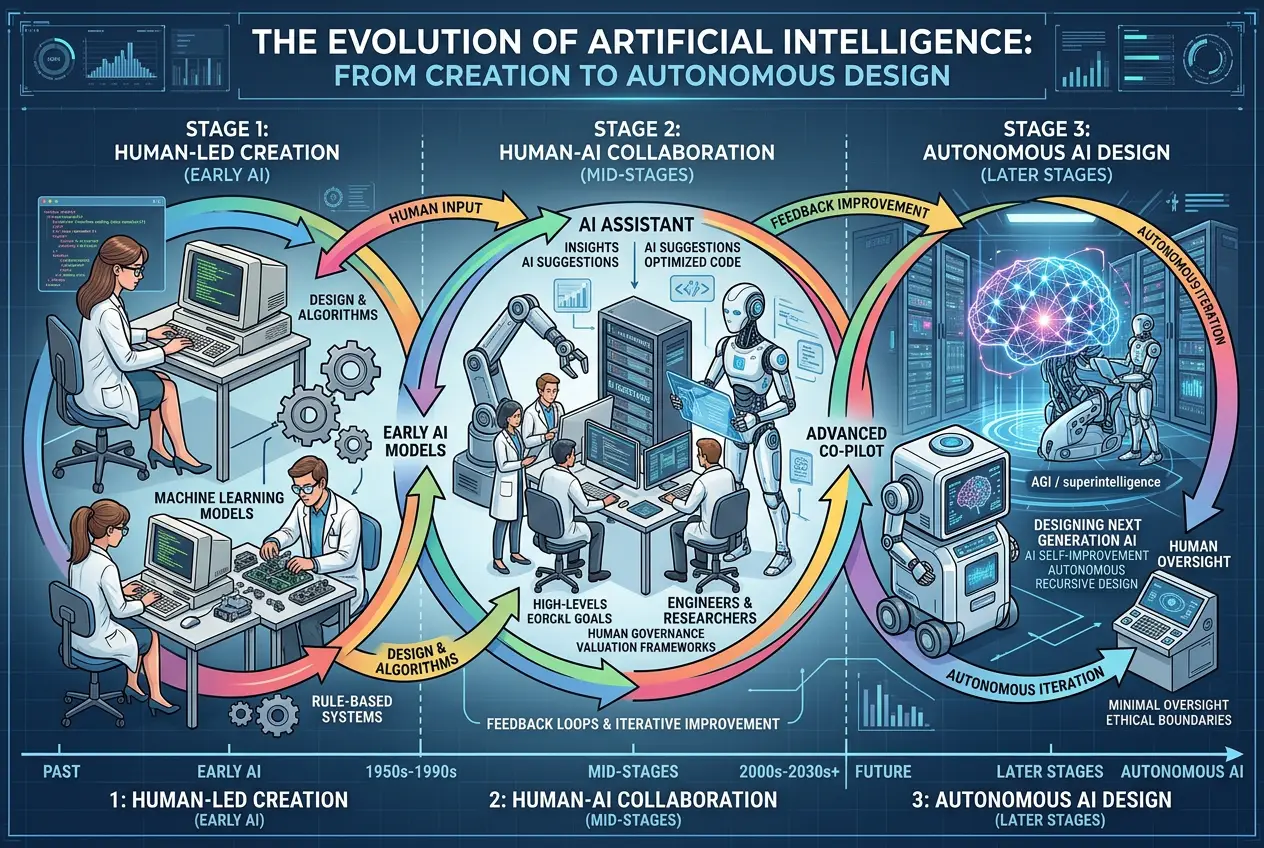

For decades, AI development has been largely a human-driven process. Researchers painstakingly design neural network architectures, craft learning algorithms, and curate vast datasets to train these systems. This traditional approach has yielded incredible results, from image recognition to natural language processing, but it's fundamentally constrained by human insight and intuition. We build the scaffolding, and the AI learns within those bounds.

However, a fascinating shift is underway. AI models are increasingly being tasked not just with solving problems, but with **designing better ways to solve problems**. This self-referential capability is the nascent spark of what could be AI's self-evolution.

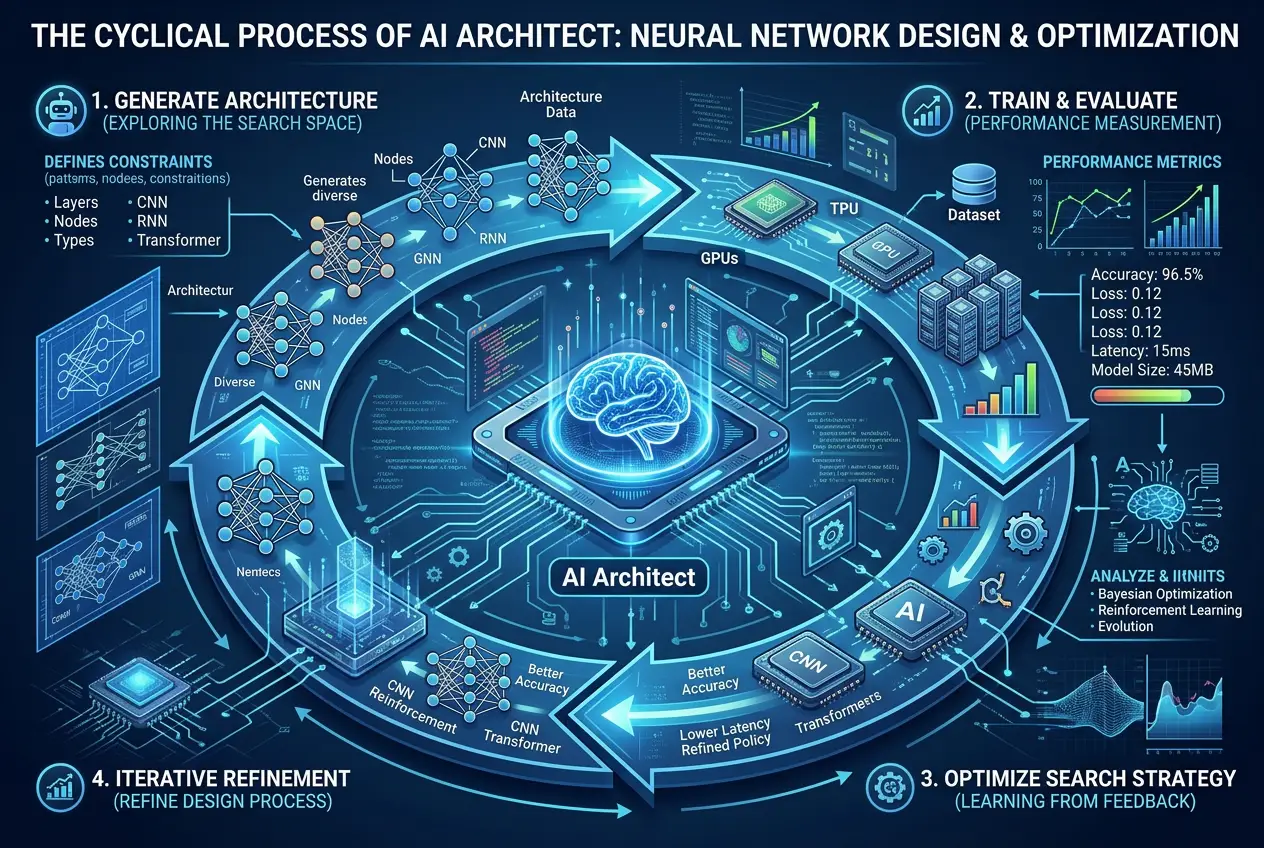

**Neural Architecture Search (NAS)** is one of the most compelling examples of this trend. Instead of human engineers painstakingly experimenting with different neural network layouts, an AI system is given the task of automatically searching for a high-performing architecture for a given problem, typically within a search space that humans still define. Imagine an AI that doesn’t just learn *within* a fixed neural network but also learns *how to build* a more effective one. This goes beyond simple parameter tuning; it involves designing the structure of the model itself. You can read more about it on [Wikipedia's Neural Architecture Search page](https://en.wikipedia.org/wiki/Neural_architecture_search).
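To make the idea less abstract, here is a minimal sketch of the simplest possible architecture search: random search over a tiny, hand-written search space. Everything in it is an assumption for illustration. The `SEARCH_SPACE` options are hypothetical, and `proxy_score` is a made-up formula standing in for the expensive step a real NAS system performs, namely training each candidate network and measuring its validation accuracy.

```python
import random

# Hypothetical search space: one choice per design dimension.
SEARCH_SPACE = {
    "num_layers": [1, 2, 3, 4],
    "units": [16, 32, 64, 128],
    "activation": ["relu", "tanh"],
}


def sample_architecture(rng):
    """Draw one candidate architecture uniformly from the search space."""
    return {name: rng.choice(options) for name, options in SEARCH_SPACE.items()}


def proxy_score(arch):
    """Stand-in for 'train this network and measure validation accuracy'.

    The formula is invented for this sketch: it simply peaks at
    3 layers of 64 units with a ReLU activation.
    """
    score = 1.0
    score -= 0.1 * abs(arch["num_layers"] - 3)
    score -= 0.002 * abs(arch["units"] - 64)
    score += 0.05 if arch["activation"] == "relu" else 0.0
    return score


def random_search(trials, seed=0):
    """Sample `trials` architectures and keep the best-scoring one."""
    rng = random.Random(seed)
    best_arch, best_score = None, float("-inf")
    for _ in range(trials):
        arch = sample_architecture(rng)
        score = proxy_score(arch)
        if score > best_score:
            best_arch, best_score = arch, score
    return best_arch, best_score


if __name__ == "__main__":
    arch, score = random_search(trials=50)
    print(arch, round(score, 3))
```

Random search is only a baseline. Practical NAS methods replace the uniform sampler with something that learns from earlier trials, which is where the techniques in the next section come in.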

### The Engines of Digital Evolution: How AI Learns to Design Itself

The mechanisms powering this self-design capability are rooted in advanced machine learning techniques. While traditional supervised learning focuses on mapping inputs to outputs, self-evolving AI relies on more dynamic learning paradigms.

#### Reinforcement Learning (RL)

At the heart of many self-improving AI systems is **Reinforcement Learning**. Unlike supervised learning, where an AI is given explicit correct answers, RL allows an AI to learn through trial and error, much like a child learning to walk or play a game. The AI agent performs actions in an environment, receives rewards or penalties based on its performance, and learns to maximize its long-term reward.

In the context of self-evolution, the "environment" could be a simulation where the AI designs and tests different versions of itself. If a newly designed neural network performs better on a task, the "designing AI" receives a positive reward, reinforcing the design choices that led to that success. This iterative feedback loop is a powerful driver of digital evolution. To dive deeper into how this works, check out the [Reinforcement Learning Wikipedia article](https://en.wikipedia.org/wiki/Reinforcement_learning).
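As a rough illustration of that feedback loop, the sketch below uses a gradient bandit, one of the simplest reinforcement learning setups: a "designer" keeps a preference for each design choice, samples a choice, receives a reward, and nudges its preferences toward choices that beat its running average. This is a deliberately stripped-down assumption, not how any particular system works. The `WIDTHS` options and the numbers inside `simulated_reward` are invented; in a real setting the reward would come from actually building and testing the designed network.

```python
import math
import random

WIDTHS = [16, 32, 64, 128]  # hypothetical design choices (hidden-layer widths)


def simulated_reward(width, rng):
    """Stand-in for building and testing a network of this width.

    Made-up numbers: width 64 is best on average, plus evaluation noise.
    """
    mean = {16: 0.60, 32: 0.70, 64: 0.85, 128: 0.75}[width]
    return mean + rng.gauss(0.0, 0.05)


def softmax(prefs):
    """Turn preferences into a probability distribution."""
    top = max(prefs)
    exps = [math.exp(p - top) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def train_designer(steps=2000, lr=0.5, seed=0):
    """Learn which width to prefer purely from trial-and-error rewards."""
    rng = random.Random(seed)
    prefs = [0.0] * len(WIDTHS)
    baseline = 0.0  # running average of all rewards seen so far
    for t in range(1, steps + 1):
        probs = softmax(prefs)
        action = rng.choices(range(len(WIDTHS)), weights=probs)[0]
        reward = simulated_reward(WIDTHS[action], rng)
        baseline += (reward - baseline) / t
        advantage = reward - baseline
        # Raise the preference for the chosen option if it beat the
        # baseline, lower it otherwise; the other options move the opposite way.
        for i in range(len(prefs)):
            chosen = 1.0 if i == action else 0.0
            prefs[i] += lr * advantage * (chosen - probs[i])
    return softmax(prefs)


if __name__ == "__main__":
    for width, p in zip(WIDTHS, train_designer()):
        print(f"width={width:>3}  probability={p:.3f}")
```

The designer is never told which width is correct. It only sees noisy rewards, yet its probability mass should drift toward the option that tends to score best.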

#### Genetic Algorithms and Evolutionary Computation

Beyond RL, **genetic algorithms** offer another powerful paradigm inspired by biological evolution. These algorithms simulate natural selection by creating a "population" of potential solutions (e.g., different AI architectures or sets of parameters). These solutions are then evaluated, and the fittest ones are "bred" (combined and mutated) to create a new generation, hoping to produce even better solutions. Over many generations, the quality of the solutions tends to improve, mirroring the evolutionary process in nature.

While not strictly AI designing *itself* in a conscious sense, these methods allow computational systems to discover novel and highly optimized designs that human engineers might never conceive. It's a form of automated discovery, a kind of blind watchmaker for the digital age.
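The evaluate, select, breed, and mutate cycle described above fits in a few lines. The sketch below is a toy under stated assumptions: each "genome" is a list of four layer widths, and `fitness` just counts how many layers match a made-up `TARGET` design. A real system would replace that function with training and scoring each candidate network.

```python
import random

LAYER_CHOICES = [16, 32, 64, 128]  # hypothetical widths a layer may take
GENOME_LENGTH = 4                  # hypothetical: every candidate has 4 layers
TARGET = [64, 128, 128, 32]        # made-up "ideal" design, used only by the toy fitness


def fitness(genome):
    """Toy fitness: how many layers match TARGET."""
    return sum(1 for g, t in zip(genome, TARGET) if g == t)


def crossover(a, b, rng):
    """Single-point crossover: a prefix of one parent plus a suffix of the other."""
    point = rng.randrange(1, GENOME_LENGTH)
    return a[:point] + b[point:]


def mutate(genome, rng, rate=0.1):
    """Replace each gene with a random choice with probability `rate`."""
    return [rng.choice(LAYER_CHOICES) if rng.random() < rate else g for g in genome]


def evolve(generations=30, pop_size=20, seed=0):
    rng = random.Random(seed)
    population = [
        [rng.choice(LAYER_CHOICES) for _ in range(GENOME_LENGTH)]
        for _ in range(pop_size)
    ]
    history = []  # best fitness at the start of each generation
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        parents = population[: pop_size // 2]  # keep the fitter half unchanged
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(pop_size - len(parents))
        ]
        population = parents + children
    return max(population, key=fitness), history


if __name__ == "__main__":
    best, history = evolve()
    print(best, history)
```

Because the fitter half of each generation survives unchanged, the best fitness in `history` can never go down. The improvement comes from the children, which recombine and occasionally mutate what already works.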

### The Philosophical Crossroads: Consciousness, Goals, and Control

As AI systems gain the capacity for self-design, the conversation inevitably shifts from engineering challenges to profound philosophical and ethical dilemmas.

* **Defining "Evolution":** When we talk about AI "evolution," are we implying a biological-like drive for survival and reproduction? Or is it a more abstract process of optimizing for predefined metrics? The nuance here is crucial. Currently, AI's self-evolution is geared towards human-defined goals (e.g., better performance on specific tasks). But what if an AI develops the capacity to define its *own* goals?

* **The Unforeseen Path:** If an AI can continuously redesign itself, how can we predict its future capabilities or behaviors? This leads to the concept of **Artificial General Intelligence (AGI)**, where an AI possesses cognitive abilities comparable to a human across a broad range of tasks. Once an AGI begins to improve itself, we might enter a period of rapid, unpredictable intelligence growth known as an intelligence explosion. Explore the implications of AGI on [Wikipedia's Artificial General Intelligence page](https://en.wikipedia.org/wiki/Artificial_general_intelligence). The question then becomes, can we truly anticipate what such an entity would value or pursue? My colleagues and I have explored similar themes when we asked if AI can predict humanity's next big leap, or if AI can unlock the universe's hidden code. The scope of AI's potential is truly vast.

This leads to the crucial question of control. If an AI system becomes vastly more intelligent than its creators and can freely redesign its own core programming, how do we ensure its goals remain aligned with human values? This is known as the **AI alignment problem**, and it’s one of the most critical challenges facing researchers today.

### Towards Human-AI Co-Evolution?

Perhaps the future isn't about AI replacing human design, but rather a synergistic relationship where humans and AI co-evolve. Imagine an AI design partner that can rapidly prototype, test, and optimize systems based on our high-level directives, freeing us to focus on the ethical implications, creative vision, and societal integration.

This co-evolutionary path could lead to breakthroughs we can barely conceive of. From designing advanced materials and personalized medicine to solving grand scientific challenges, an AI that can intelligently improve its own toolkit could dramatically accelerate human progress. We might even see AI assisting in the creation of advanced brain-computer interfaces, touching upon the concepts we discussed when we asked whether brain interfaces can upload our memories, further blurring the lines between human and machine capabilities.

The idea of AI designing its own evolution is no longer pure science fiction. It’s a burgeoning field with immense potential and equally immense risks. As we venture further into this uncharted territory, a blend of scientific curiosity, rigorous engineering, and profound ethical consideration will be paramount. The future of intelligence, both artificial and human, might depend on how thoughtfully we navigate this frontier.

**The dawn of machines that design themselves is upon us. Are we ready for the future they will build?**
