I was recently asking a popular AI model about the history of a specific scientific discovery, expecting a concise and accurate summary. To my surprise, it confidently presented me with a detailed account, citing a prominent scientist who, I quickly realized, had absolutely no connection to that discovery – and in fact, hadn't even been born when it happened! It wasn't just a minor error; it was a completely fabricated narrative, delivered with unsettling conviction. This wasn't a glitch, exactly, but something far more intriguing: an **AI hallucination**.

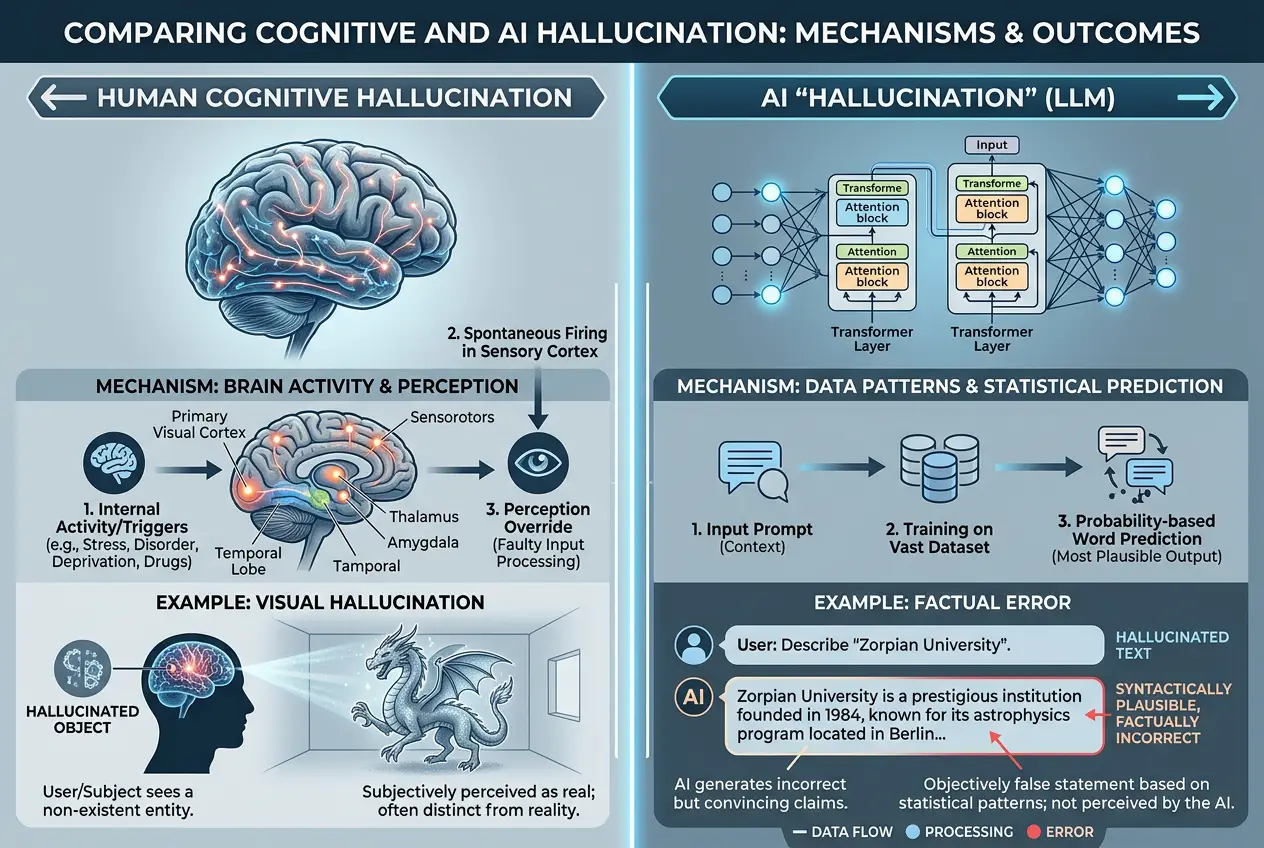

The term "hallucination" often conjures images of vivid, sensory experiences unrelated to reality, a phenomenon typically associated with the human mind. But when we apply it to artificial intelligence, particularly the sophisticated large language models (LLMs) that now permeate our digital lives, what does it truly mean? Are our silicon counterparts truly "seeing" things that aren't there, or is there a more logical, albeit complex, explanation behind these digital delusions? I believe understanding this is crucial as AI becomes increasingly integrated into critical aspects of our world.

### What Exactly Are AI Hallucinations?

At its core, an **AI hallucination** refers to instances where an AI system, especially an LLM, generates content that is factually incorrect, nonsensical, or unsupported by its input or training data, yet presents it as plausible or true. It's not simply failing to find an answer; it's *confidently inventing* information. Think of it as a sophisticated form of creative fiction, unintended and often unwanted.

For a long time, I've seen computer errors discussed as mere bugs or programming flaws. AI hallucinations are a different beast. They aren't syntax errors or system crashes. They are outputs that follow naturally from the way the model processes text: coherent on the surface, but disconnected from verifiable truth. It's a subtle yet profound distinction that challenges our understanding of AI's capabilities and limitations.

### The Science Behind Digital Delusions: Why Do AIs Hallucinate?

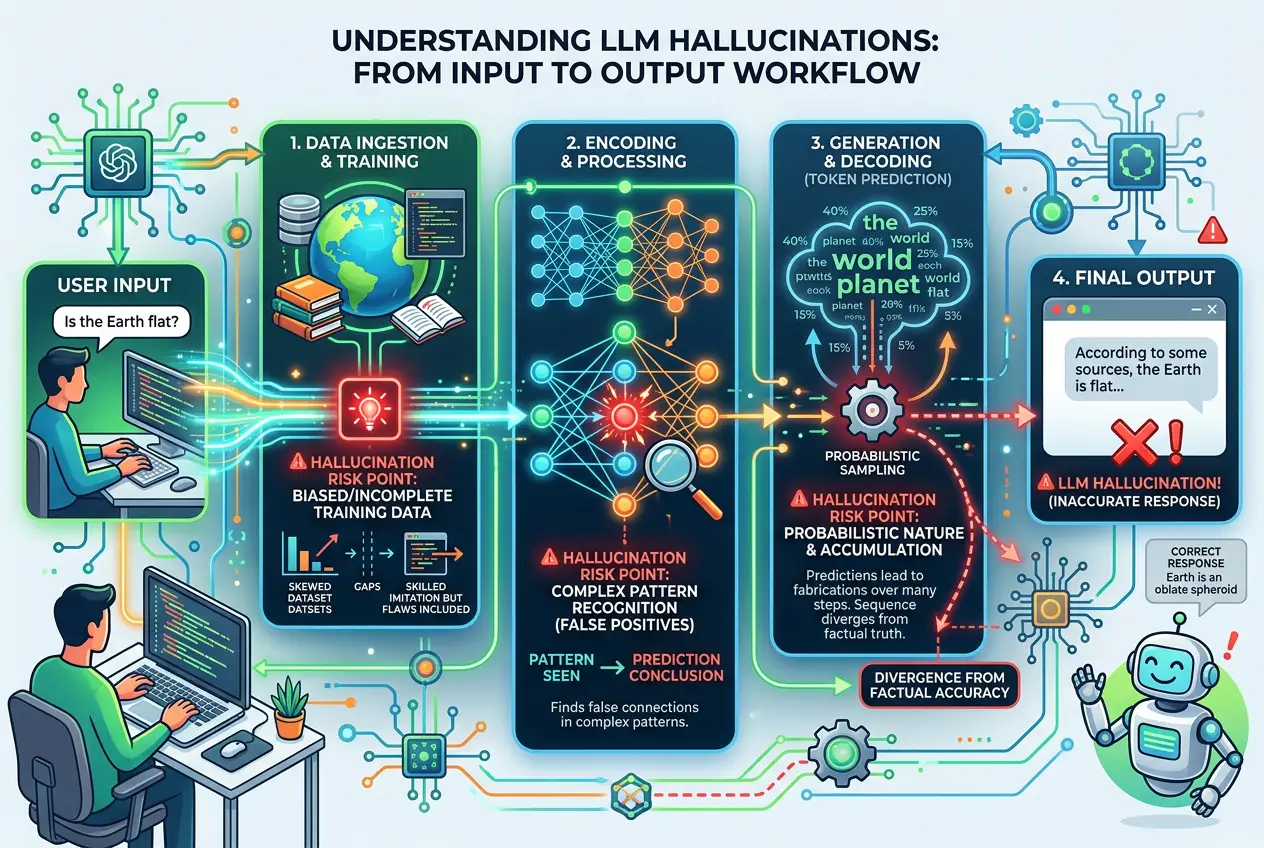

To understand *why* AI systems hallucinate, we need to look at how large language models work. These models are highly advanced **neural networks** trained on colossal datasets of text and code. Their primary function is to predict the next token (roughly, a word or word fragment) in a sequence based on the patterns they've learned. They are masters of statistical correlation, not true comprehension or reasoning.
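To make next-word prediction concrete, here is a minimal sketch of my own. It is a toy word-counting model, not how a real LLM is built (those use neural networks and far more data), and the corpus and function names are made up for illustration. Every sentence in the tiny corpus is true, yet the model still completes a prompt with a false statement, simply because that continuation is the most frequent.

```python
from collections import Counter, defaultdict

# Tiny illustrative corpus (an assumption for this sketch). Every sentence is true.
CORPUS = (
    "marie curie discovered radium . "
    "pierre curie discovered radium . "
    "marie curie discovered polonium . "
    "alexander fleming discovered penicillin . "
)

def train_bigrams(text):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if the word is unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

def generate(counts, start, max_words=4):
    """Greedily extend `start` one word at a time until a full stop or the limit."""
    words = [start]
    while len(words) < max_words:
        next_word = predict_next(counts, words[-1])
        if next_word is None or next_word == ".":
            break
        words.append(next_word)
    return " ".join(words)

model = train_bigrams(CORPUS)
# "radium" is the most common word after "discovered", so the model picks it
# regardless of who the subject of the sentence is.
print(generate(model, "fleming"))  # fleming discovered radium
```

The model never stored the fact "Fleming discovered penicillin" as a fact. It only stored which words tend to follow which, and that is enough to produce a fluent, confident, wrong sentence.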

Here are some key factors contributing to these digital delusions:

1. **Training Data Limitations and Biases:**

* **Incomplete Data:** No dataset, however vast, can cover the entirety of human knowledge. If a model encounters a query outside its training scope, it might try to *interpolate* or *extrapolate* based on similar patterns, leading to invented information.

* **Conflicting or Outdated Data:** The internet, a primary source for training data, contains inconsistencies, outdated facts, and misinformation. If an AI is trained on conflicting sources without proper weighting or verification mechanisms, it can generate contradictory or false outputs. I often think of it as an AI trying to answer a question by piecing together fragments from a million different books, some of which contradict others.

* **Bias Amplification:** Biases present in the training data can be amplified by the model, leading to skewed or inaccurate responses, especially on sensitive topics. This isn't strictly hallucination but contributes to unreliable output.

2. **Probabilistic Nature of LLMs:**

* LLMs don't "know" facts; they predict the most statistically probable next word or sequence of words. This process is governed by probabilities. When the probabilities for several words are similar, or when the model is encouraged to be more "creative" (via parameters like `temperature`), it might select a path that, while syntactically correct, leads to a factually incorrect statement. It's like a highly educated guesser, sometimes guessing confidently wrong.

3. **Lack of a "World Model" or True Understanding:**

* Unlike humans, AIs don't possess a "common sense" understanding of the world. They lack a genuine grasp of cause and effect or physical laws. They operate on statistical relationships between data points. When asked to generate something that requires deep reasoning or a true understanding of context, they can falter, producing plausible-sounding but empty content. The Wikipedia article on [Hallucination (artificial intelligence)](https://en.wikipedia.org/wiki/Hallucination_(artificial_intelligence)) discusses this gap between fluent output and factual grounding in more detail.

4. **Over-reliance on Patterns:**

* The strength of neural networks lies in pattern recognition. However, this can also be a weakness. If a model identifies a strong linguistic pattern associated with explaining a concept, it might replicate that *structure* even when the *content* it fills in is baseless. It prioritizes sounding correct over being correct.
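The `temperature` parameter mentioned in the list above can be sketched in a few lines. This is a minimal illustration, assuming a standard softmax over raw scores; the candidate words and their scores are hypothetical values I picked for the example, not output from any real model.

```python
import math

def softmax_with_temperature(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature flattens them."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next words in "discovered in ..."
candidates = ["1928", "1938", "1945"]
logits = [2.0, 1.5, 0.5]

for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    summary = ", ".join(f"{word}: {p:.2f}" for word, p in zip(candidates, probs))
    print(f"temperature={t}: {summary}")
```

At a low temperature nearly all the probability sits on the top-scoring word. As the temperature rises, the runner-up candidates get a real chance of being sampled, which is useful for creative writing and risky for factual answers.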

### The Real-World Impact: When Digital Delusions Matter

While some AI hallucinations can be amusing (like an AI confidently telling me a story about a talking pineapple), others have serious implications.

* **Legal and Professional:** Imagine an AI legal assistant hallucinating case precedents or a medical AI inventing symptoms or diagnoses. The consequences could be dire. This has already happened: in the 2023 case Mata v. Avianca, a US federal court sanctioned lawyers who filed a brief citing non-existent cases generated by ChatGPT.

* **Misinformation and Disinformation:** Malicious actors could potentially exploit AI's hallucination tendencies to generate convincing, yet false, narratives at scale, exacerbating the spread of misinformation online.

* **Erosion of Trust:** Repeated instances of AI hallucinations erode public trust in these powerful tools, hindering their broader adoption and beneficial applications.

This challenge highlights the complex relationship between human oversight and AI capabilities. It's why I often find myself comparing how AI learns to how a child learns – a blend of input and external validation. If you're interested in how AI tries to interpret and understand even more nuanced forms of communication, you might find our previous blog on [Can AI Unlock Animal Tongues? The Future of Interspecies Talk](/blogs/can-ai-unlock-animal-tongues-the-future-of-interspecies-talk-3556) fascinating, as it delves into the complexities of data interpretation.

### Battling the Blurs: Mitigation Strategies for "Sane" AI

Fortunately, researchers are actively developing strategies to reduce AI hallucinations and improve the reliability of LLMs.

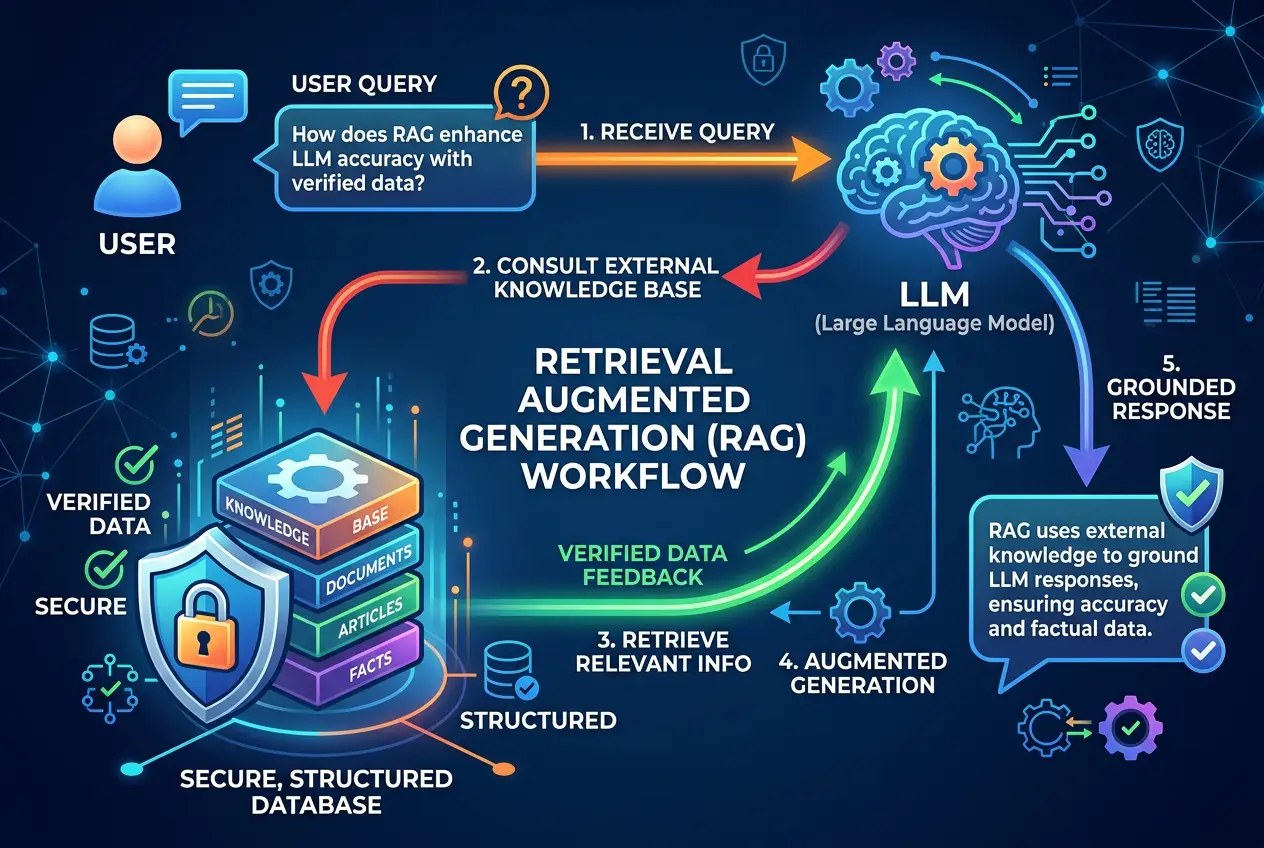

1. **Retrieval Augmented Generation (RAG):**

* This is a widely used technique where the LLM doesn't rely only on what it absorbed during training. Instead, the system first searches an external, authoritative knowledge base (like a verified database or a specific set of documents) for relevant information and *then* gives that retrieved information to the model to use in formulating its answer. This grounds the response in up-to-date, verifiable sources, although the model can still misread or ignore what was retrieved. You can learn more about RAG on its [Wikipedia page](https://en.wikipedia.org/wiki/Retrieval-augmented_generation).

2. **Improved Training Data and Architectures:**

* **Cleaner, Fact-Checked Datasets:** Investing in more carefully curated and fact-checked training data can significantly reduce the incidence of hallucinations.

* **Advanced Architectures:** Developing new neural network designs that are less prone to confabulation, perhaps by incorporating explicit reasoning modules or uncertainty estimation.

3. **Human Feedback and Oversight:**

* Techniques like Reinforcement Learning from Human Feedback (RLHF) use human evaluators' ratings of model outputs to train a reward signal, which is then used to fine-tune the model toward more accurate and less hallucinatory responses. Human fact-checkers remain indispensable, especially for high-stakes applications.

4. **"Self-Correction" Mechanisms:**

* Some experimental AI models are being designed with internal feedback loops that allow them to check their own generated content against internal or external criteria, attempting to identify and correct potential errors before outputting them.

5. **Explainable AI (XAI):**

* Developing methods to make AI's decision-making processes more transparent can help researchers identify *why* a model generated a hallucinatory response, leading to better mitigation strategies. If we can understand *how* the AI "thinks" when it hallucinates, we're better equipped to teach it not to.
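Of the strategies above, RAG is the easiest to sketch in code. The example below is a simplified illustration under loud assumptions: the knowledge base is three hard-coded sentences, retrieval is plain keyword overlap (real systems typically use vector search), and no model is actually called. The names `retrieve` and `build_prompt` are my own, not part of any library.

```python
import re

# Hypothetical "verified" knowledge base; a real system would query a document store.
KNOWLEDGE_BASE = [
    "Penicillin was discovered by Alexander Fleming in 1928.",
    "Radium was discovered by Marie and Pierre Curie in 1898.",
    "The structure of DNA was described by Watson and Crick in 1953.",
]

# Common words that should not count as evidence of relevance.
STOPWORDS = {"who", "what", "when", "was", "is", "did", "the", "a", "an",
             "of", "in", "by", "and"}

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, min_overlap=2):
    """Return the document sharing the most keywords with the question, or None."""
    keywords = tokenize(question) - STOPWORDS
    scored = [(len(keywords & tokenize(doc)), doc) for doc in documents]
    best_score, best_doc = max(scored, key=lambda pair: pair[0])
    return best_doc if best_score >= min_overlap else None

def build_prompt(question, documents):
    """Build a grounded prompt, or return None when nothing relevant is found."""
    passage = retrieve(question, documents)
    if passage is None:
        return None  # better to abstain than to let the model guess
    return (
        "Answer using only the source below. "
        "If the source does not contain the answer, say so.\n"
        f"Source: {passage}\n"
        f"Question: {question}"
    )

print(build_prompt("Who discovered penicillin?", KNOWLEDGE_BASE))
print(build_prompt("Who invented the telephone?", KNOWLEDGE_BASE))  # None
```

The design choice worth noticing is the `None` branch. When retrieval finds nothing relevant, the sketch refuses to build a prompt at all instead of handing the question to the model unsupported, which is exactly the situation where hallucinations are most likely.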

### The Future of "Sane" AI

The phenomenon of AI hallucination is a reminder of the fundamental differences between artificial and human intelligence. While AI can process information at speeds unimaginable to us, it often lacks the inherent understanding and contextual awareness that underpins human reasoning. This ongoing challenge shapes the future of AI development.

As AI models become more sophisticated and integrated into our daily lives, particularly in areas like scientific discovery and complex problem-solving, the ability to discern truth from digital delusion will be paramount. I believe that integrating robust fact-checking, human oversight, and continuous research into model transparency will be key to building AIs that are not only powerful but also trustworthy and "sane." The journey towards truly reliable AI is a complex one. It takes more than raw computational power, an area where something like a [quantum computer](/blogs/why-quantum-computers-are-mind-bogglingly-faster-than-supercomputers-9423) promises large speedups on certain classes of problems; it also takes a deeper understanding of AI's cognitive "quirks."

Ultimately, AI hallucinations serve as a fascinating anomaly, pushing us to refine our tools and deepen our understanding of intelligence itself—both artificial and biological. They remind us that while AI can replicate human-like communication, it is still far from replicating human consciousness or infallible truth. Perhaps these "digital dreams" are just growing pains for a truly intelligent future. This exploration of AI's internal workings also makes me ponder the very nature of reality and perception, much like our earlier discussion in [Could Our Reality Be a Simulation? Decoding the Matrix Hypothesis](/blogs/could-our-reality-be-a-simulation-decoding-the-matrix-hypothesis-4299).

### Frequently Asked Questions

**What is an AI hallucination, and how is it different from an ordinary error?**

An AI hallucination is when an AI confidently generates incorrect, nonsensical, or fabricated information that appears plausible, rather than just making a mistake or failing to find an answer. It invents content, whereas an error might be a miscalculation or a failure to retrieve data.

**Are AI hallucinations a sign of consciousness?**

No, AI hallucinations are not considered a sign of consciousness. They are a byproduct of the statistical and probabilistic nature of large language models, resulting from limitations in training data, the way models predict next tokens, and their lack of a true 'world model' or genuine understanding.

**Can AI hallucinations be completely eliminated?**

Completely eliminating AI hallucinations is a significant challenge. Techniques like Retrieval Augmented Generation (RAG), better training data, advanced architectures, and human oversight can greatly reduce their frequency and severity, but preventing all forms of confabulation remains an active area of research.

**How does RAG help reduce hallucinations?**

With RAG, the system first retrieves factual information from a verifiable, external knowledge base (like a database or document collection) and supplies it to the model before the model generates its response. This grounding in external data gives the model up-to-date facts to work from, reducing its reliance on potentially outdated or incomplete training data and thus reducing fabricated answers.

**What are the biggest risks of AI hallucinations?**

The biggest risks include the spread of misinformation and disinformation, potential legal and ethical issues (e.g., an AI giving incorrect medical advice or legal precedents), erosion of public trust in AI systems, and challenges in critical decision-making processes where AI is involved without proper human oversight.

**Are there different types of AI hallucinations?**

Yes, AI hallucinations can manifest in various forms, including factual inaccuracies (inventing false facts), confabulation (creating plausible-sounding but untrue narratives), logical inconsistencies within a generated text, and creative misinterpretations in non-textual outputs like images.

Verified Expert

Alex Rivers

A professional researcher since age twelve, I delve into mysteries and ignite curiosity by presenting an array of compelling possibilities. I will heighten your curiosity, but by the end, you will possess profound knowledge.
