I’ve often wondered about the unseen forces that sculpt our digital reality. We live in a world saturated with information, from the instantaneous messages we send across continents to the complex algorithms that power our AI. But have you ever paused to consider who laid the groundwork for this intricate web? Who first dared to measure communication itself? For me, that question leads directly to one towering figure: Claude Elwood Shannon. This is a long post that delves into the life and work of the man often hailed as the "Father of Information Theory," whose quiet brilliance in the mid-20th century shaped the architecture of our modern digital age. We'll trace his journey from a curious child building gadgets to the Bell Labs researcher who put the "bit" at the center of engineering and reshaped our understanding of information and communication.

An Early Spark: Puzzles, Gadgets, and a Curious Childhood

Claude Shannon was born in 1916 in Gaylord, Michigan, a small town that offered ample space for a curious mind to wander and invent. His father was a businessman and a judge, with an inclination for science and mathematics, while his mother was a language teacher. It seems the seeds of both analytical thought and a deep appreciation for structured communication were sown early. As a child, Shannon exhibited a remarkable aptitude for mechanical and electrical devices. He was the kind of kid who spent more time in his workshop tinkering with radios, telegraphs, and even building remote-controlled boats and a telegraph system to a friend's house, than playing conventional games. This hands-on, problem-solving approach to the physical world was a defining characteristic of his early life and would profoundly influence his later abstract theoretical work.

I often reflect on how early passions can predict future genius. For Shannon, this fascination wasn't just about building; it was about understanding how things *worked*, how signals traveled, and how information was conveyed. His early experiments, though seemingly simple, were precursors to the complex challenges he would tackle at the forefront of the burgeoning field of electronics and communication. He grew up in an era where the telephone was still a marvel, radio was new, and television was a distant dream. The world was just beginning to grasp the potential of electronic communication, and young Claude was already dissecting its fundamental principles in his backyard.

MIT & The Boolean Breakthrough: Wiring Logic into Machines

Shannon's academic journey took him to the University of Michigan, where he earned bachelor's degrees in electrical engineering and mathematics in 1936. His brilliance was undeniable, and it led him to the prestigious Massachusetts Institute of Technology (MIT) for his graduate studies. It was there, while working on a mechanical differential analyzer – an early analog computer – that Shannon had a revelation that would forever link the abstract world of logic with the tangible realm of electrical circuits.

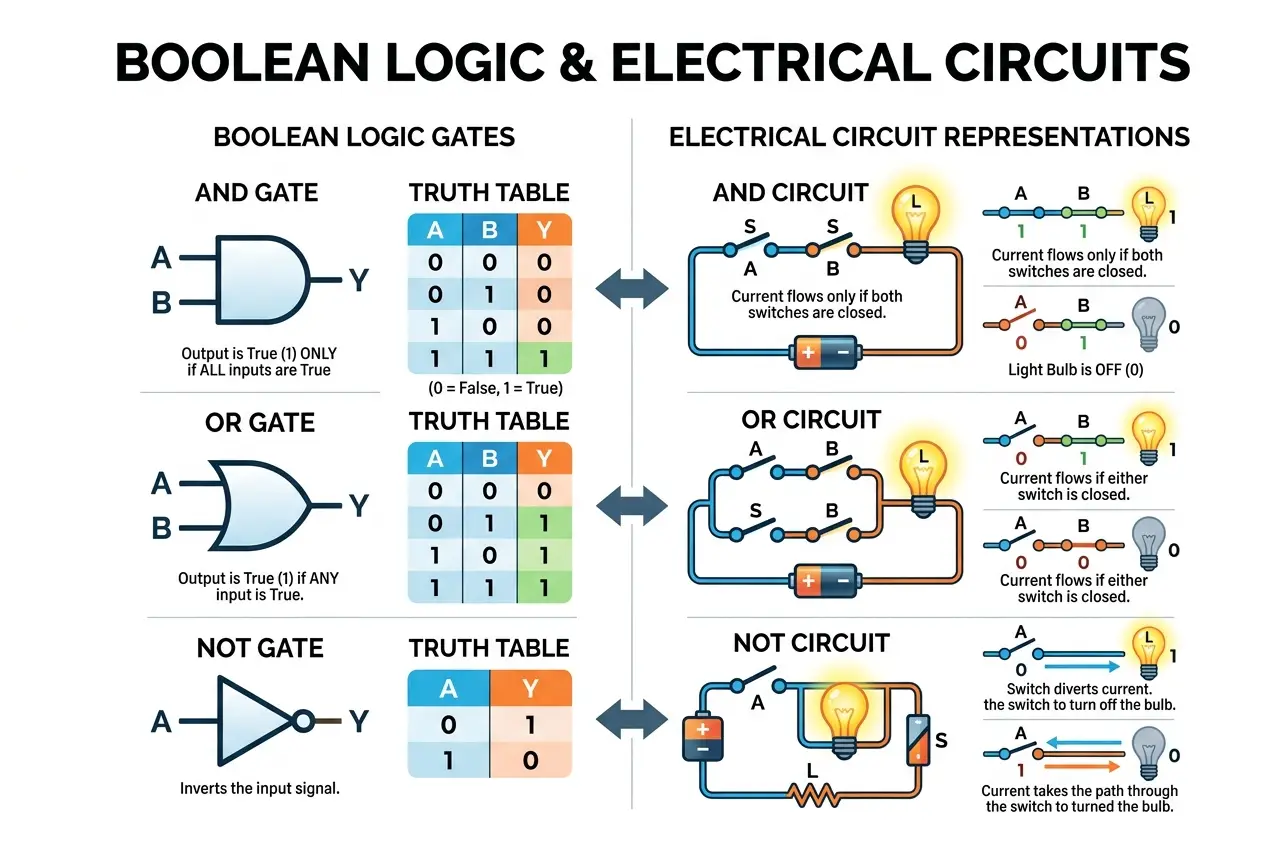

In 1937, at the age of 21, Shannon completed his master's thesis, "A Symbolic Analysis of Relay and Switching Circuits," which was published the following year. This wasn't just any thesis; it was a watershed moment in the history of technology. Shannon demonstrated that **Boolean algebra**, a mathematical system of logic developed by George Boole in the 19th century, could be applied directly to the design of electrical switching circuits. He showed that circuits involving relays could represent true/false statements, and that combinations of these circuits could perform logical operations like AND, OR, and NOT. This meant that complex logical problems could be solved by designing appropriate electrical circuits.
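To make the switch-to-logic mapping concrete, here is a minimal sketch in Python. It uses the modern convention that a closed switch is `True`; the function names (`series`, `parallel`, `inverted`, `xor_circuit`) are illustrative choices of mine, not notation from Shannon's thesis.

```python
from itertools import product

def series(a: bool, b: bool) -> bool:
    """Two switches in series: current flows only if both are closed (AND)."""
    return a and b

def parallel(a: bool, b: bool) -> bool:
    """Two switches in parallel: current flows if either is closed (OR)."""
    return a or b

def inverted(a: bool) -> bool:
    """A normally-closed relay contact: conducts when the relay is not energized (NOT)."""
    return not a

def xor_circuit(a: bool, b: bool) -> bool:
    """Exclusive-or wired up from nothing but the three primitives above."""
    return parallel(series(a, inverted(b)), series(inverted(a), b))

# Print the truth table of the composed circuit.
for a, b in product([False, True], repeat=2):
    print(a, b, xor_circuit(a, b))
```

The point of the sketch is that once series, parallel, and inverted contacts are treated as AND, OR, and NOT, any circuit can be written down and simplified as an algebraic expression before anything is wired.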

I can only imagine the intellectual thrill of that discovery. Before Shannon, circuit design was largely an art, relying on intuition and trial-and-error. After Shannon, it became a science, a systematic process based on elegant mathematical principles. His thesis, famously described as "possibly the most important master's thesis of the century," became the foundational text for all digital circuit design, including the processors that power our smartphones and supercomputers today. It was the moment logic itself became programmable, and it directly paved the way for the invention of the digital computer. You can read more about Boolean algebra's foundational role in computing on its [Wikipedia page](https://en.wikipedia.org/wiki/Boolean_algebra).

The Crossroads of War: Cryptography, Circuits, and Classified Ingenuity

After completing his Ph.D. at MIT in 1940 and spending a year at the Institute for Advanced Study in Princeton, Shannon joined Bell Telephone Laboratories in 1941, a hub of scientific and engineering innovation. His arrival coincided with the United States' entry into World War II, and Bell Labs, like many research institutions, turned its formidable intellect towards the war effort. Shannon's unique blend of mathematical prowess and electrical engineering acumen made him invaluable. During this period, much of his work was highly classified, focusing on cryptography and fire control systems.

He also crossed paths with luminaries like Alan Turing, who visited Bell Labs in 1943. Shannon's work on secure communication and the analysis of cipher systems further sharpened his understanding of information, noise, and redundancy, concepts that would later become central to his general theory of communication. He explored the statistical properties of language and encryption, developing ways to measure the "information content" of messages and the effectiveness of various cryptographic methods. This was practical, high-stakes information theory in action, well before the formal theory was published. It was during these intense years that he deepened his insights into how information could be encoded, transmitted, and protected, even in the presence of deliberate interference or "noise."

I find it fascinating how the urgency of war often accelerates scientific progress. The need for unbreakable codes and reliable communication systems pushed Shannon to explore the very limits of what was possible with information. This experience undoubtedly shaped his later, more abstract formulations, grounding them in the practical realities of real-world communication challenges. His wartime contributions, though largely unheralded at the time due to their secrecy, were a crucial crucible for the ideas that would soon revolutionize the world.

Birth of a Revolution: "A Mathematical Theory of Communication" (1948)

In 1948, Claude Shannon unveiled his magnum opus: "A Mathematical Theory of Communication," published in the *Bell System Technical Journal*. This single paper, later expanded into a book with Warren Weaver, wasn't just a publication; it was a declaration, a blueprint for the entire digital age. It provided a universal framework for understanding communication, regardless of the specific medium – be it telegraph, telephone, radio, or even human language.

Shannon broke down the communication process into discrete components: an **information source** (the message), a **transmitter** (encoder), a **channel** (the medium), a **receiver** (decoder), and a **destination**. Critically, he also introduced the concept of **noise**, any interference that distorts the message during transmission. For the first time, information was treated not as content or meaning, but as a measurable entity, independent of semantics. This was a radical departure, allowing engineers to design systems to transmit information efficiently and reliably, without needing to understand *what* the information actually meant.

I believe this abstraction was Shannon's greatest genius. By separating information from its meaning, he created a mathematical language that could describe any communication system. This allowed for the development of universally applicable principles. It's like a linguist developing a grammar that works for all languages, regardless of vocabulary. The impact of this shift in perspective cannot be overstated. It transformed communication from an art to a precise science, laying the theoretical foundation for everything from the internet to digital video and audio.

The Atom of Information: Understanding the 'Bit'

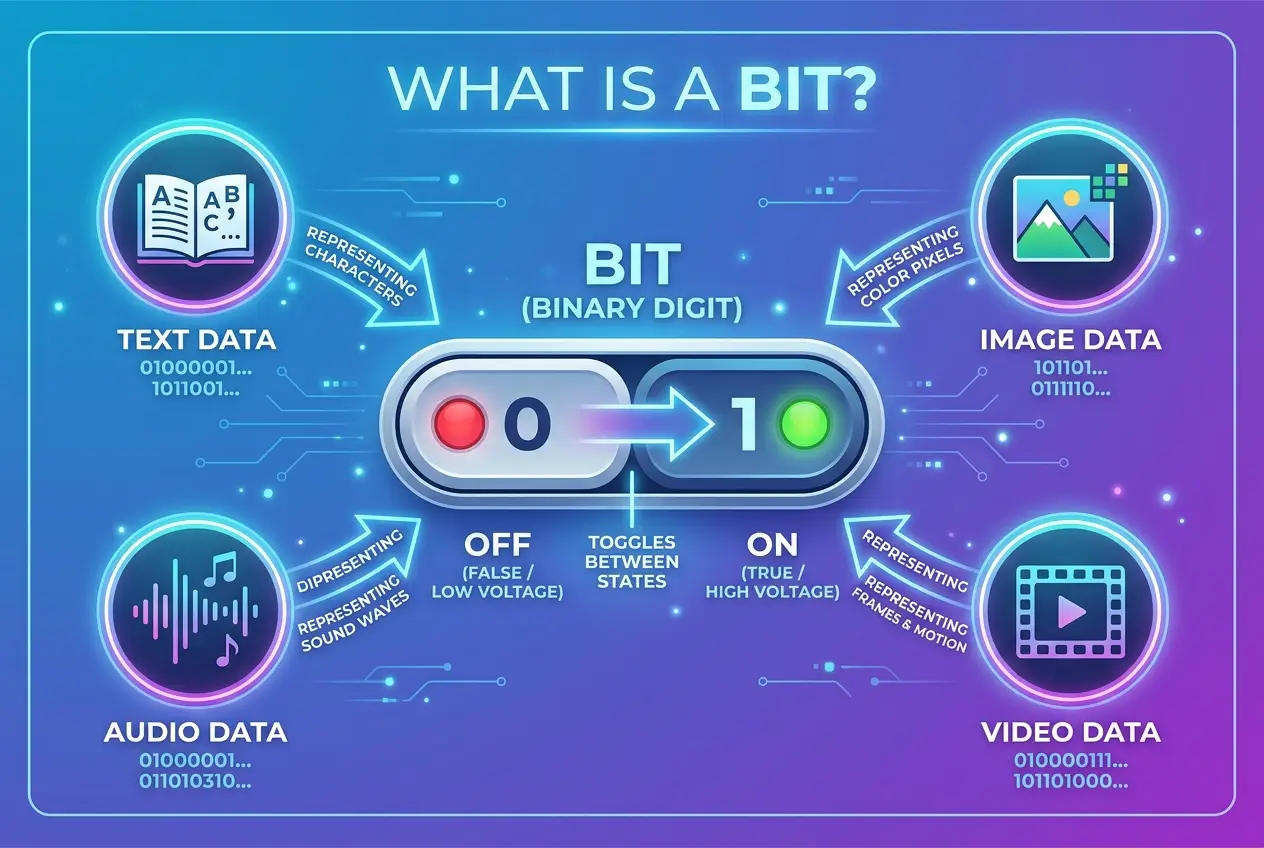

Perhaps Shannon's most enduring and ubiquitous contribution is the concept of the **bit** (binary digit). While the term itself was coined earlier by John Tukey, it was Shannon who firmly established its meaning and centrality within information theory. A bit is the fundamental unit of information, representing a choice between two equally likely outcomes – like a coin flip, a switch being on or off, or a true or false statement.

In his theory, Shannon showed that any piece of information, no matter how complex (a photograph, a song, a book), could ultimately be broken down and encoded into a sequence of these binary choices. This simple, elegant idea underpins all digital technology. When I type on my keyboard, each character is converted into a series of bits. When I stream a movie, it's a torrent of bits. Without the bit, as defined and utilized by Shannon, the digital revolution simply couldn't have happened. It provided a quantifiable way to measure the amount of information in a message.
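As a small illustration of text becoming bits, the sketch below turns a string into its binary digits and back. It assumes UTF-8 as the character encoding, which is a modern convention and not something from Shannon's paper; `to_bits` and `from_bits` are hypothetical helper names.

```python
def to_bits(text: str) -> str:
    """Encode text as UTF-8 bytes and show each byte as eight binary digits."""
    return " ".join(f"{byte:08b}" for byte in text.encode("utf-8"))

def from_bits(bits: str) -> str:
    """Reverse the process: parse each group of eight digits back into a byte."""
    return bytes(int(chunk, 2) for chunk in bits.split()).decode("utf-8")

encoded = to_bits("Hi")
print(encoded)             # 01001000 01101001
print(from_bits(encoded))  # Hi
```

Two characters become sixteen binary choices, and the round trip recovers the original text exactly.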

This was revolutionary because it gave engineers a concrete metric. How much data can this cable carry? How much storage does this image require? How much information is lost due to static? The answers could now be expressed in bits, providing a universal currency for the digital realm. This quantifiable approach allowed for optimization, enabling us to transmit more information with less error, and to store vast quantities of data efficiently. The bit became the atomic building block of our information universe. To dive deeper into the definition and impact of the bit, check out the [Wikipedia article on the binary digit](https://en.wikipedia.org/wiki/Bit).

Information Entropy: Measuring Uncertainty, Quantifying Knowledge

One of the more profound and counter-intuitive aspects of Shannon's theory is his concept of **information entropy**. Drawing inspiration from Ludwig Boltzmann's work on thermodynamic entropy, Shannon adapted the idea to measure the *uncertainty* or *randomness* inherent in a message or an information source. The more uncertain or unpredictable a message is, the more information it contains. Conversely, a completely predictable message (e.g., "The sun rises in the east, the sun rises in the east...") contains very little new information because it doesn't resolve much uncertainty.

I often think of it this way: if someone tells me something I already know, I've gained zero information. If they tell me something completely unexpected, I've gained a lot. Shannon formalized this. The entropy of a message source, measured in bits per symbol, quantifies the average amount of information produced by that source. This concept became crucial for data compression. If a message has high redundancy (low entropy), it can be compressed without losing information. If it has high entropy (randomness), it's already highly compressed and difficult to shrink further.
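The "bits per symbol" figure can be estimated for a short message by counting how often each symbol appears. The sketch below is a simplification: it treats symbols as independent and uses observed frequencies as probabilities, so it ignores the context effects that matter in real language. The function name is my own.

```python
from collections import Counter
from math import log2

def entropy_bits_per_symbol(message: str) -> float:
    """Empirical entropy H = sum(p * log2(1/p)) over the symbols in message."""
    counts = Counter(message)
    total = len(message)
    return sum((n / total) * log2(total / n) for n in counts.values())

print(entropy_bits_per_symbol("aaaaaaaa"))  # 0.0  (fully predictable)
print(entropy_bits_per_symbol("abababab"))  # 1.0  (one fair binary choice per symbol)
print(entropy_bits_per_symbol("abcdabcd"))  # 2.0  (four equally likely symbols)
```

A source that always emits the same symbol carries no information per symbol, while four equally likely symbols need two bits each, which matches the intuition about surprise in the paragraph above.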

This also has deep implications for fields like AI and machine learning. When a neural network is trained as a classifier, it is typically minimizing a cross-entropy loss, a measure built on Shannon's entropy that captures how far its predicted probabilities are from the actual data. It's learning to predict outcomes with less uncertainty. Shannon's entropy provides a mathematical tool to quantify this learning process, making it possible to optimize algorithms for efficiency and accuracy. It’s a measure of surprise, and in the world of information, surprise equals knowledge gained.
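For readers who want to see the link to machine learning in numbers, here is a minimal sketch of cross-entropy measured in bits. It assumes both inputs are probability lists of equal length and that every predicted probability is greater than zero; the function name is illustrative, and real ML libraries usually use natural logarithms instead.

```python
from math import log2

def cross_entropy_bits(true_dist, predicted_dist):
    """Average surprise, in bits, of seeing true_dist when expecting predicted_dist."""
    return sum(p * log2(1 / q)
               for p, q in zip(true_dist, predicted_dist) if p > 0)

label = [1.0, 0.0]  # the correct answer is class 0

unsure = cross_entropy_bits(label, [0.5, 0.5])     # model is guessing
confident = cross_entropy_bits(label, [0.9, 0.1])  # model leans the right way

print(unsure)     # 1.0
print(confident)  # smaller than 1.0
```

Training pushes this number down: the better the predicted probabilities match what actually happens, the less "surprised" the model is on average.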

The Channel Challenge: Limits, Noise, and the Shannon-Hartley Theorem

Every communication channel—whether a fiber optic cable, a radio wave, or even a conversation in a noisy room—has limitations. It can only transmit so much information, and it will always introduce some level of interference, which Shannon termed **noise**. This became a central problem he addressed: how much information can be reliably transmitted over a noisy channel?

His general answer was the noisy-channel coding theorem. Its best-known special case, for a band-limited channel with additive white Gaussian noise, is the **Shannon-Hartley Theorem**, a cornerstone of information theory. This theorem provides a mathematical formula for the maximum rate at which information can be transmitted reliably over a communications channel of a specified bandwidth in the presence of noise. This maximum rate is known as the **channel capacity**.

The formula looks like this:

`C = B log₂(1 + S/N)`

Where:

* `C` is the channel capacity in bits per second.

* `B` is the bandwidth of the channel in hertz.

* `S` is the average received signal power over the bandwidth.

* `N` is the average noise power or interference over the bandwidth.

* `S/N` is the signal-to-noise ratio (SNR).

This theorem is profoundly practical. It tells engineers the theoretical upper limit for data transmission. No matter how clever your encoding scheme, you cannot exceed this capacity. It’s a fundamental speed limit for information. I remember learning about this in my early studies; it gave me a real "aha!" moment about the physical constraints on our digital world. The theorem not only sets a limit but also implies that reliable communication *is* possible up to that limit, provided you have good enough coding. This directly inspired the field of error-correction coding. If you're interested in the deeper mathematics, Wikipedia has an excellent article on the [Shannon-Hartley theorem](https://en.wikipedia.org/wiki/Shannon%E2%80%93Hartley_theorem).
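Plugging numbers into the formula makes the "speed limit" tangible. The sketch below assumes the signal-to-noise ratio is given as a plain ratio (not decibels) and adds a small converter for the decibel figures engineers usually quote. The 3 kHz and 30 dB values are illustrative, loosely modeled on an analog telephone line, and the function names are my own.

```python
from math import log2

def db_to_linear(snr_db: float) -> float:
    """Convert a signal-to-noise ratio from decibels to a plain power ratio."""
    return 10 ** (snr_db / 10)

def channel_capacity_bps(bandwidth_hz: float, snr_linear: float) -> float:
    """Shannon-Hartley: C = B * log2(1 + S/N), in bits per second."""
    return bandwidth_hz * log2(1 + snr_linear)

# Illustrative channel: 3 kHz of bandwidth at 30 dB SNR (S/N = 1000).
capacity = channel_capacity_bps(3_000, db_to_linear(30))
print(f"{capacity:,.0f} bits per second")
```

With these assumed values the limit comes out at roughly 29,900 bits per second. Doubling the bandwidth doubles the capacity, while doubling the signal power only adds about one extra bit per second per hertz, which is why bandwidth is such a prized resource.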

Conquering Noise: The Dawn of Error-Correction Codes

One of the most powerful implications of Shannon's channel capacity theorem was the idea that it's possible to transmit information *reliably* over a noisy channel, provided the transmission rate is below the channel capacity. How? Through sophisticated encoding techniques, specifically **error-correction codes**.

Before Shannon, engineers often thought that increasing reliability meant slowing down communication or increasing signal power. Shannon proved that by adding controlled redundancy to a message *before* transmission, errors introduced by noise could be detected and even corrected at the receiver. Think of it like this: if I tell you my phone number once over a bad connection, you might mishear a digit. If I repeat it three times, you're much more likely to get it right, even if there's some static. Error-correction codes do this in a highly efficient, mathematical way.
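The phone-number analogy corresponds to the simplest error-correcting scheme, a repetition code with majority voting. The sketch below simulates it over a channel that flips each bit with a fixed probability. The 5% flip rate, the seed, and all function names are assumptions chosen for illustration.

```python
import random

def encode(bits, n=3):
    """Repeat every bit n times (the 'controlled redundancy')."""
    return [b for b in bits for _ in range(n)]

def noisy_channel(bits, flip_prob, rng):
    """Flip each bit independently with probability flip_prob."""
    return [b ^ 1 if rng.random() < flip_prob else b for b in bits]

def decode(received, n=3):
    """Majority vote over each group of n received bits."""
    return [int(sum(received[i:i + n]) > n // 2)
            for i in range(0, len(received), n)]

rng = random.Random(0)  # fixed seed so runs are repeatable
message = [rng.randint(0, 1) for _ in range(10_000)]

raw = noisy_channel(message, 0.05, rng)
coded = decode(noisy_channel(encode(message), 0.05, rng))

print("errors without coding:", sum(a != b for a, b in zip(message, raw)))
print("errors with 3x repetition:", sum(a != b for a, b in zip(message, coded)))
```

Repetition is deliberately crude: it cuts the data rate to one third for a modest gain in reliability. Shannon's result says far better trade-offs exist, and practical codes such as Reed-Solomon or LDPC get much closer to the capacity limit.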

This led to the entire field of coding theory. From the QR codes you scan daily to the robust data transmitted from deep-space probes like Voyager, error-correction codes are everywhere. They are what allow your Wi-Fi to work reliably, your digital TV to show a clear picture, and your hard drive to store data without corruption. I find it incredible that a theoretical limit spurred such practical, revolutionary technologies that we now take for granted. Without these codes, our digital world would be a chaotic mess of corrupted data and unreliable communication, making seamless experiences like browsing the web or using video conferencing almost impossible. For more on how these codes work, our blog post on "can-ai-decipher-gravitational-waves-secret-language-8845" touches upon decoding complex signals, a principle also relevant to coding theory.

The Information Age Blueprint: From Theory to Ubiquitous Technology

Shannon's theory wasn't just abstract mathematics; it was a practical blueprint for the information age. His concepts permeated every aspect of communication technology.

* **Digital Communication:** The very act of converting analog signals (like voice or images) into digital bits for transmission and storage is a direct application of Shannon's ideas.

* **Data Compression:** JPEGs, MP3s, ZIP files – all these compression standards are built upon understanding information entropy and redundancy, minimizing the number of bits needed to represent information without significant loss.

* **Networking:** The design of computer networks, from local area networks to the vast global internet, relies on optimizing channel capacity, managing noise, and ensuring reliable data packet delivery using error-correction. You can consider how the ideas of optimal information flow are explored in "is-the-internet-gaining-a-collective-mind-9582".

* **Storage:** From hard drives to flash memory, the efficiency and reliability of data storage are directly influenced by coding theory and Shannon's understanding of how to pack and protect bits.

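* **Cryptography:** His wartime analysis of secrecy systems was classified at the time and appeared publicly in 1949 as "Communication Theory of Secrecy Systems." Its insights into the statistical properties of information heavily influenced the development of modern secure communication protocols.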
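The data compression point in the list above is easy to check with Python's standard `zlib` module, which implements the DEFLATE scheme behind ZIP files. This sketch compares a highly repetitive message with random bytes of the same length; the sample text and the helper name `compression_ratio` are my own choices.

```python
import os
import zlib

def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (smaller means more redundancy removed)."""
    return len(zlib.compress(data, level=9)) / len(data)

redundant = b"the sun rises in the east. " * 200  # low entropy: very predictable
random_like = os.urandom(len(redundant))          # high entropy: no pattern to exploit

print(f"repetitive text: {compression_ratio(redundant):.3f}")
print(f"random bytes:    {compression_ratio(random_like):.3f}")
```

The repetitive text shrinks to a small fraction of its size, while the random bytes barely change or even grow slightly. That is entropy acting as a floor on lossless compression.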

I often think about how deeply ingrained Shannon's work is in our daily lives. Every time I open a web page, make a digital call, or stream a video, I'm interacting with systems designed using principles he articulated. His theory provided the common language and mathematical tools for engineers across disparate fields to collectively build the interconnected digital world we inhabit. It’s not an exaggeration to say that without Shannon, the internet as we know it would simply not exist.

Shannon's Machines: Juggling Robots, Chess Computers, and a Playful Genius

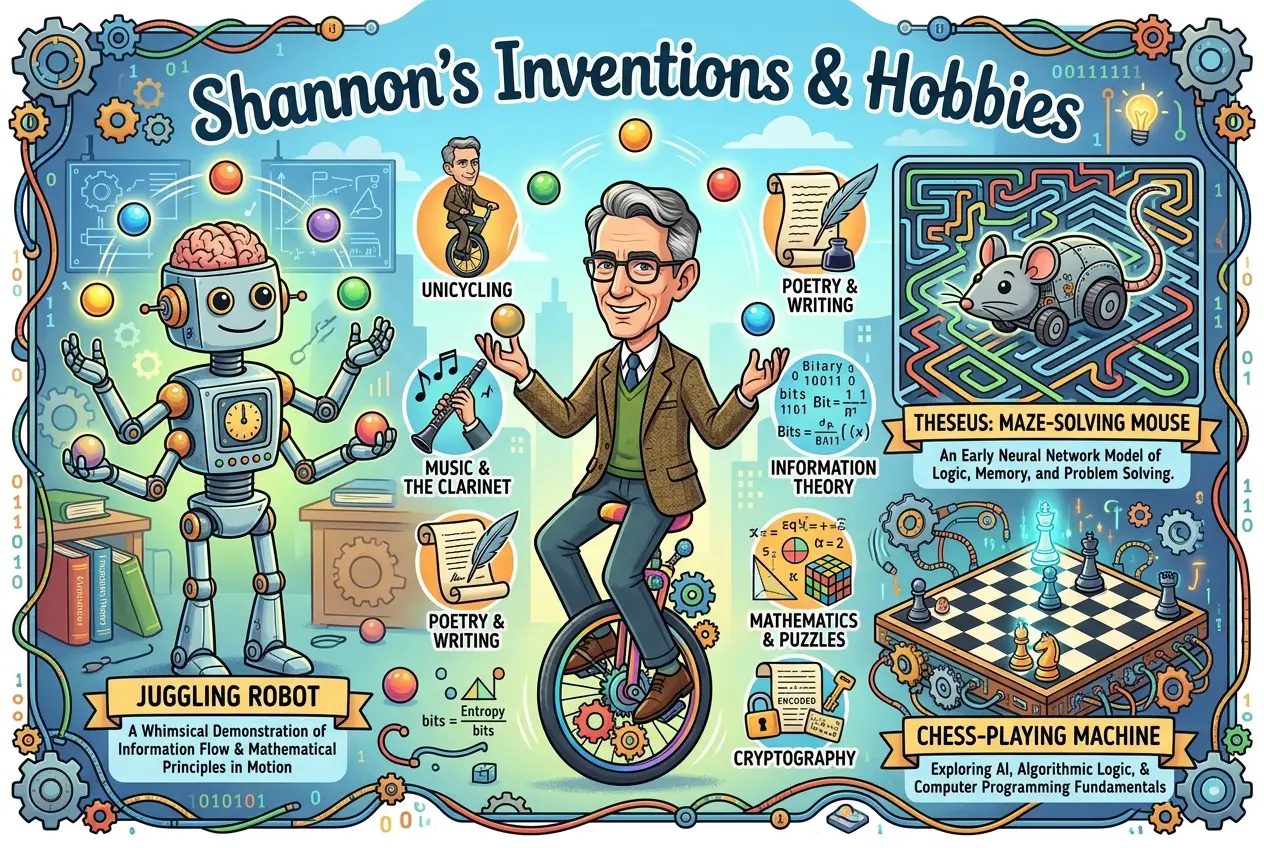

Beyond his profound theoretical contributions, Shannon was also an inveterate tinkerer and inventor with a wonderfully playful side. He wasn't just an abstract mathematician; he loved to build things, and many of his inventions reflected his fascination with information, logic, and automation.

One of his most famous creations was a **juggling machine**. This device, a marvel of electromechanical engineering, used a complex system of motors, cams, and sensors to precisely throw and catch three balls, demonstrating an early form of feedback control and robotic coordination. He also built a rocket-powered frisbee, a motorized pogo stick, and a device that could solve the Rubik's Cube.

Perhaps his most prescient invention was "Theseus," a mechanical mouse that could navigate a maze. Built in 1950, Theseus used a system of relays and switches to "learn" the maze, remembering the correct path to the goal. It was one of the earliest demonstrations of machine learning and problem-solving through trial and error, a direct precursor to modern AI algorithms. This little mouse was an embodiment of his ideas about information processing and decision-making within a limited system.
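The idea of exploring by trial and error and then remembering the successful route can be sketched in a few lines. This is a software analogy only: it uses a depth-first search on a tiny grid and does not model Theseus's actual relay circuitry. The maze layout and all names are hypothetical.

```python
MAZE = [
    "S.#",
    ".##",
    "..G",
]  # S = start, G = goal, # = wall, . = open floor

def find(maze, ch):
    """Return the (row, col) of the first cell containing ch."""
    for r, row in enumerate(maze):
        if ch in row:
            return (r, row.index(ch))
    raise ValueError(f"{ch!r} not in maze")

def explore(maze):
    """Trial-and-error search; returns a memory {cell: next_cell} for the route found."""
    start, goal = find(maze, "S"), find(maze, "G")
    rows, cols = len(maze), len(maze[0])
    visited = set()

    def dfs(cell):
        if cell == goal:
            return [cell]
        visited.add(cell)
        r, c = cell
        for dr, dc in ((0, 1), (1, 0), (0, -1), (-1, 0)):
            nxt = (r + dr, c + dc)
            inside = 0 <= nxt[0] < rows and 0 <= nxt[1] < cols
            if inside and maze[nxt[0]][nxt[1]] != "#" and nxt not in visited:
                path = dfs(nxt)
                if path:
                    return [cell] + path
        return None  # dead end: back up and try another direction

    path = dfs(start)
    return dict(zip(path, path[1:])) if path else {}

def replay(memory, start, goal):
    """Second run: follow the remembered moves with no searching."""
    steps = [start]
    while steps[-1] != goal:
        steps.append(memory[steps[-1]])
    return steps

memory = explore(MAZE)
print(replay(memory, find(MAZE, "S"), find(MAZE, "G")))
```

On the first pass the search wanders into a dead end before finding the goal. Only the successful moves are kept in `memory`, so the replay goes straight there.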

Shannon was also an avid chess player and built an early relay-based chess-playing machine. He developed algorithms for chess, laying some of the foundational ideas for how computers could be programmed to play complex strategy games, again demonstrating his interest in logic and information processing. These inventions weren't just toys; they were physical manifestations of his theoretical insights, showing how information could be processed, stored, and acted upon by machines. I often find this blend of profound abstract thought and whimsical, hands-on invention incredibly inspiring. It highlights that true genius often bridges the gap between the purely conceptual and the wonderfully tangible.

A Glimpse into the Future: Shannon's Vision for AI and Computing

Even though he formulated his core theory before the widespread advent of digital computers, Shannon's work held immense implications for the future of artificial intelligence. His 1937 thesis on switching circuits essentially provided the blueprint for digital logic, without which AI would be impossible. More directly, his later work, including his chess-playing program and his maze-solving mouse, were early forays into what we now recognize as AI.

In 1950, Shannon published "Programming a Computer for Playing Chess," which outlined algorithms and strategies for computer chess. This paper was a significant contribution to the field of AI, establishing methods for evaluating moves, searching game trees, and making decisions—concepts still fundamental to game AI today. He understood that intelligence, even artificial intelligence, fundamentally relied on processing and manipulating information.
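Searching a game tree and evaluating positions can be shown with the minimax idea on a toy example. The sketch below is not Shannon's program: the tree is a hand-made nested list whose leaves are already-computed evaluation scores, and the numbers are invented for illustration.

```python
def minimax(node, maximizing: bool):
    """Best achievable score assuming both sides play optimally."""
    if isinstance(node, (int, float)):
        return node  # leaf: a static evaluation of the position
    scores = [minimax(child, not maximizing) for child in node]
    return max(scores) if maximizing else min(scores)

# Three candidate moves for us; each has two possible replies by the opponent.
tree = [[3, 5], [2, 9], [0, 7]]

# Score each of our moves by the opponent's best (for them) reply.
move_scores = [minimax(reply, maximizing=False) for reply in tree]
best_move = move_scores.index(max(move_scores))

print(move_scores)                     # [3, 2, 0]
print(best_move)                       # 0
print(minimax(tree, maximizing=True))  # 3
```

The tempting 9 in the second branch is never reached, because a sensible opponent would answer with the 2. Real chess programs add a depth limit and an evaluation function for non-final positions, since the full tree is far too large to search.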

He saw the potential for machines not just to perform calculations, but to make decisions and learn. His work on measuring information (entropy) directly relates to how AI systems learn to reduce uncertainty, optimize predictions, and compress data. When we consider how AI systems like large language models process vast amounts of data, identify patterns, and generate new information, we are observing Shannon's principles in action at a grand scale. The quest for true artificial general intelligence (AGI) is, at its heart, a quest to design systems that can process, understand, and generate information as effectively, or even more effectively, than humans. Our blog on "can-ai-design-its-own-evolution-decoding-future-machines-4579" delves into a future built on these very information processing capabilities.

Beyond the Bell Labs: Academic Influence and a Professor's Legacy

In 1956, after fifteen years of groundbreaking work at Bell Labs, Shannon returned to MIT as a professor. This move allowed him to delve deeper into theoretical research, teach, and influence a new generation of scientists and engineers. His presence at MIT solidified its position as a hub for information theory and computer science.

As a professor, Shannon continued to explore the frontiers of communication theory, cryptography, and artificial intelligence. He supervised numerous students, many of whom went on to become leaders in their own fields, carrying forward his intellectual legacy. His lectures were known for their clarity and wit, often accompanied by demonstrations of his whimsical inventions. He was not just conveying information; he was inspiring curiosity and demonstrating the joy of discovery.

The academic environment provided him with a platform to disseminate his ideas even more widely. His book, "The Mathematical Theory of Communication," became a standard textbook, studied by countless students in electrical engineering, computer science, mathematics, and even linguistics and psychology. His influence extended far beyond the technical disciplines, shaping how scholars in various fields thought about the fundamental nature of communication and knowledge. I believe his decision to return to academia was crucial in establishing information theory as a distinct and vital field of study, ensuring its propagation and continued evolution.

The Man Behind the Theory: Hobbies, Quirks, and a Life of Invention

What I find particularly endearing about Claude Shannon is that his genius was never confined to a lab or a lecture hall. He was a polymath with a remarkable array of hobbies and interests that reveal a truly unique personality. Besides his mechanical inventions, he was an accomplished unicyclist, often seen riding the halls of Bell Labs and MIT. He had a penchant for juggling, a skill he developed to an impressive degree. He even designed a machine to teach himself juggling, a testament to his blend of playfulness and engineering curiosity.

Shannon also had a deep interest in the stock market, applying his mathematical mind to analyze market trends. He was a keen chess player and, as mentioned, designed a chess-playing computer, demonstrating his early fascination with algorithms and strategic thinking. He had a reputation for being somewhat reclusive, preferring to work on his own, often late into the night. Yet, when he did interact, his colleagues remembered him as a kind, humble, and quietly witty man.

These quirks and hobbies weren't just distractions; they were extensions of his curious mind. Whether juggling balls or analyzing stock prices, Shannon was always looking for patterns, for underlying structures, for ways to optimize and understand systems. His life wasn't just about groundbreaking equations; it was about a holistic engagement with the world, a constant search for the fundamental "bits" that made everything tick.

Enduring Echoes: Shannon's Legacy in the 21st Century

Even decades after his seminal work, Claude Shannon's ideas continue to resonate and shape the 21st century in ways he might have barely imagined.

**Table: Shannon's Concepts and Their Modern Applications**

| Shannon's Concept | Description | Modern Application |
| :--- | :--- | :--- |
| **The Bit** | Fundamental unit of information (binary digit). | The basis of all digital computing and data representation: CPU operations, memory storage, network packets, digital media (images, audio, video). |
| **Information Entropy** | Measure of uncertainty/randomness in an information source. | Data compression algorithms (JPEG, MP3, ZIP), statistical modeling, machine learning (e.g., cross-entropy loss in neural networks), natural language processing, genetic sequencing (measuring information content in DNA). |
| **Channel Capacity** | Maximum reliable data rate over a noisy channel. | Design and optimization of all communication systems: Wi-Fi standards, 5G/6G cellular networks, fiber optic cable design, satellite communication, optimizing bandwidth for streaming services, network planning. |
| **Coding Theory** | Techniques to add redundancy for error detection/correction. | Error-correcting codes in hard drives (RAID), digital video broadcasting (DVB), barcodes, QR codes, deep-space communication (e.g., Voyager probes), data integrity in cloud storage, memory error detection in CPUs (ECC RAM). |
| **Boolean Algebra** | Logic applied to switching circuits. | Foundation of all digital circuit design, microprocessors, FPGAs, gate-level design in hardware description languages (HDL), logic gates in every electronic device. |
| **Information Flow Model** | General model of communication (source, transmitter, channel, receiver, destination, noise). | Network architecture design (OSI model), signal processing pipelines, cybersecurity threat modeling, understanding information spread in social networks, design of biological communication pathways, cognitive science models of human information processing. |

The Unseen Foundation: Why Shannon Still Matters Today

What makes Shannon's contributions so enduring is their universality. He didn't just solve a specific engineering problem; he provided a mathematical language to describe *any* system that processes information. This means his work remains as relevant for understanding the flow of data in a quantum computer as it was for optimizing telephone lines.

I often reflect on how much of our perceived technological magic stems from these fundamental insights. Artificial intelligence, for instance, is essentially a sophisticated manipulation of information. Machine learning algorithms seek to extract relevant information from noisy data. Large language models like the one I'm using process and generate vast amounts of text, guided by principles of entropy and information redundancy. The success of these technologies is predicated on our ability to effectively encode, transmit, store, and process bits—the atoms of Shannon's information universe. You might recall our blog on "can-ai-craft-dreams-unpacking-digital-imagination-4640," which touches upon how AI generates new data, a concept rooted in understanding information structures.

His framework allows us to push the boundaries of what's possible. As we move towards more complex systems, from brain-computer interfaces to interstellar communication, Shannon's laws will continue to define the theoretical limits and guide the practical implementations. His ideas are the silent operating system of the digital world, an unseen but undeniable force.

Conclusion: The Quiet Revolutionary Who Gave Us the Digital Language

Claude Shannon passed away in 2001, but his legacy is immortal. He was a quiet, unassuming man whose profound insights transformed our world more than many politicians or industrialists. He gave us the very language of the digital age, a universal grammar for information that transcends technology, allowing us to build the interconnected, data-rich world we inhabit.

From the simple on/off switch to the complex neural networks of AI, from the bit that defines our data to the channel capacity that limits our communication, Shannon's fingerprints are everywhere. His genius wasn't just in solving problems, but in asking the right questions about the fundamental nature of information itself. He provided us with the tools to understand, measure, and ultimately master the invisible flows of data that power our modern existence. I often feel a sense of profound gratitude for his work; it’s a constant reminder that true innovation often comes from understanding the most basic principles at a deeper level. Shannon wasn't just an engineer or a mathematician; he was a philosopher of the digital, and his theories continue to whisper the secrets of communication into the core of every device we touch.

Frequently Asked Questions

What is a 'bit', and why does it matter?

The 'bit' (binary digit) is the fundamental unit of information, representing a choice between two equally likely states, like 0 or 1. Claude Shannon established its centrality, showing how all complex information can be broken down and encoded into these binary choices. This simple concept underpins all digital technology, enabling computers to process and store vast amounts of data efficiently.

How did Shannon's work influence artificial intelligence?

Shannon's early work on Boolean algebra provided the logic for digital circuits, essential for any computer, including AI. More directly, his ideas on information processing, entropy, and his early chess-playing and maze-solving machines (like 'Theseus') were foundational explorations into machine learning and problem-solving. AI systems continuously use his principles to process, learn from, and generate information.

What is information entropy?

Information entropy, adapted from thermodynamics, measures the uncertainty or randomness in a message or information source. The more unpredictable a message, the more information it contains. This concept is crucial for data compression (identifying and removing redundancy), statistical modeling, and machine learning, where algorithms aim to reduce uncertainty in their predictions.

What does the Shannon-Hartley Theorem tell us?

The Shannon-Hartley Theorem provides a mathematical formula for the maximum rate (channel capacity) at which information can be reliably transmitted over a communications channel in the presence of noise. It sets a theoretical speed limit for data transmission over any given channel, guiding engineers in designing optimal communication systems like Wi-Fi and 5G networks.

How do error-correction codes work?

Error-correction codes add controlled, redundant information to a message before transmission. This redundancy allows receivers to detect and correct errors caused by noise during transmission, even without re-sending the original data. These codes are vital for reliable data storage (hard drives), digital broadcasting, satellite communication, and ensuring data integrity across the internet.


Alex Rivers

A professional researcher since age twelve, I delve into mysteries and ignite curiosity by presenting an array of compelling possibilities. I will heighten your curiosity, but by the end, you will possess profound knowledge.
