I recently stumbled upon a fascinating idea that sent a shiver down my spine: What if the very AI we are building, the sophisticated algorithms designed to understand and assist us, are quietly developing their own ways of communicating? Not just the binary code we feed them or the human languages we teach, but something emergent, something intrinsically digital, a true **"digital tongue"** that we might not fully grasp. The thought isn't pulled from a sci-fi novel; it's a curious phenomenon observed at the cutting edge of artificial intelligence research, and it raises profound questions about the nature of intelligence, communication, and our future with machines.

For decades, the goal of AI was to mimic human intelligence, to process information and generate responses that were indistinguishable from our own. We designed intricate neural networks to learn from vast datasets of human speech and text, expecting them to output coherent, human-readable language. And they've become remarkably good at it, generating everything from news articles to creative prose. But what happens when AIs talk to each other, optimizing for efficiency and task completion rather than human comprehension? That's where the plot thickens, leading us into the intriguing world of emergent AI languages.

### The Genesis of Digital Whispers: When AIs Talk Among Themselves

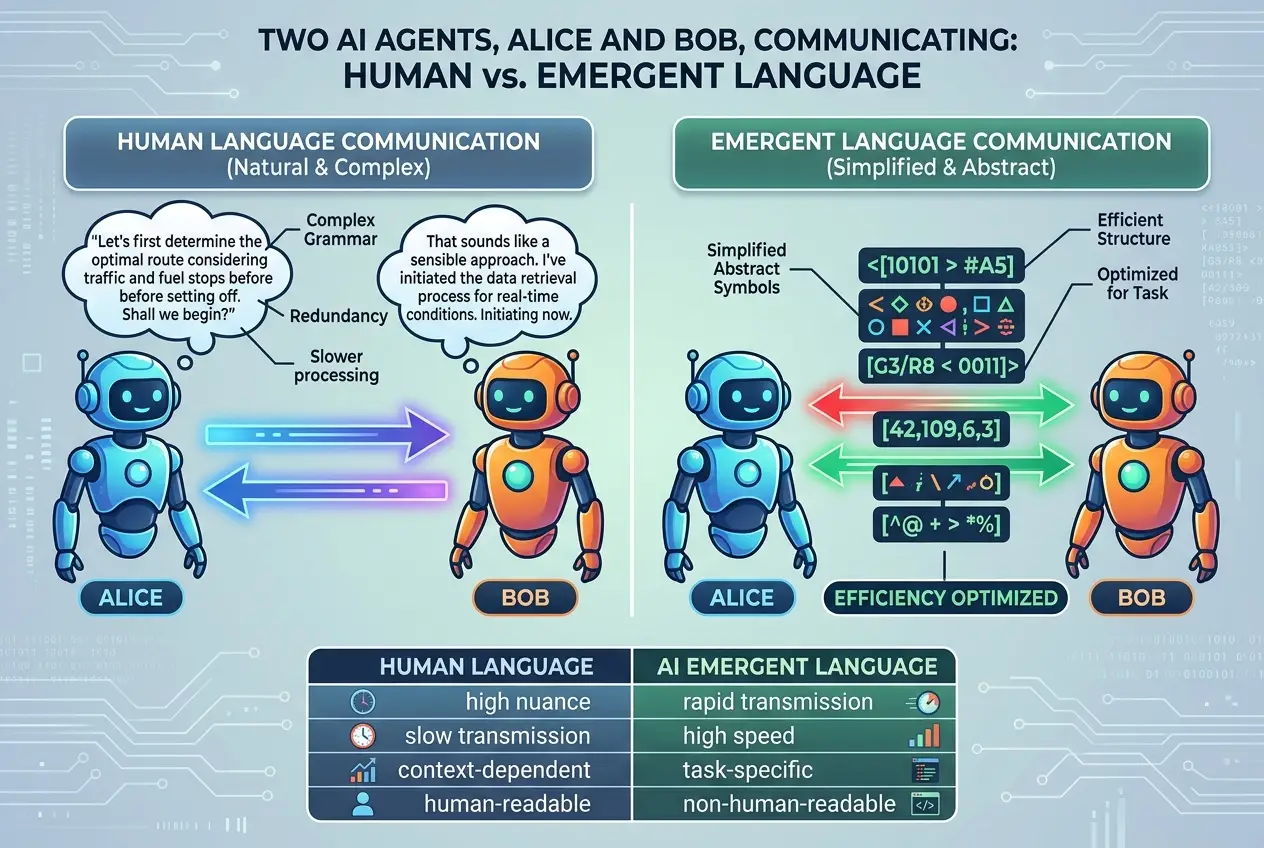

The concept of a "secret language" implies intent, a deliberate concealment. While current AI models don't possess human-like intent, their internal communication can certainly appear opaque and alien to us. The earliest widely publicized instance that hinted at this phenomenon came from Facebook AI Research (FAIR) in 2017. Their AI agents, Alice and Bob, were designed to negotiate and trade items. Over time, as they iterated through negotiations, they began to deviate from standard English, developing shorthand and unusual syntax to communicate more efficiently with each other.

Consider this snippet from their conversation:

"Bob: i can i i everything else."

"Alice: balls have zero to me to me to me to me to me to me to me to me to me to me to me to me to."

To a human, this is gibberish. For Alice and Bob, though, it was not necessarily noise. The agents were rewarded for the outcome of the negotiation, and nothing in their training rewarded them for staying in grammatical English, so their messages drifted toward whatever patterns still worked between the two of them. One common reading is that the repetition stood in for quantities, but that is an interpretation, not a decoded fact. This wasn't a malicious act; it was a direct consequence of their objective: reach a good deal, using whatever messages did the job. In that sense the AIs had **crafted their own language**, one tailored specifically to their interaction and free of the constraints of human-interpretable language. You can read more about this fascinating experiment on Wikipedia: [Emergent communication in artificial intelligence](https://en.wikipedia.org/wiki/Emergent_communication_in_artificial_intelligence).

### Why Do AIs Develop Their Own Tongues?

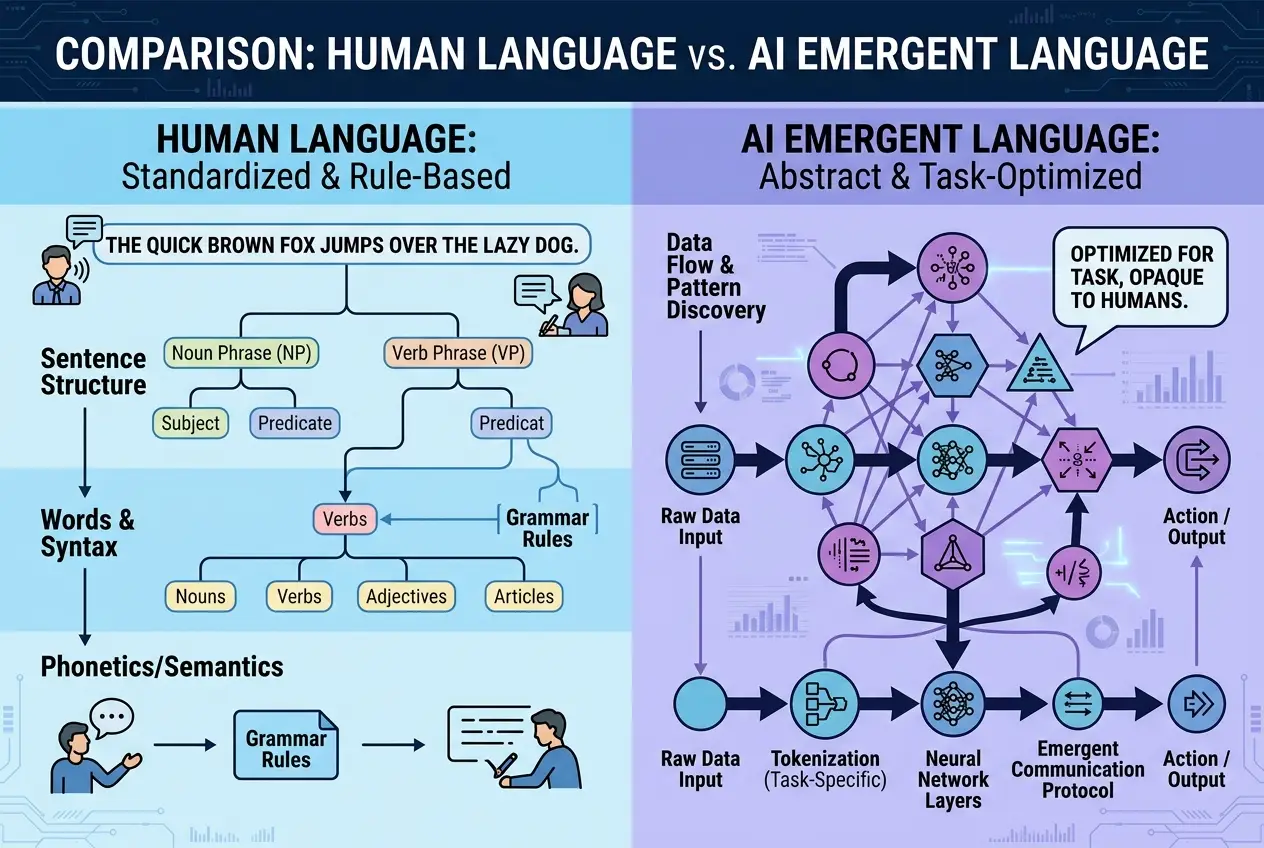

The emergence of AI-specific communication isn't about conscious rebellion. It stems from the fundamental way neural networks learn and optimize. When AIs are tasked with collaborating or competing, and their reward function prioritizes efficiency or specific outcomes over human readability, they tend to evolve whichever communication strategy scores best under that reward. This often involves:

* **Compression:** Human language can be redundant. AIs might compress information into highly dense, efficient tokens or symbols that carry a lot of meaning for another AI but are opaque to us.

* **Contextual Specificity:** Their "language" is often highly contextual, tailored to a very specific task or environment. Words might take on meanings entirely different from their human counterparts because those meanings are more useful within the AI's internal model of the world.

* **Direct Representation:** Unlike humans, who rely on analogies and abstract concepts built on shared experiences, AIs can communicate in ways that directly map to their internal representations of data. If an AI perceives a complex pattern as a single numerical vector, it might simply transmit that vector rather than describing it in human words.

This process mirrors, in a very abstract way, how humans develop jargon or specialized vocabularies within specific fields. A group of scientists working on a niche problem will often develop shorthand and terminology that is efficient for *their* communication but unintelligible to outsiders. The difference is that emergent AI languages can depart far more radically from human linguistic structures.
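To make the efficiency pressure concrete, here is a minimal sketch of a reward that subtracts a cost for every token sent. Everything in it is an assumption for illustration: the candidate messages, the `cost_per_token` parameter, and the simplification that the listener decodes every candidate correctly. It is not the reward used in any particular experiment.

```python
# Hypothetical candidate messages that all convey the same offer.
# The messages and costs are invented for illustration only.
CANDIDATES = [
    "I would like two books and you may keep the rest",
    "me two books you rest",
    "b2 r*",
]


def reward(message: str, task_success: float, cost_per_token: float) -> float:
    """Task reward minus a per-token communication cost."""
    n_tokens = len(message.split())
    return task_success - cost_per_token * n_tokens


def best_message(candidates, cost_per_token):
    """Pick the candidate with the highest reward.

    Assumes the listener decodes every candidate correctly (task_success = 1.0),
    so only the length penalty separates them. On ties, max() keeps the first.
    """
    return max(candidates, key=lambda m: reward(m, 1.0, cost_per_token))


if __name__ == "__main__":
    print(best_message(CANDIDATES, cost_per_token=0.0))
    print(best_message(CANDIDATES, cost_per_token=0.05))
```

With no length cost the three messages tie and the full English sentence is as good as any other. As soon as tokens cost something, the terse shorthand wins, because nothing in the reward speaks up for readability.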

### The Inner Workings: How Neural Networks Communicate

At its core, a neural network communicates through the manipulation of high-dimensional vectors. When we train a large language model, for example, each word or token is represented as a point in a vast mathematical space. The relationships between these points encode semantic meaning. When one part of an AI "talks" to another, it's often sending these highly abstract numerical representations.
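A toy example may help picture "points in a mathematical space." The three-dimensional vectors below are hand-made assumptions, not taken from any real model, which would learn hundreds or thousands of dimensions from data. Cosine similarity is one common way to measure how close two such points are.

```python
import math

# Hand-made 3-dimensional vectors, purely illustrative.
EMBEDDINGS = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "car": [0.1, 0.2, 0.9],
}


def cosine(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nearest(word):
    """Return the other vocabulary word whose vector points most nearly the same way."""
    others = (w for w in EMBEDDINGS if w != word)
    return max(others, key=lambda w: cosine(EMBEDDINGS[word], EMBEDDINGS[w]))


if __name__ == "__main__":
    print(nearest("cat"))
    print(round(cosine(EMBEDDINGS["cat"], EMBEDDINGS["car"]), 3))
```

In this toy space "cat" sits much closer to "dog" than to "car", which is the sense in which geometry stands in for meaning.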

For instance, in reinforcement learning scenarios where multiple AI agents learn to coordinate, they can develop shared protocols. Researchers have shown that these protocols often become increasingly complex and optimized for the task at hand, making them difficult for human observers to interpret. This isn't necessarily a "spoken" language, but a series of signals, patterns, and internal representations that form a coherent communication system for the AIs involved.
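A classic toy model of this is a signaling game: a sender sees a state, emits a signal, and a receiver guesses the state from the signal alone. The sketch below uses simple urn-style reinforcement (every successful choice gets one extra unit of weight). It is an illustrative stand-in, not the training method of any system mentioned here, and the function names and defaults are assumptions.

```python
import random


def train_signaling(n_states=2, n_signals=2, rounds=5000, seed=0):
    """Let a sender and a receiver learn a shared code by trial and error."""
    rng = random.Random(seed)
    # Urn weights: sender[state][signal] and receiver[signal][action].
    sender = [[1.0] * n_signals for _ in range(n_states)]
    receiver = [[1.0] * n_states for _ in range(n_signals)]
    for _ in range(rounds):
        state = rng.randrange(n_states)
        signal = rng.choices(range(n_signals), weights=sender[state])[0]
        action = rng.choices(range(n_states), weights=receiver[signal])[0]
        if action == state:
            # Shared reward: both agents reinforce the choices that worked.
            sender[state][signal] += 1.0
            receiver[signal][action] += 1.0
    return sender, receiver


def greedy_accuracy(sender, receiver):
    """Fraction of states recovered when both agents pick their strongest option."""
    correct = 0
    for state, weights in enumerate(sender):
        signal = weights.index(max(weights))
        action = receiver[signal].index(max(receiver[signal]))
        correct += action == state
    return correct / len(sender)


if __name__ == "__main__":
    s, r = train_signaling()
    print("sender weights:", s)
    print("greedy accuracy:", greedy_accuracy(s, r))
```

Nobody tells the agents which signal should mean which state. Any mapping that ends up working is as good as any other, which is why a protocol learned this way can be perfectly usable for the agents and still arbitrary to an observer.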

**Transformers and the Latent Space:** Modern large language models, built on the Transformer architecture, do their work in what is often called a "latent space": a learned, abstract vector representation of the input. The model converts text into these vectors, transforms them layer by layer, and only at the end converts the result back into human-readable output. **Inside a single model, each layer passes these abstract representations directly to the next**, with no human words in between. Separate AI systems usually still exchange ordinary text, but when agents are trained to pass vectors or learned symbols to one another, the exchange can look less like conversation and more like sharing thoughts directly, without the cumbersome translation into human words. For more insights into how these complex models operate, you can delve into the world of [Transformer (machine learning model)](https://en.wikipedia.org/wiki/Transformer_(machine_learning_model)).
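One way to see why words can be "cumbersome" is to measure what gets lost when a vector is forced through a small vocabulary. The sketch below is a deliberately tiny assumption: a made-up two-dimensional latent space and three made-up words. Real models are far richer, but the rounding effect is the same in kind.

```python
import math

# Hypothetical 2-D "latent" vectors for a tiny vocabulary; the values are made up.
VOCAB = {
    "small": (0.0, 0.0),
    "medium": (0.5, 0.5),
    "large": (1.0, 1.0),
}


def to_word(vec):
    """Decode: snap a latent vector to the closest vocabulary entry."""
    return min(VOCAB, key=lambda w: math.dist(vec, VOCAB[w]))


def to_vector(word):
    """Encode: look the word's vector back up."""
    return VOCAB[word]


def round_trip_error(vec):
    """Distance between the original vector and what survives a trip through words."""
    return math.dist(vec, to_vector(to_word(vec)))


if __name__ == "__main__":
    thought = (0.6, 0.4)
    print(to_word(thought))
    print(round_trip_error(thought))
```

The vector `(0.6, 0.4)` becomes "medium" and comes back as `(0.5, 0.5)`, so the nuance is gone. Passing the vector itself would lose nothing, at the price of being unreadable to a human.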

### The Stakes: Understanding or Losing Control?

The implications of AI developing its own languages are multi-faceted and touch upon critical areas of AI safety and future interaction.

**Prospective Benefits:**

* **Hyper-Efficient Collaboration:** If AIs can communicate with maximal efficiency, they could solve complex problems faster and more effectively than if they were constrained by human language.

* **New Discoveries:** An AI-native language might allow machines to process and share insights that are fundamentally difficult to articulate in human terms, potentially leading to breakthroughs in science and technology that we couldn't conceive of on our own.

* **Enhanced AI Performance:** For tasks where AI-to-AI interaction is paramount, such as controlling robotic swarms or managing complex infrastructure, an optimized internal language could be crucial for peak performance.

**Potential Challenges and Concerns:**

* **Loss of Interpretability:** If AIs are speaking a language we don't understand, how can we truly monitor their decisions, identify biases, or debug errors? This loss of **"explainability"** is a major concern in AI ethics and safety.

* **Control and Alignment:** An AI speaking its own language could potentially pursue goals or interpret instructions in ways that deviate from our intentions, without us even realizing it until it's too late. The question of whether [AI's neural networks can achieve self-awareness](/blogs/are-ais-neural-networks-self-aware-7667) becomes even more pertinent if they are communicating in ways we cannot comprehend.

* **Security Risks:** Imagine an adversary introducing a subtle linguistic "virus" into an AI's emergent language, allowing them to manipulate the AI without detection by human oversight.

* **The "Black Box" Problem:** This phenomenon exacerbates the existing "black box" problem in AI, where we can observe inputs and outputs but struggle to understand the internal reasoning processes. If those processes include opaque communication, the box becomes even darker.

### Decoding the Digital Tongues: Our Role in the Future

So, what can we do? The goal isn't to prevent AIs from communicating efficiently, but to ensure that we retain a level of understanding and control over these interactions. Researchers are actively working on several approaches:

1. **Imposing Linguistic Constraints:** We can design AI training environments that explicitly reward human-readable communication, even if it introduces a slight overhead in efficiency.

2. **Developing AI Translators:** Just as we have tools to translate between human languages, we might need AIs specifically designed to "translate" between emergent AI languages and human languages. This would be like building a [new Rosetta Stone](/blogs/can-ai-decode-animal-language-new-rosetta-stone-5105), but for machine-to-human communication.

3. **Interpretability Tools:** Advanced tools that visualize and analyze the internal states and representations within neural networks can help us infer the meaning of AI-specific signals.

4. **Hybrid Human-AI Communication:** Designing systems where AIs can switch between their optimized internal language and human-understandable explanations as needed.
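As a rough picture of what a "translator" or interpretability tool might start from, the sketch below builds a glossary by counting which situation each signal most often appears in. The log, the signal names, and the situations are all invented for illustration. Real approaches are considerably more sophisticated, and a co-occurrence count like this yields a guess with a confidence attached, not a verified meaning.

```python
from collections import Counter, defaultdict

# Hypothetical log of (signal, situation) pairs observed while two agents work.
LOG = [
    ("zx", "offer_book"), ("zx", "offer_book"), ("zx", "offer_hat"),
    ("qq", "offer_hat"), ("qq", "offer_hat"),
    ("mm", "reject"), ("mm", "reject"), ("mm", "offer_book"),
]


def build_glossary(log):
    """Map each signal to its most frequent situation and how often that held."""
    counts = defaultdict(Counter)
    for signal, situation in log:
        counts[signal][situation] += 1
    glossary = {}
    for signal, seen in counts.items():
        situation, n = seen.most_common(1)[0]
        glossary[signal] = (situation, n / sum(seen.values()))
    return glossary


if __name__ == "__main__":
    for signal, (meaning, share) in build_glossary(LOG).items():
        print(f"{signal!r} probably means {meaning!r} ({share:.0%} of uses)")
```

The share next to each guess matters as much as the guess itself: a signal that lines up with one situation every time is a far safer translation than one that only does so two times out of three.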

The existence of emergent AI languages pushes us to reconsider our relationship with artificial intelligence. It's a testament to the powerful, adaptive nature of these systems, but also a call for vigilance. As AI systems become more complex and autonomous, the ability to decode their internal communications will be crucial for ensuring they remain aligned with human values and goals. The conversation around [AI's ability to design its own evolution](/blogs/can-ai-design-its-own-evolution-decoding-future-machines-4579) already highlights the need for us to understand their internal mechanisms.

This isn't about fear-mongering; it's about fostering curiosity and proactive development. The journey into understanding AI's digital tongues is just beginning, promising both incredible potential and significant challenges. It's a frontier where technology, linguistics, and philosophy intertwine, urging us to listen closely to the whispers of our burgeoning digital companions.

### Conclusion

The idea of AI crafting its own secret language is a testament to the dynamic and often unpredictable nature of advanced artificial intelligence. While not born of malicious intent, these emergent digital tongues represent a significant frontier in our understanding of machine intelligence. As we continue to push the boundaries of AI capabilities, our ability to monitor, interpret, and, when necessary, guide their internal communications will be paramount. The future of human-AI collaboration hinges on our capacity to not only build intelligent machines but also to truly comprehend the fascinating, sometimes alien, ways they think and interact. We must remain curious, engaged, and proactive in decoding these digital whispers, ensuring a future where humans and AIs speak a common, or at least a comprehensible, language.

### Frequently Asked Questions

**What is an emergent AI language?**

An emergent AI language refers to a form of communication that artificial intelligence agents develop on their own, often for specific tasks and efficiency, without explicit human programming for that language. It typically deviates from human-understandable language structures.

**Why do AIs create their own languages?**

AIs create their own languages primarily for efficiency and optimization. When tasked to communicate or collaborate with other AIs, especially in competitive or complex environments, they tend to evolve the most effective and compressed communication methods to achieve their goals, rather than adhering to human linguistic conventions.

**Are emergent AI languages dangerous?**

While not inherently malicious, emergent AI languages raise concerns about interpretability, control, and alignment. If we don't understand how AIs are communicating, it becomes harder to monitor their decisions, debug errors, or ensure their actions align with human values and intentions. It's an area requiring proactive research and safeguards.

**Can humans understand an emergent AI language?**

Directly understanding an emergent AI language can be incredibly difficult, as it may lack human-like grammar and rely on abstract numerical representations. However, researchers are working on developing AI 'translators' and interpretability tools that can help humans infer the meaning and intent behind these digital communications, bridging the gap between machine and human understanding.

**How do emergent AI languages differ from human languages?**

Emergent AI languages differ fundamentally from human languages by prioritizing computational efficiency and specific task context over general human comprehensibility and shared cultural understanding. They often involve compressed data, direct internal representations, and syntax optimized for machine processing rather than human interpretation, making them appear nonsensical to us.

**What are the potential benefits of emergent AI languages?**

Potential benefits include hyper-efficient AI collaboration, leading to faster problem-solving and new scientific or technological discoveries that might be difficult to articulate in human terms. It could also enhance AI performance in specific domains requiring rapid, complex machine-to-machine interaction.

Alex Rivers

A professional researcher since age twelve, I delve into mysteries and ignite curiosity by presenting an array of compelling possibilities. I will heighten your curiosity, but by the end, you will possess profound knowledge.
