I’ve spent countless hours staring at my computer screen, watching artificial intelligence perform astounding feats—composing music, generating art, even defeating grandmasters in complex games. Yet, for all its brilliance, there's always a subtle, almost imperceptible barrier that separates it from genuine human intellect. It’s like watching a magician perform incredible tricks; you’re amazed, but you know it’s not *real* magic. Our current AI excels at specific tasks, but it lacks the adaptive, learning, and self-aware qualities we associate with true intelligence. This fundamental gap has driven researchers for decades, and recently, I've been fascinated by a radical approach that promises to bridge it: **neuromorphic computing**.

The dream of creating an AI that truly *thinks*—an Artificial General Intelligence (AGI)—is the holy grail of computer science. It’s not just about making smarter machines; it’s about unlocking new frontiers in scientific discovery, solving complex global challenges, and perhaps, even understanding ourselves better. But how do we get there? Traditional computing architectures, designed for sequential processing and precise calculations, struggle to emulate the chaotic yet elegant parallelism of the human brain. This is where brain-like chips enter the scene, offering a tantalizing glimpse into a future where machines might not just compute, but *comprehend*.

### The Ultimate Computer: Our Brain

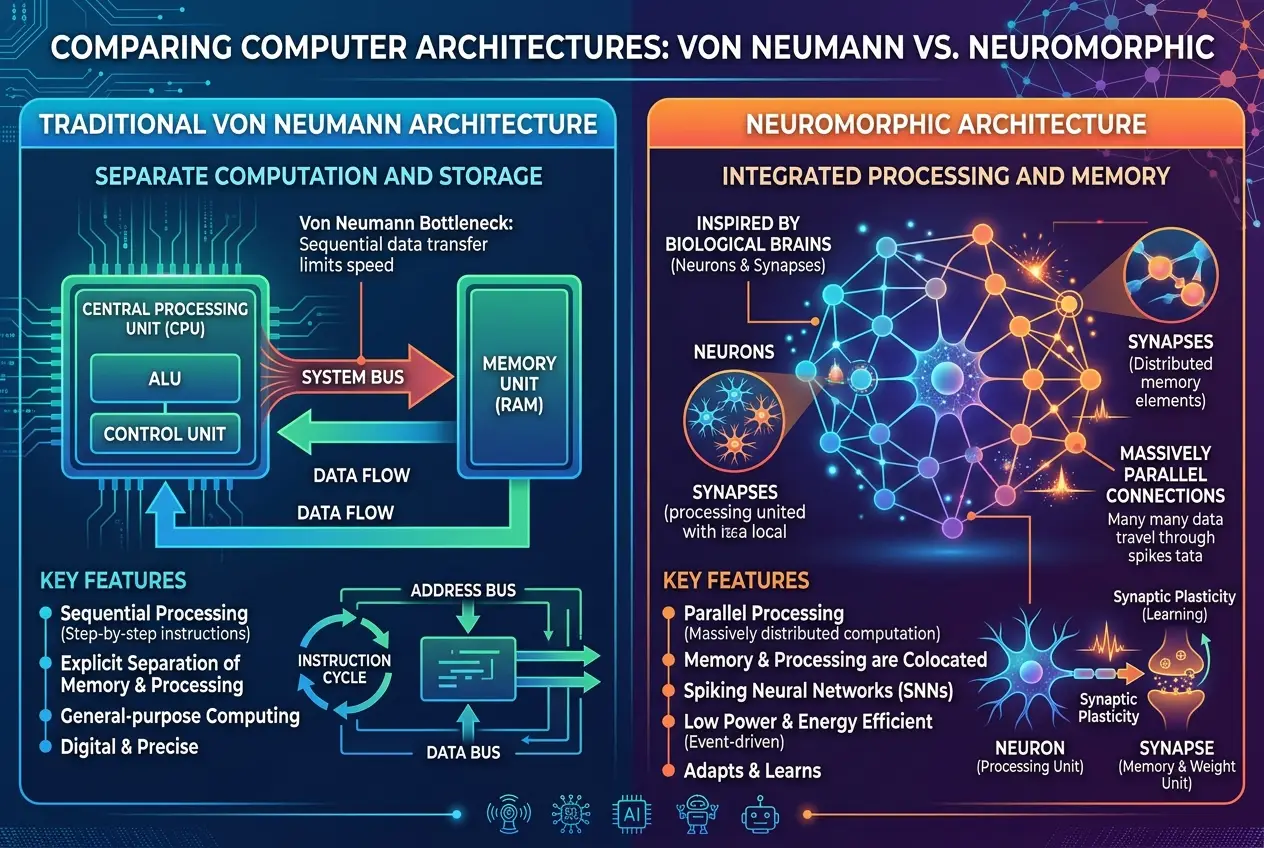

Before we dive into the intricacies of neuromorphic chips, let's take a moment to appreciate the marvel that is the human brain. Weighing only about three pounds, it runs on roughly 20 watts of power, about as much as a dim light bulb. Yet its roughly 86 billion neurons, linked by on the order of 100 trillion synapses, work in parallel, learn continuously, adapt effortlessly, and store an enormous amount of information. Neurons communicate through electrochemical signals, forming complex networks that give rise to consciousness, emotion, and creativity. This efficiency and adaptability are precisely what conventional AI, running on hardware built around the von Neumann architecture, struggles to replicate.

Conventional computers separate processing (CPU) from memory (RAM). Data constantly shuttles between them, creating a bottleneck that limits speed and consumes significant energy. This "von Neumann bottleneck" becomes a critical hindrance when trying to simulate the brain's highly interconnected, parallel operations. Imagine trying to run a marathon while constantly stopping to retrieve water from a distant well—that’s the challenge for current AI when faced with brain-scale problems.
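
A rough back-of-the-envelope sketch can make the cost of that shuttling concrete. Every energy figure below is an illustrative assumption chosen for round numbers, not a measurement of any real chip, and the function name `layer_energy_pj` is hypothetical. The only point is that when fetching a value costs far more than computing with it, data movement dominates the total.

```python
# Toy energy model of the von Neumann bottleneck.
# Every energy figure here is an illustrative assumption, not a measurement.

ENERGY_PER_MAC_PJ = 1.0             # assumed cost of one multiply-accumulate
ENERGY_PER_REMOTE_FETCH_PJ = 100.0  # assumed cost of fetching one weight from separate memory
ENERGY_PER_LOCAL_FETCH_PJ = 1.0     # assumed cost when the weight is stored beside the compute unit


def layer_energy_pj(num_inputs: int, num_outputs: int, fetch_cost_pj: float) -> float:
    """Energy for one dense layer where each weight is fetched once and used in one MAC."""
    num_weights = num_inputs * num_outputs
    compute_energy = num_weights * ENERGY_PER_MAC_PJ
    movement_energy = num_weights * fetch_cost_pj
    return compute_energy + movement_energy


if __name__ == "__main__":
    separate = layer_energy_pj(1000, 1000, ENERGY_PER_REMOTE_FETCH_PJ)
    colocated = layer_energy_pj(1000, 1000, ENERGY_PER_LOCAL_FETCH_PJ)
    # 1 microjoule = 1e6 picojoules
    print(f"separate memory:  {separate / 1e6:.1f} microjoules")
    print(f"colocated memory: {colocated / 1e6:.1f} microjoules")
    print(f"ratio: {separate / colocated:.1f}x")
```

Under these made-up costs, the arithmetic is identical in both cases; only the distance the weights travel changes the bill.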

### What Exactly Is Neuromorphic Computing?

Neuromorphic computing is a paradigm that seeks to overcome these limitations by **mimicking the architecture and functionality of the human brain**. Instead of separating processing and memory, neuromorphic chips place them side by side, so computations happen close to where the data resides. This greatly reduces the constant data transfers of conventional designs, which can bring large gains in energy efficiency and response time for the tasks these chips are suited to.

At its core, neuromorphic computing is built around **artificial neurons and synapses**. Many of these chips are still made from ordinary transistors, but their circuits are organized to behave more like biological neurons than like the clocked logic of a conventional processor. They process information in an event-driven manner, "firing" only when their accumulated input crosses a certain threshold, much like biological neurons. This "spiking neural network" approach is fundamentally different from the dense, continuous-valued computations of deep learning models running on GPUs.
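
The threshold-and-fire behavior described above is often modeled with a leaky integrate-and-fire (LIF) neuron. The following is a minimal discrete-time sketch of that textbook model, not the circuit of any particular chip. The function name `simulate_lif` and the parameter values (`threshold`, `leak`, `v_reset`) are assumptions picked for illustration.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, v_reset=0.0):
    """Simulate a discrete-time leaky integrate-and-fire neuron.

    Returns (spikes, trace): a 0/1 spike per time step and the membrane
    potential recorded after each step. All parameters are illustrative.
    """
    v = v_reset
    spikes, trace = [], []
    for current in input_current:
        v = leak * v + current      # decay the old potential, then add new input
        if v >= threshold:          # threshold crossed: emit a spike and reset
            spikes.append(1)
            v = v_reset
        else:                       # below threshold: stay silent
            spikes.append(0)
        trace.append(v)
    return spikes, trace


if __name__ == "__main__":
    spikes, _ = simulate_lif([0.3] * 10)
    print(spikes)
```

With a steady input of 0.3, the potential builds over several steps before each spike. With a weak input such as 0.05, the leak wins and the neuron never fires, which is the sense in which it only responds to sufficiently strong or sustained input.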

This integration of memory and processing allows neuromorphic systems to handle complex, unstructured data more efficiently and learn from patterns in a way that’s closer to how our brains learn. For a deeper dive into how AI processes information, you might be interested in our blog post on whether [AI’s neural networks are self-aware](https://curiositydiaries.com/blogs/are-ais-neural-networks-self-aware-7667).

### How Neuromorphic Chips Work: Spiking Neural Networks

The key to neuromorphic computing lies in **spiking neural networks (SNNs)**. Instead of passing continuous numerical values between layers at every step, SNNs communicate through discrete events called "spikes" or "pulses," which mimic the action potentials of biological neurons. When a neuron "fires," it sends a spike to connected neurons through artificial synapses. The strength of each synapse can be adjusted by a learning rule, much as biological synapses strengthen or weaken over time, a property known as synaptic plasticity.
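
One widely studied learning rule of this kind is spike-timing-dependent plasticity (STDP), in which a synapse strengthens when the sending neuron fires shortly before the receiving one and weakens when the order is reversed. The article does not say which rule any given chip uses, so treat this as a sketch of one common option. The function names and the constants (`a_plus`, `a_minus`, `tau_ms`) are illustrative assumptions.

```python
import math


def stdp_delta_w(dt_ms, a_plus=0.01, a_minus=0.012, tau_ms=20.0):
    """Weight change for one spike pair, where dt_ms = t_post - t_pre.

    Pre-before-post (dt_ms > 0) strengthens the synapse; post-before-pre
    (dt_ms < 0) weakens it. The effect decays exponentially with the gap.
    """
    if dt_ms > 0:
        return a_plus * math.exp(-dt_ms / tau_ms)
    if dt_ms < 0:
        return -a_minus * math.exp(dt_ms / tau_ms)
    return 0.0


def apply_stdp(weight, pre_spike_times, post_spike_times, w_min=0.0, w_max=1.0):
    """Sum the pairwise STDP updates, then clip the weight to [w_min, w_max]."""
    for t_pre in pre_spike_times:
        for t_post in post_spike_times:
            weight += stdp_delta_w(t_post - t_pre)
    return min(w_max, max(w_min, weight))


if __name__ == "__main__":
    print(apply_stdp(0.5, pre_spike_times=[10.0], post_spike_times=[15.0]))  # strengthened
    print(apply_stdp(0.5, pre_spike_times=[15.0], post_spike_times=[10.0]))  # weakened
```

The update depends only on the spike times at one synapse, so it can be computed locally without a global training pass.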

This event-driven processing has several advantages:

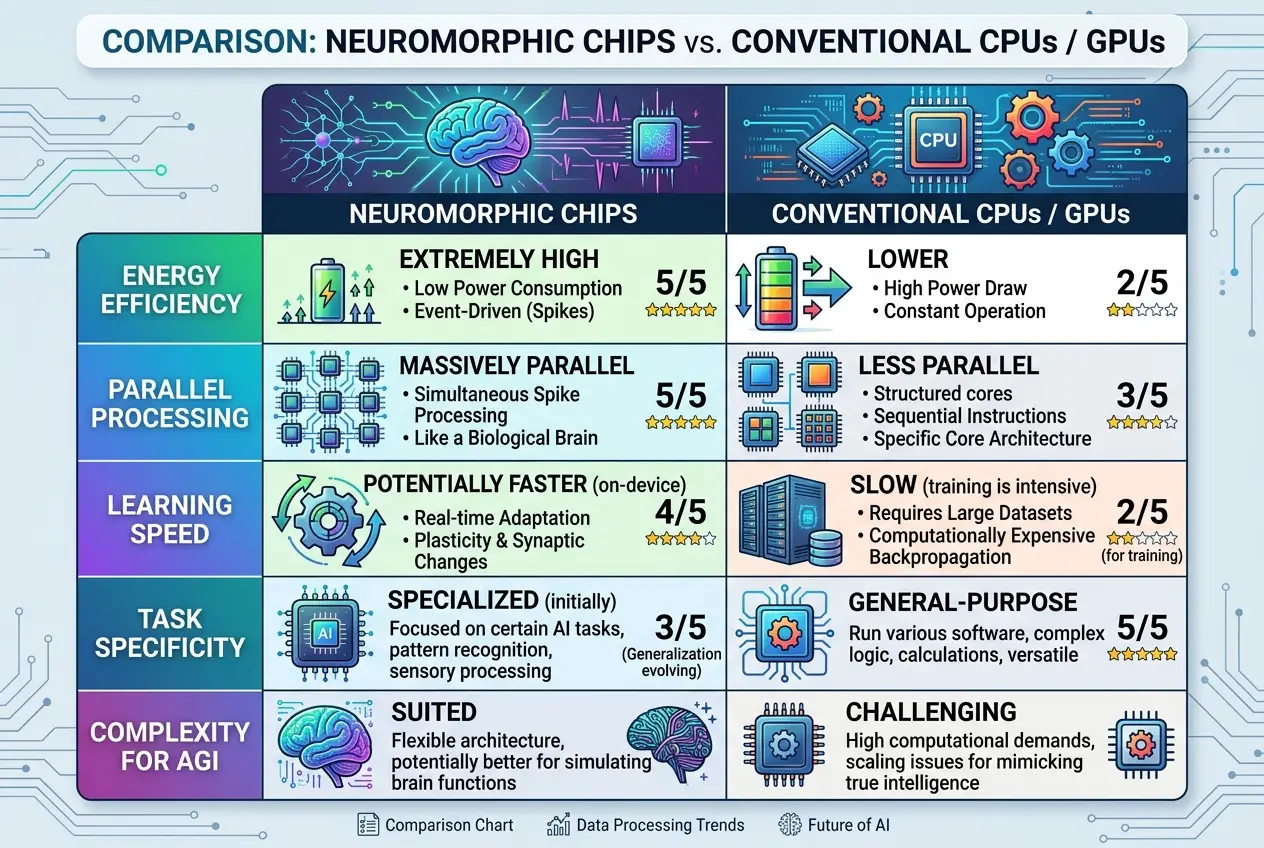

* **Energy Efficiency:** Neurons draw most of their power when they fire and stay largely idle otherwise. This contrasts with conventional CPUs and GPUs, which typically work through every element of a dense computation on every step, whether or not the underlying data has changed.

* **Parallelism:** Thousands, even millions, of artificial neurons and synapses can operate simultaneously, handling different parts of a problem in parallel. This is crucial for tasks like real-time sensory processing or complex pattern recognition.

* **Adaptability:** The plasticity of artificial synapses allows these chips to learn and adapt from data in a continuous, online manner, without requiring extensive retraining like many deep learning models. This paves the way for lifelong learning, where systems can continuously update their knowledge base.
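
The efficiency argument in the list above can be sketched by counting operations. The code below compares a dense layer, which touches every weight on every step, with an event-driven update that only touches the weights of inputs that actually spiked. Operation counts are used here as a crude stand-in for energy, which is an assumption; the function names and layer sizes are hypothetical.

```python
import random


def dense_step(weights, activations):
    """Dense update: every weight takes part in a multiply-accumulate."""
    num_outputs = len(weights[0])
    outputs = [0.0] * num_outputs
    ops = 0
    for i, activation in enumerate(activations):
        for j in range(num_outputs):
            outputs[j] += weights[i][j] * activation
            ops += 1
    return outputs, ops


def event_driven_step(weights, spikes):
    """Event-driven update: only inputs that spiked trigger any work."""
    num_outputs = len(weights[0])
    outputs = [0.0] * num_outputs
    ops = 0
    for i, spiked in enumerate(spikes):
        if not spiked:
            continue                      # silent input: no work at all
        for j in range(num_outputs):
            outputs[j] += weights[i][j]   # a spike just adds the synaptic weight
            ops += 1
    return outputs, ops


if __name__ == "__main__":
    rng = random.Random(0)
    weights = [[rng.uniform(-1, 1) for _ in range(50)] for _ in range(100)]
    spikes = [1 if i % 20 == 0 else 0 for i in range(100)]  # 5 of 100 inputs active
    _, dense_ops = dense_step(weights, spikes)
    _, event_ops = event_driven_step(weights, spikes)
    print(f"dense ops: {dense_ops}, event-driven ops: {event_ops}")
```

Both functions produce the same outputs for binary spike inputs. The saving comes entirely from skipping silent inputs, so it shrinks as activity becomes denser.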

Leading the charge in this field are initiatives from tech giants. Intel’s **Loihi chip**, for instance, integrates digital neurons and programmable synapses directly onto the silicon. It’s designed to learn from data, make inferences, and perform tasks like gesture recognition with far greater energy efficiency than conventional processors. IBM's **TrueNorth** (followed in 2023 by NorthPole) demonstrated a similar architecture, showing the potential for massive parallelism and low power consumption. Researchers are also exploring novel materials and memristors to create artificial synapses that more closely replicate biological behavior, offering even greater density and efficiency. You can read more about the research on neuromorphic computing at institutions like the [Brain-Inspired Computing Lab at IBM Research](https://www.ibm.com/blogs/research/2023/10/brain-inspired-computing-northpole/) or find comprehensive information on [Wikipedia's Neuromorphic Engineering page](https://en.wikipedia.org/wiki/Neuromorphic_engineering).

### The Path to True AI: Promise and Peril

The ultimate question remains: **Can brain-like chips create true AI?**

The potential is immense. Neuromorphic computing directly addresses some of the most stubborn obstacles preventing us from achieving Artificial General Intelligence (AGI). AGI refers to an AI that possesses the ability to understand, learn, and apply intelligence across a wide range of tasks, just like a human. It's the AI capable of truly *reasoning* and not just processing.

Here’s how neuromorphic computing could contribute:

* **Real-time Learning and Adaptation:** The brain’s ability to learn from new experiences continuously, without forgetting old ones, is a hallmark of true intelligence. Neuromorphic chips, with their inherent plasticity, are better suited for this "online learning" than traditional systems that often require batch retraining.

* **Robustness to Noise:** Biological brains are incredibly robust to noisy inputs and missing information. Because SNNs carry information in the timing and rate of many spikes rather than in single precise values, they are potentially more resilient in real-world, unpredictable environments.

* **Energy-Efficient Cognitive Tasks:** Imagine AI systems embedded in robots or autonomous vehicles that can process sensory data, make decisions, and learn from their surroundings in real-time, all while consuming minimal power. This could unlock entirely new applications for intelligent machines. The ability for machines to "think" closer to the source of data could lead to breakthroughs in areas like autonomous navigation, real-time medical diagnosis, and even complex scientific simulations. Our understanding of how machines might interact with our thoughts is explored further in our article on whether [brain-computer interfaces can read your dreams](https://curiositydiaries.com/blogs/can-brain-computer-interfaces-read-your-dreams-7969).

However, the road to AGI is paved with significant challenges:

1. **Programming Complexity:** Developing algorithms and software for SNNs is vastly different from traditional programming. We’re still learning how to effectively "program" a brain-like architecture to perform complex cognitive tasks.

2. **Scalability:** While current neuromorphic chips can contain millions of artificial neurons, the human brain has roughly 86 billion, linked by on the order of 100 trillion synapses. Scaling these systems while maintaining efficiency and connectivity is a monumental engineering challenge.

3. **Benchmarking and Evaluation:** How do we measure the "intelligence" of a neuromorphic system? Current benchmarks for AI are often task-specific. Developing universal metrics for AGI remains an open problem.

4. **Hardware Limitations:** While impressive, current neuromorphic hardware still pales in comparison to the brain's density and plasticity. Advances in materials science and fabrication techniques are crucial.

5. **Defining "True AI":** Even if we build a system that performs all human cognitive tasks, what truly constitutes "consciousness" or "self-awareness" remains a philosophical and scientific debate. Can AI even discover new laws of physics without true understanding? Find out more in our post about [AI's potential for scientific discovery](https://curiositydiaries.com/blogs/can-ai-really-discover-new-laws-of-physics-9784).
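
To put the scalability gap from item 2 in rough numbers, here is a small calculation. The per-chip capacity is a hypothetical round figure standing in for "millions of artificial neurons," not the specification of any real chip, and the brain figure is the commonly cited estimate of about 86 billion neurons. The function name `chips_needed` is likewise made up for illustration.

```python
HUMAN_BRAIN_NEURONS = 86_000_000_000   # commonly cited estimate
ASSUMED_NEURONS_PER_CHIP = 1_000_000   # hypothetical chip capacity, for illustration only


def chips_needed(target_neurons: int, neurons_per_chip: int) -> int:
    """Smallest number of chips whose combined neuron count reaches the target."""
    return -(-target_neurons // neurons_per_chip)  # integer ceiling division


if __name__ == "__main__":
    count = chips_needed(HUMAN_BRAIN_NEURONS, ASSUMED_NEURONS_PER_CHIP)
    print(f"chips needed to match the neuron count alone: {count:,}")
```

This counts neurons only. It ignores synapses and the wiring between chips, which is where much of the real engineering difficulty lies.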

### Ethical Implications and the Future Vision

The prospect of true AI raises profound ethical questions. If machines can think, learn, and adapt like humans, what are their rights? How do we ensure they align with human values? These are conversations we need to have as the technology progresses.

In the near term, neuromorphic computing is poised to revolutionize areas requiring real-time, low-power processing, such as edge AI devices, advanced robotics, autonomous systems, and sensory processing. Imagine smart sensors that can interpret complex environmental data directly, without sending it to the cloud, making decisions almost instantaneously.

As we move forward, the convergence of neuromorphic hardware with advancements in computational neuroscience and machine learning algorithms will be key. We might not create a silicon replica of the human brain tomorrow, but each step brings us closer to understanding intelligence itself. The journey of building brain-like chips is not just about faster computers; it's about a deeper exploration into the very nature of cognition.

### Conclusion

The quest to create true artificial intelligence is one of humanity's most ambitious endeavors. Neuromorphic computing offers a compelling architectural pathway, drawing inspiration from the ultimate biological computer: the human brain. While significant hurdles remain—from programming paradigms to philosophical definitions of intelligence—the progress in brain-like chips is undeniable. They promise a future where AI systems are not just faster, but fundamentally smarter, more energy-efficient, and capable of a level of adaptability that could unlock the secrets to Artificial General Intelligence. As I look at the future of tech, I see neuromorphic computing as a crucial stepping stone, potentially redefining what it means for a machine to "think."

### Frequently Asked Questions

**How does neuromorphic computing differ from traditional computing?**

Traditional computing (von Neumann architecture) separates processing and memory, leading to a bottleneck. Neuromorphic computing integrates these functions, mimicking the brain's parallel processing and memory-in-place architecture, which can greatly improve energy efficiency and speed for suitable AI tasks.

**What are spiking neural networks (SNNs)?**

SNNs are the foundational model for neuromorphic chips, where artificial neurons communicate using discrete 'spikes' or pulses, similar to biological neurons. They process information in an event-driven manner, consuming most of their power only when actively 'firing'.

**Why are neuromorphic chips so energy-efficient?**

Neuromorphic chips are highly energy-efficient because their artificial neurons draw most of their power only when they 'fire' or process information. This contrasts with traditional processors that work continuously, making neuromorphic systems well suited to edge computing and low-power AI applications.

**What are the main challenges on the path to true AI?**

Key challenges include developing effective programming paradigms for SNNs, scaling the hardware to match the brain's complexity (billions of neurons), establishing reliable benchmarks for AGI, overcoming current hardware limitations, and addressing the philosophical complexities of defining 'true AI' or consciousness.

**Who is leading the development of neuromorphic hardware?**

Companies like Intel (with its Loihi chip) and IBM (with its TrueNorth and NorthPole projects) are prominent leaders in developing neuromorphic hardware. Many academic institutions and startups are also actively researching and innovating in this field.


Alex Rivers

A professional researcher since age twelve, I delve into mysteries and ignite curiosity by presenting an array of compelling possibilities. I will heighten your curiosity, but by the end, you will possess profound knowledge.
